Paper:

Data Augmentation for Semantic Segmentation Using a Real Image Dataset Captured Around the Tsukuba City Hall

Yuriko Ueda*, Miho Adachi*, Junya Morioka*, Marin Wada*, and Ryusuke Miyamoto**

*Department of Computer Science, Graduate School of Science and Technology, Meiji University

1-1-1 Higashimita, Tama-ku, Kawasaki, Kanagawa 214-8571, Japan

**Department of Computer Science, School of Science and Technology, Meiji University

1-1-1 Higashimita, Tama-ku, Kawasaki, Kanagawa 214-8571, Japan

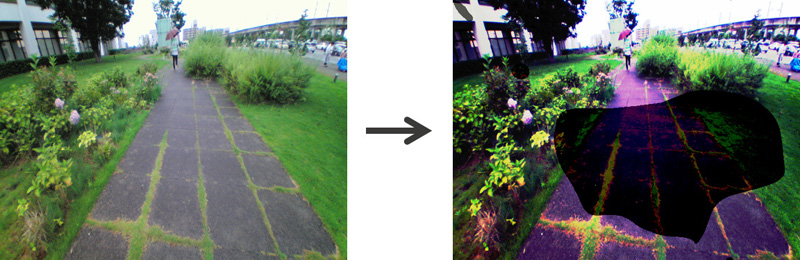

We are exploring the use of semantic scene understanding for autonomous navigation in the Tsukuba Challenge. However, high accuracy in semantic segmentation requires a comprehensive dataset covering diverse outdoor scenes under varying times of day and weather, and creating such a dataset manually is onerous. We therefore propose modifications to the segmentation model and its backbone, together with data augmentation techniques. The augmentation techniques, namely the addition of virtual shadows, histogram matching, and style transformation, aim to better represent variations in shadow presence and color tone. In an evaluation using images from the Tsukuba Challenge course, the highest accuracy was achieved by switching the model to PSPNet and the backbone to ResNeXt. Furthermore, applying virtual shadows and histogram matching proved effective for classes critical to robot navigation, such as road, sidewalk, and terrain. In particular, the combination of histogram matching and shadow application was effective for data not included in the base training dataset.

An example of an augmented image


This article is published under a Creative Commons Attribution-NoDerivatives 4.0 International License.