Paper:

A Remote Rehabilitation and Evaluation System Based on Azure Kinect

Tai-Qi Wang*, Yu You*, Keisuke Osawa*, Megumi Shimodozono**, and Eiichiro Tanaka***

*Graduate School of Information, Production and Systems, Waseda University

2-7 Hibikino, Wakamatsu-ku, Kitakyushu, Fukuoka 808-0135, Japan

**Graduate School of Medical and Dental Sciences, Kagoshima University

8-35-1 Sakuragaoka, Kagoshima, Kagoshima 890-8544, Japan

***Faculty of Science and Engineering, Waseda University

2-7 Hibikino, Wakamatsu-ku, Kitakyushu, Fukuoka 808-0135, Japan

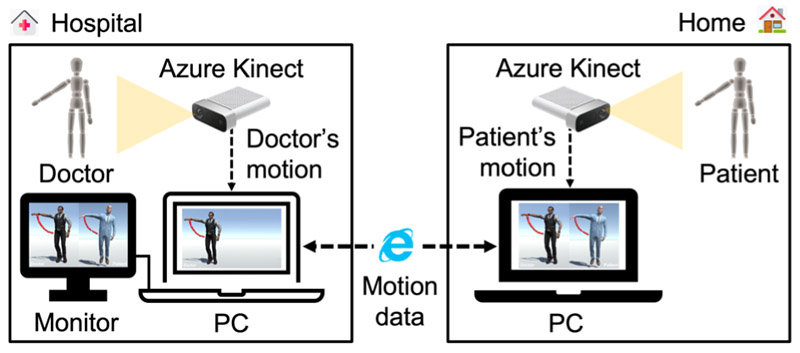

To address the shortage, uneven distribution, and high cost of rehabilitation resources during the COVID-19 pandemic, we developed a low-cost, easy-to-use remote rehabilitation system that lets patients perform rehabilitation training at home while receiving real-time guidance from doctors. The proposed system uses Azure Kinect to capture motion, with an error of only 3% relative to professional motion capture systems. It also evaluates rehabilitation training automatically, assessing both motion angles and trajectories. After acquiring the user’s 3D motions, the system synchronizes them to a virtual human body model in Unity with an average error of less than 1%, giving the user a more intuitive and interactive experience. A series of evaluation experiments verified the usability, convenience, and high accuracy of the system, and we conclude that it can be used in practical rehabilitation applications.

Schematic of the remote rehabilitation and evaluation system
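The abstract mentions evaluating motion angles and reporting errors as percentages relative to a reference system. As a minimal sketch of what such a computation could look like, the Python below derives the angle at a joint from three 3D joint positions and computes a relative error against a reference measurement. The function names, the choice of joints, and the sample coordinates are illustrative assumptions, not the authors' implementation, and the paper's actual evaluation method may differ.

```python
import math


def joint_angle_deg(a, b, c):
    """Return the angle in degrees at joint b, between segments b->a and b->c.

    a, b, c are (x, y, z) positions, e.g. shoulder, elbow, wrist.
    Hypothetical helper for illustration only.
    """
    ba = [a[i] - b[i] for i in range(3)]
    bc = [c[i] - b[i] for i in range(3)]
    dot = sum(x * y for x, y in zip(ba, bc))
    norm = math.sqrt(sum(x * x for x in ba)) * math.sqrt(sum(x * x for x in bc))
    if norm == 0.0:
        raise ValueError("two joints coincide; the angle is undefined")
    # Clamp to [-1, 1] to guard against floating-point drift before acos.
    cos_theta = max(-1.0, min(1.0, dot / norm))
    return math.degrees(math.acos(cos_theta))


def relative_error_percent(measured, reference):
    """Return |measured - reference| as a percentage of |reference|."""
    if reference == 0:
        raise ValueError("reference must be non-zero")
    return abs(measured - reference) / abs(reference) * 100.0


if __name__ == "__main__":
    # Made-up coordinates in metres, chosen so the elbow forms a right angle.
    shoulder, elbow, wrist = (0.0, 0.3, 0.0), (0.0, 0.0, 0.0), (0.25, 0.0, 0.0)
    angle = joint_angle_deg(shoulder, elbow, wrist)
    print(f"elbow angle: {angle:.1f} deg")
```

Under these assumptions, a sensor-derived angle and the angle from a reference motion capture system could be passed to `relative_error_percent` to obtain a figure comparable in form to the percentages quoted in the abstract.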


This article is published under a Creative Commons Attribution-NoDerivatives 4.0 International License.