Paper:

Active Sound Source Localization by Pinnae with Recursive Bayesian Estimation

Wataru Odo*1, Daisuke Kimoto*2, Makoto Kumon*3, and Tomonari Furukawa*4

*1Sumitomo Chemical Co., Ltd.

2200 Tsurusaki, Oita 870-0106, Japan

*2Daihatsu Motor Kyushu Co., Ltd.

1 Showashinden, Nakatsu, Oita 879-0107, Japan

*3Kumamoto University

2-39-1 Kurokami, Chuo-ku, Kumamoto 860-8555, Japan

*4Virginia Tech

Blacksburg, VA 24061, USA

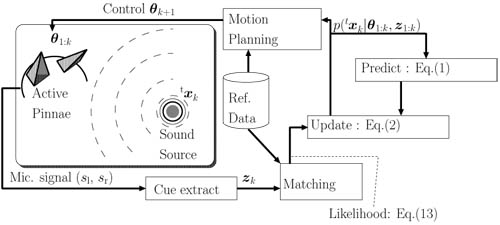

Schematic of the proposed system for actively localizing the sound source

This article is published under a Creative Commons Attribution-NoDerivatives 4.0 International License.