Paper:

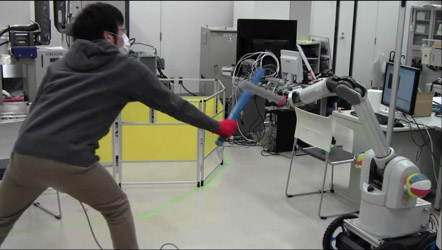

Motion Generation for a Sword-Fighting Robot Based on Quick Detection of Opposite Player’s Initial Motions

Akio Namiki and Fumiyasu Takahashi

Graduate School of Engineering, Chiba University

1-33 Yayoi-cho, Inage-ku, Chiba-shi, Chiba 263-8522, Japan

Defensive motion against attack

This article is published under a Creative Commons Attribution-NoDerivatives 4.0 International License.