Paper:

Ridge-Structure-Based Crop Classification for Small UGV and Derived Detection Framework

Yusuke Iuchi, Soki Nishiwaki, Fan Yi, Takuma Shoji, Ahmad Aizad Bin Azam, and Takanori Emaru

Hokkaido University

Kita 13, Nishi 8, Kita-ku, Sapporo, Hokkaido 060-8628, Japan

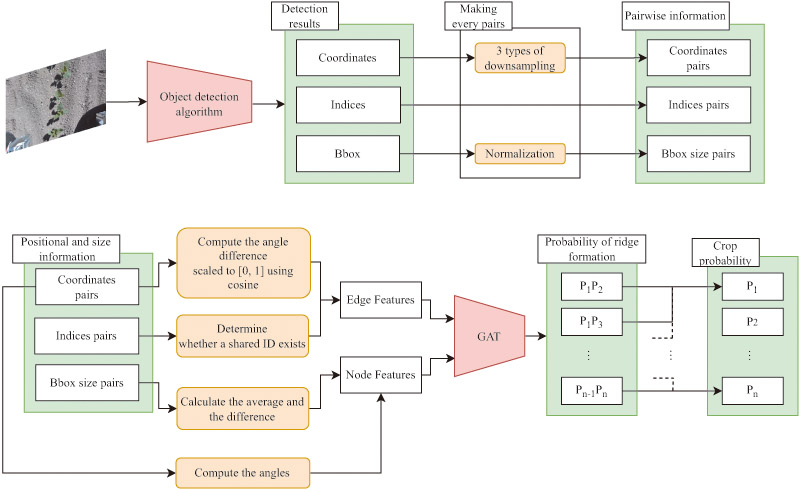

In crop detection, ridge structures provide crucial cues for classifying crops and weeds. However, ridge structures are difficult to obtain for unmanned ground vehicles (UGVs), which can capture images only within a narrow field of view. This study proposes a lightweight algorithm that enables a model to implicitly infer the ridge structure from plant-to-plant spatial relationships and sizes. An object detector first detects each plant. The resulting bounding boxes are treated as pairwise features in the nodes. Metainformation indicating whether two nodes share the same ID is combined with their geometric relationships and encoded as edge features. A graph attention network attends to these relationships to infer and propagate ridge-aware regularities. By understanding the structure only from object relationships, the method compensates for the information lost to the limited field of view without any explicit edge structure input. In experiments in which we deliberately introduced a domain shift between the training/validation sets and the test set, the proposed method raised mAP50 from the baseline's 30.6% to 44.4%, an increase of up to 13.8 percentage points. In addition, the proposed method requires only approximately 10 ms/frame on a Jetson AGX Orin to classify plants. The method acquires ridge structures internally without relying on external sensors or hand-tuned thresholds, and thus shows potential for in-field agricultural applications such as autonomous weeding.

Overview of the proposed framework
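The graph construction outlined in the abstract can be illustrated with a minimal sketch. It is not the authors' implementation: the abstract does not specify the feature set, the neighbor rule, or the network layout, so everything below is an assumption made for illustration. The function names `build_plant_graph` and `attention_step`, the k-nearest-neighbor edges, the particular node features (box center and size), the edge features (offset, distance, log area ratio, same-ID flag), and the random weights are all hypothetical.

```python
import numpy as np

def build_plant_graph(boxes, ids, k=2):
    """boxes: (N, 4) array of [x1, y1, x2, y2]; ids: (N,) integer labels.
    Returns node features, a directed edge index, and edge features.
    The feature choices here are illustrative assumptions."""
    boxes = np.asarray(boxes, dtype=float)
    ids = np.asarray(ids)
    centers = (boxes[:, :2] + boxes[:, 2:]) / 2.0
    sizes = boxes[:, 2:] - boxes[:, :2]
    nodes = np.hstack([centers, sizes])               # (N, 4): cx, cy, w, h

    # Pairwise center offsets and distances; a node is never its own neighbor.
    diff = centers[None, :, :] - centers[:, None, :]  # diff[i, j] = c_j - c_i
    dist = np.linalg.norm(diff, axis=-1)
    np.fill_diagonal(dist, np.inf)

    k = min(k, len(boxes) - 1)
    neighbors = np.argsort(dist, axis=1)[:, :k]
    src = np.repeat(np.arange(len(boxes)), k)         # receiving node i
    dst = neighbors.reshape(-1)                       # its neighbor j

    area = sizes[:, 0] * sizes[:, 1]
    edges = np.stack([
        diff[src, dst, 0],                            # horizontal offset
        diff[src, dst, 1],                            # vertical offset
        dist[src, dst],                               # center distance
        np.log(area[dst] / area[src]),                # relative plant size
        (ids[src] == ids[dst]).astype(float),         # same-ID flag
    ], axis=1)
    return nodes, np.stack([src, dst]), edges

def attention_step(nodes, edge_index, edges, w_node, w_edge, a):
    """One single-head, GAT-style aggregation that also uses edge features."""
    src, dst = edge_index
    h = nodes @ w_node                                # (N, D) projected nodes
    e = edges @ w_edge                                # (E, D) projected edges
    z = np.concatenate([h[src], h[dst], e], axis=1) @ a
    z = np.where(z > 0, z, 0.2 * z)                   # LeakyReLU
    out = np.zeros_like(h)
    for i in range(len(nodes)):
        mask = src == i
        if not mask.any():
            continue
        s = np.exp(z[mask] - z[mask].max())           # stable softmax
        alpha = s / s.sum()
        out[i] = (alpha[:, None] * (h[dst[mask]] + e[mask])).sum(axis=0)
    return out

# Three plants along a row plus one small off-row plant (made-up boxes).
rng = np.random.default_rng(0)
boxes = [[0, 0, 10, 10], [20, 0, 30, 10], [40, 0, 50, 10], [22, 30, 26, 34]]
ids = [0, 0, 0, 1]
nodes, edge_index, edges = build_plant_graph(boxes, ids, k=2)
out = attention_step(nodes, edge_index, edges,
                     rng.normal(size=(4, 8)), rng.normal(size=(5, 8)),
                     rng.normal(size=24))
print(out.shape)
```

In a trained model the weights would be learned rather than random, and the updated node embeddings would feed a crop/weed classifier. The sketch only shows how row-like regularities can enter through pairwise offsets and relative sizes, without an explicit ridge map.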

This article is published under a Creative Commons Attribution-NoDerivatives 4.0 International License.