Development Report:

Development of a Quadruped Robot System for Load-Carrying Support in Orchard Operations

Ryoma Kataoka*, Mitsuki Aratani*, Kazutoshi Hamada**, and Toru Kurihara*

*Kochi University of Technology

185 Miyanokuchi, Tosayamada, Kami, Kochi 782-8502, Japan

**Kochi University

200 Monobe Otsu, Nankoku, Kochi 783-8502, Japan

Harvesting and thinning in orchards involve intensive fruit transport, which is inefficient and burdensome, particularly in mountainous and hilly areas. Heavy vehicles can damage soils and roots, whereas manual transport is labor-intensive. We developed a quadruped robot system capable of stable walking on uneven terrain. Equipped with detachable harvest baskets, an electric basket for discarded fruit, and voice-command control, the system enhances efficiency and enables sustainable orchard management in challenging landscapes.

Automatic disposal of thinned fruits

1. Introduction

The agricultural sector currently faces increasingly severe issues such as an aging workforce and a shortage of successors. Similar challenges are observed in fruit tree cultivation. In particular, thinning operations conducted prior to harvest are important processes that directly affect fruit quality and the yield of the following year. However, they still rely heavily on manual labor, resulting in an increased labor burden. Traditionally, hand-held baskets and vehicles have been used for fruit transportation. However, manual transportation imposes a significant physical burden on workers and is inefficient. Transport vehicles have the advantage of being able to transport large quantities of fruit simultaneously. However, problems such as soil damage from repeated trips in the orchard and fruit damage due to instability on slopes pose risks.

In terms of robotic applications in agriculture, studies on transportation support and monitoring in orchards using wheeled- and crawler-type robots have been reported [1–5]. These include an autonomous mowing system that recognizes tree trunks using LiDAR [6], unpaved road recognition using 3D LiDAR [7], and high-precision 3D map construction of an entire orchard using SLAM with UAVs [8]. Furthermore, research has been conducted on forklifts that perform autonomous loading using RGB-D cameras to improve the efficiency of transport operations [9]. However, many of these assume flat terrain or indoor environments. Thus, their applicability is limited in sloped or uneven areas such as mountainous orchards. To address this issue, a traveling unit for steep slopes was developed in which stable movement was achieved through a loading platform control mechanism using electric cylinders, and its adaptability to harvesting transportation tasks was proposed [10].

Quadruped robots enable stable locomotion even on uneven or sloped terrain, and are less likely to damage orchard surfaces. Furthermore, by automating fruit transportation, workers can continue harvesting or thinning operations during transport, thereby improving overall work efficiency.

In our previous studies, we developed quadruped robot-based load-carrying support systems for harvesting and thinning operations as well as orchard data collection systems. In an initial study, we investigated a method for constructing an environmental map of an orchard by following a designated worker wearing a bib and automatically transporting the harvested fruits to a temporary storage location along the shortest path derived from this map [11]. The initial system consisted of a laptop PC and a Raspberry Pi 5. However, to reduce the system size and increase the payload capacity, these components were later replaced with a Jetson Orin Nano and a Tier IV C1-120. This redesign enabled system miniaturization and improved robustness under backlighting conditions commonly encountered in outdoor orchards [12]. Subsequently, focusing on thinning operations, which represent a particularly labor-intensive process in fruit cultivation, we developed a support system for single-operator use that enables automatic disposal of thinned fruits using an electrically actuated basket with posture control [13]. In addition, to support data-driven cultivation management, we developed a LiDAR-based system for collecting three-dimensional point cloud data from orchard environments [14]. Building on these preceding studies, the present work integrates transportation, sensing, and human-robot interaction into a unified quadruped robot-based system and evaluates its practical usability in real orchard environments.

In this study, we aimed to develop a load-carrying support system for yuzu (Citrus junos) orchards that enables the autonomous transport of both harvested and thinned fruits. We report the system configuration, implementation, and field validation results.

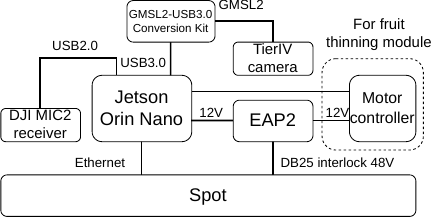

2. Hardware Configuration

A photograph of the proposed system is shown in Fig. 1, and its hardware configuration is shown in Fig. 2. The system uses the quadruped robot Spot as its base and is equipped with various devices to support transportation operations. A Jetson Orin Nano was installed to handle the commands for autonomous movement and human tracking. Furthermore, the EAP2 option equipped with LiDAR was mounted on the back to assist in creating environmental maps. In addition, a Tier IV camera was mounted on the front to perform human tracking. Each component is described in detail below.

Fig. 1. Photo of the proposed system.

Fig. 2. Hardware configuration.

2.1. Quadruped Robot Platform

The quadruped robot Spot (Boston Dynamics) was employed as the base platform for the proposed system. The primary specifications of Spot are listed in Table 1. Spot can walk stably even on uneven terrain and slopes, and its mobility is suitable for transportation tasks in fruit orchards. The EAP2 option [b] was mounted on the back of Spot. EAP2 is equipped with a LiDAR sensor (VLP-16, Velodyne) and a Jetson Xavier NX, and supports the generation of environmental maps and autonomous movement functions. In this study, we added various sensors and transportation functions on top of this base to construct a fruit agriculture support system.

Table 1. Specifications of Spot [a].

2.2. Sensing Device

2.2.1. Tier IV Camera

Fig. 3. Tier IV C1-120.

Spot is equipped with cameras on the front, rear, left, and right. However, these are designed to capture the ground obliquely downward to check the footing, making it difficult to sufficiently capture workers at a distance. Furthermore, since this system is intended for outdoor use, optical ghosting artifacts appear in the images under backlit conditions, reducing the accuracy of human tracking. In addition, because tracking is required even when workers move laterally, a camera with a wide field of view (FoV) is required. Therefore, we adopted the Tier IV C1-120 manufactured by Tier IV, which is also used in automotive camera applications [12]. The camera was connected to the Jetson Orin Nano through a GMSL2-USB3.0 converter, and the acquired images were processed on the Jetson Orin Nano. Fig. 3 shows the appearance of the Tier IV C1-120. Its intrinsic parameters were computed using MATLAB’s Computer Vision Toolbox. To ensure consistency with the input size of the detection model, calibration was performed using preprocessed images: the original images (\(1920\times1280\)) were cropped by 100 pixels from both the top and bottom to \(1920 \times 1080\) pixels and subsequently resized to \(640\times360\) pixels. The resulting calibration parameters are listed in Table 2.

We also evaluated the installation angle of the camera. For a camera height of 74 cm, a bib height of 170 cm, and a worker distance of 50 cm, the bib lies at an elevation angle of approximately \(\tan^{-1}((170-74)/50) \approx 62.5°\) from the camera. Considering a vertical FoV of 77° (\(\pm\)38.5°), an upward tilt of approximately \(62.5° - 38.5° = 24°\) ensured that the entire bib was captured.

Consequently, by installing the camera tilted approximately 24° upward, it was possible to capture the entire bib of the workers at a close distance within the FoV.
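The tilt calculation above can be checked numerically. The following is a minimal sketch, assuming that the stated bib height (170 cm) refers to the highest point that must remain in view and that the 50 cm distance is measured horizontally from the camera; the function name `required_tilt_deg` is illustrative and does not come from the system's code.

```python
import math


def required_tilt_deg(camera_height_cm, target_height_cm, distance_cm, vertical_fov_deg):
    """Upward tilt that places the target point on the upper edge of the FoV."""
    # Elevation angle from the camera to the target point.
    elevation = math.degrees(
        math.atan2(target_height_cm - camera_height_cm, distance_cm)
    )
    # The upper FoV edge lies half the vertical FoV above the optical axis.
    return elevation - vertical_fov_deg / 2.0


# Values taken from the text: 74 cm camera height, 170 cm bib height,
# 50 cm distance, 77 deg vertical FoV.
elevation_deg = math.degrees(math.atan2(170 - 74, 50))
tilt_deg = required_tilt_deg(74, 170, 50, 77)
print(f"elevation = {elevation_deg:.1f} deg, tilt = {tilt_deg:.1f} deg")
```

With these inputs the sketch reproduces the values in the text to within rounding (about 62.5° and 24°). A larger following distance lowers the elevation angle, so the 50 cm case acts as the worst case for the upper edge of the FoV.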

Table 2. Intrinsic parameters of Tier IV C1-120.

Table 3. Specifications of Jetson Orin Nano [c].

2.2.2. DJI MIC2

In this system, we adopted a DJI MIC2 to send commands to Spot via voice. With a Bluetooth connection, voice commands are sometimes not recognized correctly due to interference from other devices. Therefore, we connected the receiver to the Jetson Orin Nano via USB and sent voice commands via wireless communication with the transmitter. Although the specified communication distance was 250 m, the actual distance at which voice recognition functioned normally was approximately 75 m. Considering the size of the orchard, we considered this communication distance sufficient.

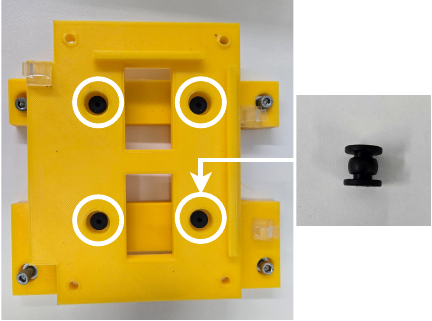

2.3. Companion Computer (Jetson Orin Nano)

In addition to the internal controller embedded within Spot, we mounted an external companion computer, a Jetson Orin Nano, to handle high-level processing [12]. While the onboard computer of Spot manages locomotion and stability, the Jetson is responsible for issuing navigation commands, processing camera images for worker recognition, and executing voice recognition to receive operator commands. It also integrates the data from various sensors required for agricultural support tasks. Through this configuration, the Jetson functions as a central node that bridges human input and autonomous robot operation. Table 3 lists the specifications of the Jetson Orin Nano Development Kit. During operation, we observed that vibrations generated while Spot was walking could cause malfunctions in the Jetson Orin Nano or momentary disconnections of the connected cables. An anti-vibration mechanism was implemented to mitigate these issues. As shown in Fig. 4, a rubber ball was placed between the base mounted on the back of Spot and the mounting plate for the Jetson Orin Nano, thereby absorbing vibrations. Furthermore, as shown in Fig. 5, cable clamps and clips were installed to fix the cables securely, preventing momentary disconnections and minimizing the transmission of vibrations to the connectors.

Fig. 4. Anti-vibration mounting structure for Jetson Orin Nano.

Fig. 5. Cable fixing with clamps and clips.

2.4. Transport Modules

2.4.1. Detachable Mechanism for Harvest Baskets

A detachable attachment was installed on the EAP2 option to enable a one-touch replacement of the harvest basket. A bicycle detachment mechanism was adopted for a low-cost, reliable fixation and easy removal. While 20 kg baskets are common in the field, Spot’s payload constraints require the use of a smaller half-height basket. Fig. 6 shows the base and installation. This mechanism allows quick basket exchange after transport, thereby improving the continuity and efficiency of harvesting.

Fig. 6. Harvest basket.

2.4.2. Electrically Actuated Basket for Discarded Fruits

An electrically actuated basket with an automatic opening and closing mechanism, originally developed in our previous work [13], was utilized to dispose of discarded fruits during thinning operations. Since thinned fruits have no commercial value, the design prioritizes lightweight construction, operational simplicity, and reliable discharge rather than careful handling.

Although the quadruped robot supports a maximum payload of approximately 14 kg, a portion of this capacity is consumed by the EAP2 module and onboard sensors (approximately 4 kg). Therefore, minimizing the mass of the basket and its actuation mechanism is essential for maximizing the remaining payload available for the transported fruits. To achieve this, an aluminum honeycomb structure was adopted for the basket frame, providing a high stiffness-to-weight ratio while maintaining sufficient mechanical strength under field conditions. Consequently, the developed basket achieves a total mass of 4.2 kg, enabling efficient utilization of the remaining payload capacity.

The opening and closing mechanism was driven by a compact electric motor, and the lid was controlled using GPIO signals from the Jetson Orin Nano mounted on the robot. Upon receiving a voice command to discard fruits, the quadruped robot autonomously moved to a predefined discarding location, opened the basket lid to discharge the contents, and returned to the working position. This parallelization of thinning and disposal tasks enables a single worker to continue thinning without interruption, thereby reducing the physical burden and improving overall work efficiency (Fig. 7).

Fig. 7. Operation of fruit discarding.

3. Software Architecture

3.1. Worker-Following Function

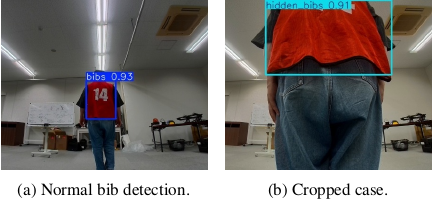

In this system, we assumed a scenario in which multiple workers are present in the field. The target worker wears a bib, which is detected in the camera image and used to estimate the distance to the worker [11,12]. Based on this information, movement commands were issued to Spot. For bib detection, we employed YOLOv8n, an object detection model provided by Ultralytics [d]. In future studies, we plan to identify the target worker by using the back number of the bib, enabling flexible switching of the tracking target.

3.1.1. YOLO-Based Bib Detection

For training and validation, 3099 images were collected at the Kochi University of Technology campus. During annotation, only the undeformed region of the bib (shoulder to hem) was labeled, excluding parts distorted by the wind. When a bib protruded beyond the image frame, it was annotated with a separate label (“hidden bib”) rather than the normal “bib” label, to prevent incorrect detection. During operation, only detections with the “bib” label were processed. From the dataset, 2479 images were used for training and 620 for validation. All images were resized to \(640\times360\), and training was conducted for 1000 epochs with a batch size of 16. A detection frame rate of 3.8 fps was achieved during the experimental trials. Examples of the detection results are shown in Fig. 8.

Fig. 8. Bib detection.
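The dataset handling described above can be sketched as follows. This is an illustration under stated assumptions, not the training pipeline itself: the text gives only the split sizes (2479 and 620 images), so the random shuffle, the seed, the file names, and the label strings `"bib"` and `"hidden_bib"` are all hypothetical.

```python
import random

CLASS_NAMES = ("bib", "hidden_bib")  # illustrative label strings


def split_dataset(items, n_train, seed=0):
    """Shuffle reproducibly and split into training and validation lists."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    return shuffled[:n_train], shuffled[n_train:]


def keep_trackable(detections):
    """Keep fully visible bibs only; 'hidden_bib' detections are ignored."""
    return [d for d in detections if d["label"] == "bib"]


# Hypothetical file names standing in for the 3099 collected images.
image_ids = [f"img_{i:04d}.jpg" for i in range(3099)]
train_ids, val_ids = split_dataset(image_ids, n_train=2479)
print(len(train_ids), len(val_ids))

# Made-up detections for one frame; only the first one would be tracked.
frame = [
    {"label": "bib", "confidence": 0.91},
    {"label": "hidden_bib", "confidence": 0.88},
]
print(keep_trackable(frame))
```

The reported sizes correspond to roughly an 80/20 split. Training a separate "hidden bib" class and discarding it at run time keeps truncated bounding boxes, whose height would bias the distance estimate, out of the tracking loop.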

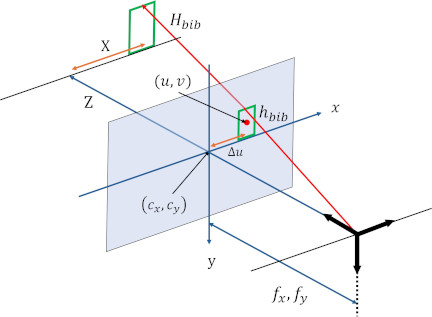

Fig. 9. Geometry of distance estimation using a pinhole camera model.

3.1.2. Distance Estimation and Tracking

The distance to the worker was estimated using a standard pinhole camera model. The relative position of the worker was determined within the image coordinate system using the intrinsic parameters of the camera calibrated in Section 2.2.1.

Let \(H_{\mathit{bib}}\) [mm] denote the actual vertical length of the bib in the physical world and let \(h_{\mathit{bib}}\) [pixels] denote the vertical length of the detected bib region in the image, measured as the height of the corresponding bounding box. The depth \(Z\) [mm] along the optical axis from the camera to the worker was computed using Eq. \(\eqref{eq:z_distance}\).
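For reference, the standard pinhole relations consistent with the definitions above can be written as follows. The symbols \(f_x\) and \(f_y\) (focal lengths in pixels), \(c_x\) (horizontal principal point), and \(u\) (horizontal pixel coordinate of the bounding box center) are assumed notation for the intrinsic parameters of Table 2 and may differ from the notation used by the authors.

\[
Z = \frac{f_y \, H_{\mathit{bib}}}{h_{\mathit{bib}}}, \qquad
X = \frac{(u - c_x)\, Z}{f_x}
\]

Here \(X\) [mm] is the lateral offset of the worker from the optical axis, which is the quantity that appears in the rotation angle \(\tan^{-1}(X/Z)\).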

- If the bounding box center is located within 90 pixels of the left or right edges of the image, Spot performs only in-place rotation (without any forward motion) to prevent loss of the target from the FoV. The rotation angle is computed as \(\tan^{-1}(X/Z)\).

- When the bounding box center is near the center of the FoV and the estimated distance \(Z\) is within the range of 1.5–10 m, Spot approaches the worker by first rotating to align with the target and then moving forward.

- If the estimated distance \(Z\) is less than 1.5 m, Spot refrains from advancing forward to ensure safety. However, in-place rotation may still be performed to maintain the worker within the FoV.

- If the estimated distance \(Z\) exceeds 10 m, all motions are suspended, including both rotation and forward movements.
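The four rules above can be sketched as a small decision function. This is a minimal sketch, not the authors' controller: the `Command` type and the function name `decide` are hypothetical, the image width of 640 pixels is taken from the preprocessing step, and two points the text leaves open are resolved by assumption (the 10 m rule takes priority over the edge rule, and any bounding box center outside the 90-pixel margins counts as "near the center").

```python
import math
from dataclasses import dataclass

IMAGE_WIDTH_PX = 640   # width of the preprocessed image
EDGE_MARGIN_PX = 90    # center this close to an edge: rotate only
MIN_FOLLOW_M = 1.5     # closer than this: no forward motion
MAX_FOLLOW_M = 10.0    # farther than this: suspend all motion


@dataclass
class Command:
    rotate_rad: float  # in-place rotation toward the worker
    advance: bool      # whether forward motion is allowed


def decide(u_px, x_m, z_m):
    """Map the bounding box center column and the estimated (X, Z) to a command."""
    if z_m > MAX_FOLLOW_M:
        return Command(0.0, False)  # too far: no rotation, no forward motion
    yaw = math.atan2(x_m, z_m)      # equals atan(X/Z) for Z > 0
    near_edge = u_px < EDGE_MARGIN_PX or u_px > IMAGE_WIDTH_PX - EDGE_MARGIN_PX
    if near_edge or z_m < MIN_FOLLOW_M:
        return Command(yaw, False)  # rotate in place only
    return Command(yaw, True)       # rotate to align, then move forward


print(decide(u_px=320, x_m=0.0, z_m=5.0))   # centered, in range
print(decide(u_px=50, x_m=-2.0, z_m=5.0))   # near the left edge
print(decide(u_px=320, x_m=0.0, z_m=1.0))   # too close
print(decide(u_px=320, x_m=1.0, z_m=12.0))  # too far
```

Separating the decision from the motion commands keeps the thresholds in one place, which makes them easy to tune during field trials.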

Table 4. Attributes of a waypoint.

Table 5. Attributes of an edge.

Fig. 10. Fiducial.

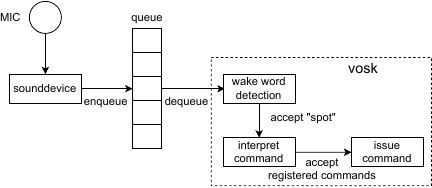

3.2. Voice Command Interface

Button-based or manual inputs are impractical because workers’ hands are often occupied. To address this issue, we implemented a voice command system using the Vosk toolkit [g]. Voice data are managed in a queue, along with timestamps. To avoid accidental recognition of conversational speech, the wake word “Spot” must be spoken before any command. The implemented voice commands and their functions are summarized in Table 6. To further reduce processing delays, the system limits voice analysis to 0.5 s for the wake word, skipping longer utterances. Consequently, the latency from the completion of voice input to command execution was kept to a maximum of 0.53 s, ensuring a near-real-time response. The overall recognition flow is illustrated in Fig. 12.
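The wake-word gating and the timestamped queue can be sketched as follows. This is a simplified illustration that operates on already-recognized text and does not call the Vosk API; the command words, the action names, and the `max_age_s` staleness threshold are hypothetical and are not taken from Table 6.

```python
import time
from collections import deque

WAKE_WORD = "spot"
# Hypothetical command vocabulary, for illustration only.
COMMANDS = {"follow": "start_following", "stop": "stop_motion"}


def parse_utterance(text):
    """Return the action for '<wake word> <command>', otherwise None."""
    words = text.lower().split()
    if len(words) < 2 or words[0] != WAKE_WORD:
        return None  # conversational speech without the wake word is ignored
    return COMMANDS.get(words[1])


def next_action(queue, now, max_age_s=2.0):
    """Pop (timestamp, text) entries until a fresh, valid command is found."""
    while queue:
        stamp, text = queue.popleft()
        if now - stamp > max_age_s:
            continue  # drop stale utterances instead of executing them late
        action = parse_utterance(text)
        if action is not None:
            return action
    return None


utterances = deque()
t0 = time.monotonic()
utterances.append((t0, "nice weather today"))
utterances.append((t0, "Spot follow"))
print(next_action(utterances, now=t0 + 0.1))
```

Storing a timestamp with each utterance lets the consumer discard entries that have waited too long, so a backlog of speech cannot trigger a delayed motion command.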

Fig. 12. Flow of the voice command recognition process.

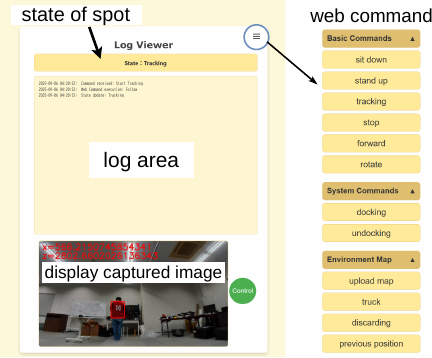

3.3. Monitoring Dashboard

During program execution, a PC can be remotely connected to the Jetson Orin Nano to check the operational status via the console output. However, console output alone is not sufficient to grasp multiple pieces of information, such as program progress and robot status, simultaneously. Therefore, we constructed a web-based monitoring dashboard using Flask [h] to provide an integrated view of the robot’s activities. This interface allows the following information to be checked simultaneously in the browser:

- Status display area representing the state of the robot.

- Log area recording accepted voice commands and misrecognitions.

- Images captured by the Tier IV camera during the tracking operation and their inference results.

This enables developers to grasp the robot’s behavior and processing status in real time, significantly improving the visibility and operability compared to conventional console output. Fig. 13 shows the overall design of the monitoring dashboard.

Fig. 13. Monitoring dashboard.

4. Experiments

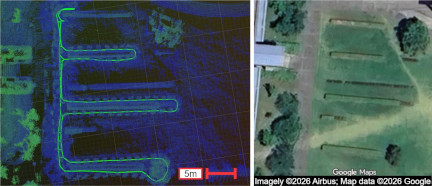

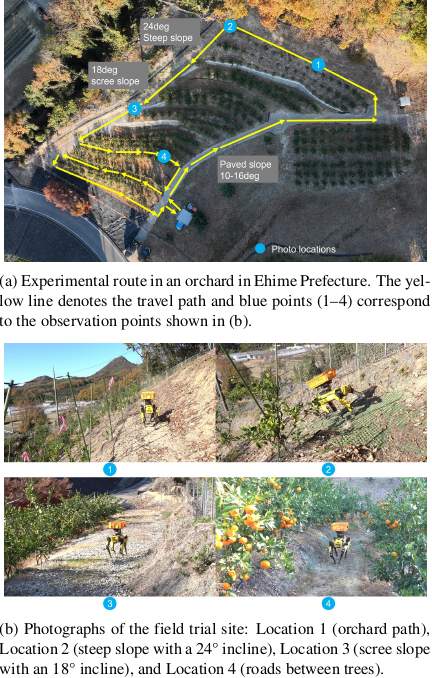

4.1. Field Experiments in Steep and Uneven Orchard Terrain

To demonstrate that quadruped robots can operate stably on slopes and uneven terrain, field-walking experiments were conducted at a public steep-slope model orchard operated by the Ehime Research Institute of Agriculture, Forestry and Fisheries. The orchard included several representative conditions commonly found in mountainous fruit farms: a steep slope with an inclination of \(24°\), a scree slope with an inclination of \(18°\), a paved slope with an inclination ranging from \(10°\) to \(16°\), and a horizontal walkway between the trees. A photograph of the experimental site is presented in Fig. 14(a).

The experiments were performed using Spot’s autonomous walking (Autowalk) function while carrying an approximately 7.5 kg fruit load. The robot repeatedly traversed a predefined route with a total distance of approximately 256 m. Spot successfully completed the course in approximately 5 minutes without falling or external intervention, demonstrating stable locomotion performance on steep and uneven orchard terrain (Fig. 14(b)).

Fig. 14. Field experiments conducted in a public steep-slope model orchard environment.

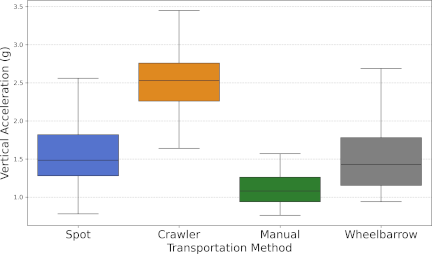

4.2. Comparison of Resultant Acceleration

To evaluate transportation safety and assess the ability of the proposed system to minimize damage to harvested fruits, we compared four transportation conditions: the quadruped robot Spot, a conventional engine-driven crawler-type carrier (KCG J850A, KIORITZ), manual carrying, and a wheelbarrow. Experiments were conducted in a yuzu orchard over a transport distance of approximately 20 m.

Three-axis acceleration was recorded at 10 Hz using a USB acceleration logger (DT-178A, CEM) placed inside the transport basket. The experimental results are presented in Fig. 15.

Manual carrying exhibited the lowest median acceleration of 1.1 g, because the human body effectively absorbs mechanical shocks from uneven terrain. In contrast, the engine-driven crawler-type carrier showed a median acceleration exceeding 2.5 g with large fluctuations, which can be attributed to the direct transmission of ground-induced vibrations through its rigid mechanical structure.

Both Spot and the wheelbarrow maintained median acceleration levels of approximately 1.5 g. Notably, Spot demonstrated a substantial reduction in vibration compared to the conventional crawler carrier. Although the vibration characteristics may vary depending on the ground conditions, these results indicate that the proposed quadruped robot system offers superior fruit protection compared with conventional engine-driven crawler-type carriers, thereby reducing the risk of bruising and surface damage during orchard transportation.
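The resultant acceleration compared above is the magnitude of the three-axis acceleration vector. The following minimal sketch shows how the median could be computed from logged samples; the sample values are made up for illustration and are not measurements from the experiment, and the assumption that the logger reports values in g with gravity included is not stated in the text.

```python
import math
import statistics


def resultant_g(ax, ay, az):
    """Magnitude of a three-axis acceleration sample, in g."""
    return math.sqrt(ax * ax + ay * ay + az * az)


def median_resultant(samples):
    """Median resultant acceleration over a sequence of (ax, ay, az) samples."""
    return statistics.median(resultant_g(*s) for s in samples)


# Made-up samples in g, as they might be logged at 10 Hz.
samples = [(0.0, 0.0, 1.0), (0.3, 0.4, 1.2), (0.0, 0.6, 0.8)]
print(f"median resultant = {median_resultant(samples):.2f} g")
```

The median is less sensitive than the mean to isolated shocks, which makes it a reasonable summary when comparing carriers over a short 20 m run.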

Fig. 15. A comparison of each load’s vibration.

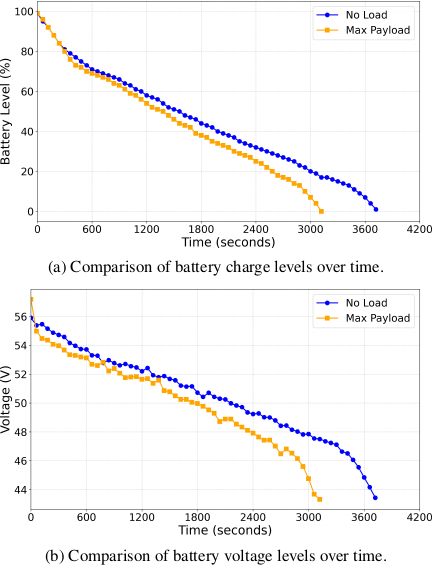

4.3. Comparison of Battery Consumption

To evaluate operational endurance and power stability, we investigated the effect of payload weight on battery consumption. The experiment was conducted on a flat grass field at the Kochi University of Technology. Two conditions were compared: (i) no payload in the basket, and (ii) a payload of 10.8 kg, corresponding to the maximum loading condition.

Starting from a fully charged battery (100%), the robot was instructed to walk continuously on flat terrain at a constant speed of 1.2 m/s until it automatically stopped due to battery depletion. The battery level and voltage were recorded in real time using the Jetson Orin Nano. The experimental results are presented in Fig. 16.

Under the no payload condition, the robot operated continuously for approximately 62 minutes, whereas under the maximum payload condition, the operation time was approximately 52 minutes. These results indicate that payload weight leads to a reduction of approximately 10 minutes in continuous operating time, thereby providing a practical guideline for mission planning in orchard operations.
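For mission planning, the reported operating times can be converted into a relative reduction and an approximate walking range. The sketch below uses only the figures in the text (62 min, 52 min, 1.2 m/s) and assumes continuous walking at constant speed on flat ground; ranges on orchard slopes would likely be shorter. The function name `endurance_summary` is illustrative.

```python
def endurance_summary(minutes_empty, minutes_loaded, speed_m_s):
    """Summarize the effect of payload on operating time and walking range."""
    reduction_min = minutes_empty - minutes_loaded
    return {
        "reduction_min": reduction_min,
        "reduction_pct": 100.0 * reduction_min / minutes_empty,
        "range_empty_m": minutes_empty * 60 * speed_m_s,
        "range_loaded_m": minutes_loaded * 60 * speed_m_s,
    }


summary = endurance_summary(minutes_empty=62, minutes_loaded=52, speed_m_s=1.2)
for key, value in summary.items():
    print(f"{key}: {value:.1f}")
```

Under these assumptions, the 10-minute reduction corresponds to roughly 16% of the unloaded operating time, and the loaded range is on the order of 3.7 km per charge.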

Fig. 16. Comparison of battery consumption by loads.

4.4. Qualitative Evaluation of Implemented Functions in Field Operations

4.4.1. Worker-Following Function

During field operations, Spot autonomously follows the worker, enabling continuous harvesting without manual repositioning. This reduces interruptions compared to manually operated transport vehicles and contributes to smoother task execution.

However, field trials revealed occasional tracking instabilities. Specifically, the robot temporarily lost detection when the identification bib was occluded by tree trunks or dense foliage or when the worker made abrupt directional changes between rows. Although tracking was typically reestablished once the worker reentered Spot’s FoV, these observations indicate that the current perception strategy remains sensitive to visual occlusion. Therefore, a more robust tracking and recovery method incorporating environmental mapping is required in future studies.

4.4.2. Voice Command Interface

The implementation of the voice command interface enabled hands-free operation of the robot even when workers’ hands were occupied, significantly improving the overall operability. Basic commands such as starting and stopping functions were successfully recognized in an actual orchard environment. With a clear line of sight, voice recognition was confirmed at distances of up to 75 m. The average command execution latency was 0.53 s from the onset of speech, indicating practical responsiveness for field operations. However, field trials also revealed that continuous conversations among workers occasionally interfered with wake word detection or increased the processing latency. Therefore, a more robust voice recognition method is required in future studies.

4.4.3. Detachable Mechanism for Harvest Baskets

The detachable mechanism, adapted from a bicycle attachment structure, enables the direct exchange of harvest baskets upon arrival at the transport vehicle. This eliminated the need to manually transfer the harvested fruits to another container.

The attachment and detachment processes required approximately 15 s and could be performed without specialized tools. This mechanism functioned reliably during repeated exchanges in field trials, demonstrating its practical suitability for orchard transport tasks. However, a practical limitation was identified in the physical design of the basket-attachment interface. The attachment on the bottom of the basket was approximately 3 cm thick. When baskets filled with harvested fruits are stacked, this protruding component poses a risk of bruising or damaging the fruits in the underlying baskets. Therefore, the development of a detachable mechanism with a thinner attachment to prevent fruit damage is required in future studies.

5. Conclusion and Future Work

In this study, we developed a quadruped robot-based fruit transportation support system to alleviate the burden of harvesting and thinning operations. The system can operate stably on slopes and uneven terrain, ensuring efficient transport and disposal of harvested and thinned fruits without damaging the orchard. It also introduces practical design features tailored for field use, including detachable harvest baskets, electric disposal baskets, and voice command interfaces. In future work, we plan to quantitatively evaluate the effects of reducing worker burden and task time through demonstration experiments in actual orchards. We also aim to enhance the worker tracking and autonomous exploration functions to improve operability across diverse scenarios. Furthermore, we intend to extend the system to other fruit tree species and integrate it with data collection platforms to develop labor-saving support systems that contribute to sustainable fruit production.

Acknowledgments

This work was supported by the Cabinet Office grant in aid “Evolution to Society 5.0 Agriculture Driven by IoP (Internet of Plants),” Japan.

- [1] Y. Ye et al., “Bin-Dog: A robotic platform for bin management in orchards,” Robotics, Vol.6, No.2, Article No.12, 2017. https://doi.org/10.3390/robotics6020012

- [2] W. Mao et al., “Development of a combined orchard harvesting robot navigation system,” Remote Sens., Vol.14, No.3, Article No.675, 2022. https://doi.org/10.3390/rs14030675

- [3] M. Mortazavi, D. J. Cappelleri, and R. Ehsani, “RoMu4o: A robotic manipulation unit for orchard operations automating proximal hyperspectral leaf sensing,” arXiv:2501.10621, 2025. https://doi.org/10.48550/arXiv.2501.10621

- [4] Z. Zhou et al., “Advancement in artificial intelligence for on-farm fruit sorting and transportation,” Frontiers in Plant Science, Vol.14, Article No.1082860, 2023. https://doi.org/10.3389/fpls.2023.1082860

- [5] D. Gerdan Koc and M. Vatandas, “Development and performance analysis of an autonomous agricultural vehicle for fruit transportation,” J. of Field Robotics, Vol.42, No.7, pp. 3189-3212, 2025. https://doi.org/10.1002/rob.22573

- [6] H. Kurita et al., “Localization method using camera and LiDAR and its application to autonomous mowing orchards,” J. Robot. Mechatron., Vol.34, No.4, pp. 877-886, 2022. https://doi.org/10.20965/jrm.2022.p0877

- [7] K. Kurashiki, K. Kono, and T. Fukao, “LiDAR based road detection and control for agricultural vehicles,” J. Robot. Mechatron., Vol.36, No.6, pp. 1516-1526, 2024. https://doi.org/10.20965/jrm.2024.p1516

- [8] S. Nishiwaki et al., “Proposal of UAV-SLAM-based 3D point cloud map generation method for orchard measurements,” J. Robot. Mechatron., Vol.36, No.5, pp. 1001-1009, 2024. https://doi.org/10.20965/jrm.2024.p1001

- [9] R. Iinuma et al., “Robotic forklift for stacking multiple pallets with RGB-D cameras,” J. Robot. Mechatron., Vol.33, No.6, pp. 1265-1273, 2021. https://doi.org/10.20965/jrm.2021.p1265

- [10] Y. Sashihara et al., “Development of a traveling unit for steep slopes and its adaptability to harvesting and transporting operations,” J. of the Japanese Society of Agricultural Machinery and Food Engineers, Vol.86, No.4, pp. 231-239, 2024.

- [11] R. Kataoka et al., “Investigation of a yuzu harvesting support system using human tracking and environmental map by Spot,” The Society of Instrument and Control Engineers Shikoku Chapter, pp. 124-127, 2024.

- [12] R. Kataoka et al., “Proposal of yuzu harvesting support system using quadruped robot Spot and Jetson,” Proc. JSME Conf. on Robotics and Mechatronics (ROBOMECH), Article No.2P2-A03, 2025. https://doi.org/10.1299/jsmermd.2025.2P2-A03

- [13] T. Kurihara et al., “Development of an autonomous transportation support system for fruit thinning using the quadruped robot ‘Spot’,” Proc. JSME Conf. on Robotics and Mechatronics (ROBOMECH), Article No.2P1-F09, 2025. https://doi.org/10.1299/jsmermd.2025.2P1-F09

- [14] K. Kanagawa and T. Kurihara, “The implementation of a quadruped point cloud collection system for leaf count estimation using Jetson,” Proc. JSME Conf. on Robotics and Mechatronics (ROBOMECH), Article No.2P1-F05, 2025. https://doi.org/10.1299/jsmermd.2025.2P1-F05

- [a] Boston Dynamics, “Spot Specifications.” https://support.bostondynamics.com/s/article/Spot-Specifications-49916 [Accessed August 13, 2025]

- [b] “Spot EAP2.” https://bostondynamics.com/wp-content/uploads/2023/05/spot-eap-2.pdf [Accessed August 13, 2025]

- [c] NVIDIA, “NVIDIA Jetson Orin Nano Developer Kit.” https://files.seeedstudio.com/wiki/Jetson-Orin-Nano-DevKit/jetson-orin-nano-developer-kit-datasheet.pdf [Accessed August 13, 2025]

- [d] Ultralytics, “YOLO Documentation.” https://docs.ultralytics.com/models/yolov8/ [Accessed September 7, 2025]

- [e] Boston Dynamics, “GRAPHNAV MAP STRUCTURE.” https://dev.bostondynamics.com/docs/concepts/autonomy/graphnav_map_structure.html [Accessed August 13, 2025]

- [f] “rerun.” https://rerun.io/ [Accessed September 5, 2025]

- [g] “VOSK Offline Speech Recognition API.” https://alphacephei.com/vosk/ [Accessed September 20, 2025]

- [h] “Flask Documentation.” https://flask.palletsprojects.com/en/stable/ [Accessed August 23, 2025]

This article is published under a Creative Commons Attribution-NoDerivatives 4.0 International License.