Paper:

Unified Optimization of YOLOX for Robust Small Object Detection in Real-Time Potato Harvesting

Joonam Kim†, Kenichi Tokuda, Giryeon Kim, and Rena Yoshitoshi

Research Center for Agricultural Robotics, National Agricultural and Food Research Organization

2-1-12 Kannondai, Tsukuba, Ibaraki 305-8642, Japan

†Corresponding author

Automated potato harvesting requires vision systems meeting stringent operational constraints: near-zero potato misclassification (PMR <1%) and high impurity detection rate (IDR >60%). Although YOLOX supports real-time processing, it exhibits critical performance limitations for small object detection. This paper introduces a unified optimization strategy combining training-level modifications (P3 feature enhancement, SimOTA parameter optimization, and size-aware loss weighting) with inference-level threshold optimization. Experimental results based on 10,000 images containing 232,000 annotations demonstrate that all four evaluated approaches achieved the defined operational constraints. SimOTA emerged as the optimal configuration, delivering the highest small object recall (18.18%) while maintaining PMR of 0.06% and IDR of 95.05%. P3 feature enhancement achieved the lowest PMR (0.05%), SALW provided balanced performance (PMR 0.06%, IDR 96.11%), and the P3+SimOTA combination failed critically (PMR 9.90%), revealing fundamental incompatibility between optimization components. All successful configurations exceeded real-time processing requirements (45–61 fps), confirming suitability for deployment in resource-constrained agricultural automation systems.

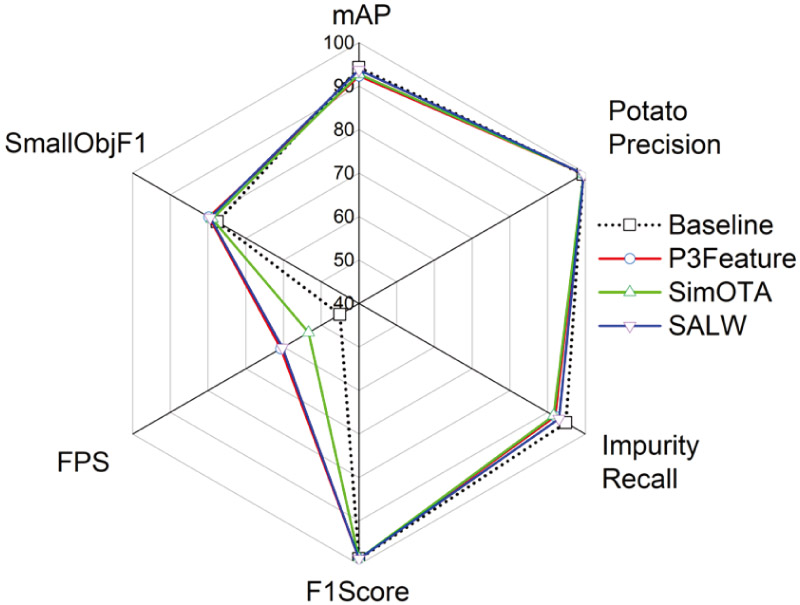

Optimization strategy comparison

1. Introduction

Global agriculture is undergoing profound transformation driven by automation technologies that address critical challenges, including labor shortages, economic sustainability, and the demand for enhanced productivity 1,2. In precision agriculture, automated harvesting of soil-grown crops requires robust real-time object detection frameworks capable of distinguishing crops from diverse impurities, such as stones, soil clods, and plant debris 3,4. Deep learning approaches have demonstrated strong performance across agricultural applications, including plant disease detection 5, animal health monitoring 6, and general AI-based object detection tasks 7. Among these applications, potato harvesting constitutes a particularly challenging case due to the need to preserve marketable yield while ensuring effective impurity removal during high-speed, excavation-based collection processes 8,9. The unified optimization framework illustrated in Fig. 1 addresses these challenges through integrated training-level and inference-level optimizations.

The Japanese agricultural context requires flexible, mobile harvesting systems that serve multiple small to medium-sized farms, contrasting with the centralized operations prevalent in European and North American agricultural models 10,11,12. This operational structure favors semi-automated rather than fully autonomous solutions. A typical potato harvester traditionally requires 4–6 workers for operation and quality control, with automation targeting a reduction by 2–3 persons while maintaining harvesting efficiency and product quality 13. This contextual reality directly shapes the asymmetric performance requirements for the detection system 14. Potato misclassification rate (PMR) must be kept extremely low (\(< 1{\%}\)), as misclassified potatoes represent irrecoverable yield loss within the existing workflow. By contrast, a moderate impurity detection rate (IDR \(> 60{\%}\)) remains acceptable because residual impurities can be manually removed by the reduced workforce supervising the semi-automated process.

Agricultural deployment environments present unique challenges for computer vision systems 15, as harvesting equipment typically operates in remote field locations with limited connectivity, relying on standalone edge computing units powered by onboard batteries and variable power supplies 16. Furthermore, these systems must function reliably under harsh conditions, including vibration, dust, temperature fluctuations, and inconsistent natural lighting 17. Such conditions restrict available computational resources for real-time inference and necessitate detection architectures that sustain high accuracy despite these limitations 18,19.

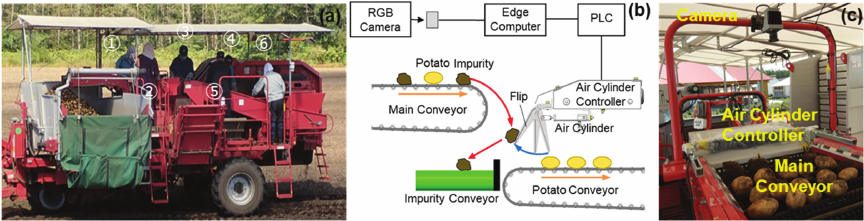

Fig. 1. System overview: (a) conventional manual harvesting, (b) automated system block diagram, (c) actual implementation integrated into potato harvester.

YOLOX-Small offers a practical balance between detection accuracy and the real-time inference speed critical for embedded systems typically used in agricultural machinery 20, and YOLO-based architectures have been extensively validated across agricultural detection tasks 21,22. However, YOLOX exhibits notable performance degradation for small objects, which often include soil clods and small impurities crucial to downstream quality control 23, a limitation also reported in remote sensing small object detection 24. This performance gap cannot be attributed to architectural constraints alone, as the inherent data imbalance in real-world agricultural datasets, encompassing both class distribution and object scale, plays a central role 25. Potatoes typically dominate both object count and spatial coverage, whereas small impurities and small potatoes are underrepresented in training data 26, reflecting imbalance challenges extensively documented in agricultural computer vision research 27,28.

YOLOX was selected as the baseline architecture for several strategic reasons. First, its Apache 2.0 license enables commercial deployment without proprietary restrictions, which is essential for agricultural automation systems requiring long-term industrial support and integration into commercial harvesting equipment. Second, our prior comparative study 14 demonstrated YOLOX’s superior performance on the Jetson AGX Orin platform, achieving 2.38\(\times\) faster inference (107 vs. 45 fps) than YOLOv12, higher energy efficiency (0.58 vs. 0.75 J/frame), and comparable detection accuracy (F1-score 0.97 for both models). These results validated YOLOX’s suitability for resource-constrained edge deployment requiring real-time processing (\(> 30\) fps). Finally, the anchor-free design, decoupled head, and SimOTA labeling strategy provide a well-established baseline for investigating optimization strategies under data imbalance conditions typical of agricultural applications. Although newer architectures (YOLOv8–v11) offer incremental improvements, YOLOX’s combination of computational efficiency, licensing flexibility, and proven edge deployment performance rendered it optimal for this study. The system requires processing speeds exceeding 30 fps to align with conveyor speed and mechanical actuation timing constraints.

This study addressed these challenges by proposing a unified optimization framework for small object detection in agricultural harvesting scenarios, with emphasis on data-limited conditions and edge deployment constraints. The approach integrated training-level size-aware loss weighting (SALW) that adjusted gradient contributions according to object size, compensating for the underrepresentation of small instances during backpropagation, with inference-level parameter optimization designed to satisfy asymmetric operational requirements not adequately captured by standard evaluation metrics such as mean average precision (mAP) 29.

2. Methodology

2.1. Target System and Requirements

The experimental platform consists of a real-time potato harvesting system designed for the Japanese agricultural context. Unlike fully automated systems, this semi-automated approach reduces manual labor from 4–5 to 2–3 workers per harvester while maintaining product quality and operational efficiency. The system must keep PMR below 1% to preserve marketable yield and achieve an IDR exceeding 60% for sufficient contamination removal 30.

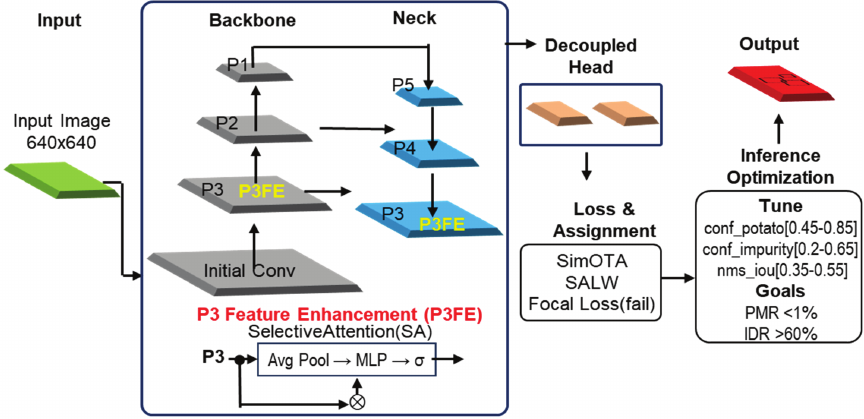

Fig. 2. YOLOX architecture with applied optimization techniques. P3 feature enhancement is integrated at the neck level, SimOTA label assignment operates during training with optional SALW, and inference-level parameter optimization satisfies operational constraints. The failed P3\(+\)SimOTA combination demonstrates spatial-semantic mismatch between optimization levels.

2.2. System Operation and Design Philosophy

The potato harvester system operates by selectively ejecting detected impurities, including soil clods and stones, via pneumatic air jets, while allowing potatoes to continue to the collection conveyor through inertial motion. This design reflects the practical requirements of semi-automated potato harvesting operations. Small potatoes (\(<3\textrm{,}000\) pixels, corresponding to approximately 4 cm in diameter) are occasionally misclassified as impurities and ejected, which represents an acceptable operational loss. This design tradeoff is justified by four factors: (1) minimal commercial value of undersized potatoes (typically unmarketable due to size standards); (2) exceptionally high detection difficulty due to visual similarity to soil clods; (3) prioritization of system reliability over marginal yield recovery for very small objects; and (4) the absence of any recovery mechanism for ejected objects in the current workflow.

The asymmetric performance requirements directly reflect this operational context. PMR \(< 1{\%}\) is a critical constraint because potato misclassification results in irreversible yield loss, necessitating preservation of more than 99% of marketable-sized potatoes. By contrast, IDR \(> 60{\%}\) is a practical target because residual impurities can be manually removed by the reduced workforce of 2–3 workers maintaining quality control oversight in the semi-automated process. Crucially, once potatoes are ejected alongside impurities, they cannot be recovered under the current harvester design, rendering potato preservation the primary objective.

2.3. Operational Metrics Definition

PMR and IDR were defined as follows: PMR \(=\) potato_misclassified_as_impurity \(/\) total_potato.

PMR represented the proportion of potatoes incorrectly classified as impurities (clods or stones) and consequently ejected, resulting in direct yield loss. Potatoes that were undetected (false negatives) did not contribute to PMR, as they passed naturally to the collection conveyor without ejection. Only potatoes actively misclassified as impurities and removed from the system constituted yield loss.

IDR was defined as combined impurity recall: IDR \(=\) TP_impurity / (TP_impurity \(+\) FN_impurity), where impurities included both soil clods (dokai) and stones (ishi). IDR represented the proportion of impurities correctly detected and ejected. The target IDR (\(> 60{\%}\)) reflected the operational reality that remaining impurities could be manually removed by workers supervising the semi-automated process. Evaluation followed the VOC2007 protocol with IoU \(\ge 0.5\) for true positive matching. Multiple detections were resolved using non-maximum suppression (NMS), with model-specific NMS thresholds typically ranging from 0.3 to 0.55.
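The two definitions above reduce to simple ratios. The following minimal sketch shows the arithmetic and the constraint check; the function names and the example counts are hypothetical and chosen only for illustration, not taken from the experiments.

```python
def pmr(potato_as_impurity: int, total_potato: int) -> float:
    """Potato misclassification rate: potatoes labeled as impurity / all potatoes."""
    if total_potato <= 0:
        raise ValueError("total_potato must be positive")
    return potato_as_impurity / total_potato


def idr(tp_impurity: int, fn_impurity: int) -> float:
    """Impurity detection rate: combined recall over clods and stones."""
    total = tp_impurity + fn_impurity
    if total <= 0:
        raise ValueError("no ground-truth impurities")
    return tp_impurity / total


def meets_constraints(pmr_value: float, idr_value: float) -> bool:
    """Operational targets stated in the text: PMR < 1% and IDR > 60%."""
    return pmr_value < 0.01 and idr_value > 0.60


# Hypothetical counts, used only to illustrate the calculation.
example_pmr = pmr(potato_as_impurity=6, total_potato=10_000)
example_idr = idr(tp_impurity=950, fn_impurity=50)
print(f"PMR = {example_pmr:.2%}, IDR = {example_idr:.2%}")
print("constraints met:", meets_constraints(example_pmr, example_idr))
```

Undetected potatoes do not appear in the PMR numerator, which matches the statement that missed potatoes pass to the collection conveyor without ejection.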

2.4. Unified Optimization Framework Overview

The proposed unified optimization framework, shown in Fig. 2, addressed small object detection challenges through a two-stage approach that integrated complementary optimization techniques across the training and inference stages to meet operational objectives within the given constraints. Training-level optimization enhanced the model’s inherent capability to detect small objects through targeted modifications, including P3 feature enhancement and SALW, without requiring additional data collection or augmentation. Inference-level parameter optimization systematically tuned post-training detection parameters, including confidence thresholds and NMS parameters, to explicitly satisfy operational constraints while maximizing secondary performance objectives.

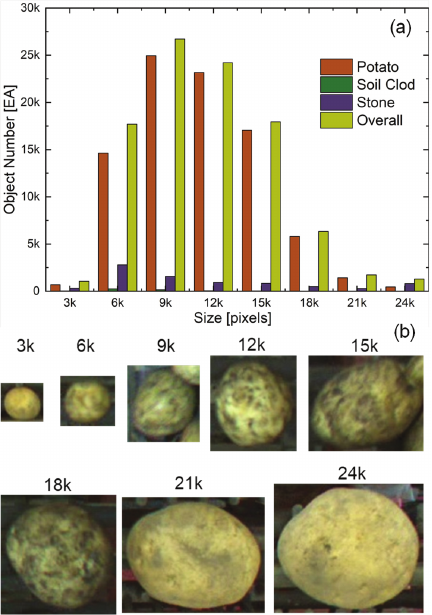

Fig. 3. Size-based object distribution and representative samples in the potato harvesting dataset. (a) Histogram showing severe class imbalance with small objects (\(< 3\)k pixels) comprising only 1.3% of all instances. (b) Representative potato samples across size categories: \(< 3\)k pixels (\(\approx\) 4 cm diameter), 6–12k pixels, and \(> 24\)k pixels, illustrating detection difficulty variation. Object characteristics and imaging conditions are detailed in 14.

2.5. Dataset and Experimental Design

The dataset comprised 10,000 images with 232,000 annotated objects in VOC2007 format 31, divided into 7,000 training, 2,000 validation, and 1,000 test images across three classes: potato (baresho), clod (dokai), and stone (ishi). The dataset was collected over a 12-month period in 2024, encompassing diverse harvesting conditions. Images were randomly assigned to splits using a fixed random seed for reproducibility. The 1-second temporal spacing between sampled frames (corresponding to approximately 55 source frames at a 55 fps capture rate) ensured functional independence between randomly assigned train/test images, preventing correlation from adjacent video frames.

Analysis revealed significant imbalance in object sizes: small objects, defined as those with bounding box areas below 3,000 pixels, constituted only 1.3% of all instances yet presented a disproportionate detection challenge. Fig. 3(a) illustrates this size-based imbalance, and Fig. 3(b) presents representative potato samples across size categories, with detailed object characteristics previously reported in our comparative study 14. The small object threshold was determined using three criteria: (1) statistical distribution, whereby objects smaller than 3,000 pixels constituted the most underrepresented size category (1.3%), exhibiting severe class imbalance; (2) performance degradation, with preliminary analysis demonstrating approximately 15% lower average precision relative to medium (6–12k pixels) and large (\(> 12\)k pixels) objects; and (3) practical significance, as this category encompassed critical detection challenges including small soil clods and stones difficult to distinguish from small potatoes yet critical for quality control.

The performance deficit for small objects identified in the preliminary analysis established the rationale for this research focus. The experimental system targeted deployment on mobile potato harvesters equipped with onboard edge computing hardware, requiring real-time processing at a minimum of 30 fps.
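A seeded random split and the small object criterion described above can be sketched as follows. The seed value, the function names, and the example box are assumptions made for illustration; the source does not state the seed or the splitting code that was used.

```python
import random


def split_indices(n_images: int, n_train: int, n_val: int, seed: int):
    """Shuffle image indices with a fixed seed and cut them into three splits."""
    indices = list(range(n_images))
    random.Random(seed).shuffle(indices)  # local RNG, so the split is reproducible
    train = indices[:n_train]
    val = indices[n_train:n_train + n_val]
    test = indices[n_train + n_val:]
    return train, val, test


def is_small(xmin: int, ymin: int, xmax: int, ymax: int, threshold: int = 3000) -> bool:
    """Small object criterion from the text: bounding box area below 3,000 pixels."""
    return (xmax - xmin) * (ymax - ymin) < threshold


# seed=0 is an assumed value; the split sizes are the ones reported in the text.
train, val, test = split_indices(10_000, 7_000, 2_000, seed=0)
print(len(train), len(val), len(test))
print(is_small(100, 100, 150, 150))  # 50 x 50 = 2,500 pixels
```

Using a dedicated `random.Random` instance keeps the split independent of any other random state in the program.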

2.6. Training-Level Optimization Techniques

2.6.1. P3 Feature Enhancement

Figure 2 presents the YOLOX architecture with the applied optimization techniques. The P3 feature enhancement, operating at stride 8, provided the highest spatial resolution and was therefore critical for small object detection. A selective attention module was integrated at this level, combining average pooling and max pooling followed by a shared MLP to generate channel-wise attention weights. These weights were applied to the input feature maps and followed by a \(3\times 3\) convolution for feature refinement 32. This channel-wise attention mechanism was inspired by Squeeze-and-Excitation networks 33 that enhance discriminative feature learning through global information aggregation. The enhancement was implemented in both the backbone (CSPDarknet) and neck (YOLOPAFPN) to strengthen feature representations for small objects 34.
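The channel-wise attention step described above can be illustrated with a small plain-Python sketch. It assumes a CBAM-style combination, in which the shared MLP outputs for the average-pooled and max-pooled descriptors are summed and passed through a sigmoid; the source does not specify this detail, the weights `w1` and `w2` are hypothetical, and the subsequent \(3\times 3\) convolution is omitted. A practical implementation would be a module in the training framework rather than nested lists.

```python
import math


def shared_mlp(vec, w1, w2):
    """Two-layer MLP with ReLU; w1 has shape (hidden, C), w2 has shape (C, hidden)."""
    hidden = [max(0.0, sum(w * v for w, v in zip(row, vec))) for row in w1]
    return [sum(w * h for w, h in zip(row, hidden)) for row in w2]


def channel_attention(feature, w1, w2):
    """feature: list of C channels, each an H x W nested list of floats."""
    # Global average and max pooling give one descriptor value per channel.
    avg = [sum(map(sum, ch)) / (len(ch) * len(ch[0])) for ch in feature]
    mx = [max(map(max, ch)) for ch in feature]
    # The same MLP processes both descriptors (assumed: sum, then sigmoid).
    a = shared_mlp(avg, w1, w2)
    m = shared_mlp(mx, w1, w2)
    weights = [1.0 / (1.0 + math.exp(-(x + y))) for x, y in zip(a, m)]
    # Each channel of the input is rescaled by its attention weight.
    scaled = [[[w * v for v in row] for row in ch] for w, ch in zip(weights, feature)]
    return weights, scaled


# Toy input: 2 channels of size 2 x 2, with hypothetical MLP weights.
feature = [[[1.0, 2.0], [3.0, 4.0]], [[0.0, 0.0], [0.0, 1.0]]]
w1 = [[1.0, -1.0]]     # hidden size 1
w2 = [[1.0], [-1.0]]
weights, scaled = channel_attention(feature, w1, w2)
print([round(w, 4) for w in weights])
```

In this toy case the first channel, which carries the stronger response, receives a weight close to 1 and the second a weight close to 0.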

2.6.2. SALW

To increase model sensitivity to small objects during training, a size-based weighting scheme was applied to the loss function. For each ground truth object with bounding box area \(A_i\), a weight \(w_i\) was calculated as 35:

2.6.3. SimOTA Parameter Optimization

The SimOTA label assignment strategy in YOLOX was enhanced through systematic parameter optimization. Whereas the standard YOLOX implementation relied on default SimOTA settings, this study optimized key parameters, including center sampling radius and dynamic top-k IoU estimation, to improve assignment quality for small objects. The optimization focused on enhancing label assignment precision for challenging small object categories while maintaining computational efficiency 36.
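For readers unfamiliar with the dynamic top-k step, the sketch below illustrates the general idea as it is commonly implemented in SimOTA: for each ground truth, the largest candidate IoUs are summed and truncated to obtain the number of positive assignments, with a minimum of one. The parameter name `n_candidates`, its value of 10, and the example numbers are assumptions; the optimized values used in this study are not given in the text, and conflict resolution between ground truths is omitted.

```python
def dynamic_k(ious, n_candidates=10):
    """Number of positives for one ground truth: truncated sum of its top IoUs, at least 1."""
    top = sorted(ious, reverse=True)[:n_candidates]
    return max(1, int(sum(top)))


def assign_positives(costs, ious, n_candidates=10):
    """Return the indices of the dynamic_k candidates with the lowest assignment cost."""
    k = dynamic_k(ious, n_candidates)
    order = sorted(range(len(costs)), key=lambda i: costs[i])
    return order[:k]


# A well-covered object: several candidates overlap strongly.
large_ious = [0.9, 0.8, 0.7, 0.2, 0.1]
large_costs = [0.5, 0.2, 0.9, 1.5, 2.0]
# A small object: every candidate overlaps weakly, so k falls back to 1.
small_ious = [0.3, 0.2, 0.1]
small_costs = [1.2, 0.8, 1.9]

print(dynamic_k(large_ious), assign_positives(large_costs, large_ious))
print(dynamic_k(small_ious), assign_positives(small_costs, small_ious))
```

The second case shows why this step matters for small objects: weak overlaps yield few positive samples, so the candidate pool and sampling radius directly affect how much training signal small instances receive.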

2.6.4. Combined Optimization Strategy

To explore potential synergistic effects, a combined optimization strategy integrated P3 feature enhancement with SimOTA parameter optimization. This approach simultaneously leveraged feature-level improvements (enhanced P3 representations) and assignment-level optimization (improved small object label assignment). The combined model retained identical training hyperparameters while incorporating P3 feature enhancement modules within the neck architecture and optimized SimOTA parameters tailored for small object detection 37,38.

2.7. Inference-Level Parameter Optimization Framework

The inference-level parameter optimization framework systematically determined the optimal combination of confidence thresholds and NMS threshold that satisfied PMR \(<1\%\) and IDR \(>60\%\) while maximizing impurity F1-score as a secondary objective. Asymmetric confidence thresholds accommodated differing requirements for potatoes and impurities. Potato confidence was optimized within the range 0.45–0.85 to minimize false positives critical for PMR control. Impurity confidence, applied to both clod and stone classes, was optimized within 0.2–0.65 to achieve sufficient recall while maintaining acceptable precision. The NMS IoU threshold was tuned within 0.35–0.55 to manage overlapping detections. The optimization process employed a multi-stage grid search. An initial coarse confidence search with a step size of 0.05 was conducted using a fixed default NMS threshold to identify parameter regions satisfying PMR \(< 1{\%}\). A finer grid search was then performed within these viable regions to maximize IDR while maintaining PMR \(< 1{\%}\). Finally, with confidence thresholds fixed, NMS IoU tuning further refined overall performance.
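The multi-stage search described above can be sketched as follows. This is an illustration under stated assumptions rather than the implementation used in the study: `evaluate` is a hypothetical callback returning (PMR, IDR) as fractions on the validation set, the default NMS threshold of 0.45 and the fine and NMS step sizes are assumed values, the fine stage is approximated as a neighbourhood of the best coarse point, and `toy_evaluate` is a synthetic stand-in for a real model.

```python
def frange(start, stop, step):
    """Inclusive grid from start to stop, rounded to suppress float drift."""
    n = int(round((stop - start) / step))
    return [round(start + i * step, 4) for i in range(n + 1)]


def staged_search(evaluate, default_nms=0.45, fine_step=0.01, nms_step=0.05):
    """Coarse confidence search, fine confidence search, then NMS tuning."""
    # Stage 1: coarse grid (step 0.05) at a fixed NMS threshold; keep PMR < 1%.
    viable = []
    for pc in frange(0.45, 0.85, 0.05):
        for ic in frange(0.20, 0.65, 0.05):
            pmr, idr = evaluate(pc, ic, default_nms)
            if pmr < 0.01:
                viable.append((idr, pc, ic))
    if not viable:
        return None
    _, pc0, ic0 = max(viable)  # best coarse point by IDR

    # Stage 2: finer grid around that point, maximizing IDR with PMR < 1%.
    best = None
    for pc in frange(max(0.45, pc0 - 0.05), min(0.85, pc0 + 0.05), fine_step):
        for ic in frange(max(0.20, ic0 - 0.05), min(0.65, ic0 + 0.05), fine_step):
            pmr, idr = evaluate(pc, ic, default_nms)
            if pmr < 0.01 and (best is None or idr > best[0]):
                best = (idr, pc, ic)
    if best is None:
        return None
    _, pc, ic = best

    # Stage 3: confidence thresholds fixed, tune the NMS IoU threshold.
    final = None
    for nms in frange(0.35, 0.55, nms_step):
        pmr, idr = evaluate(pc, ic, nms)
        if pmr < 0.01 and idr > 0.60 and (final is None or idr > final["idr"]):
            final = {"potato_conf": pc, "impurity_conf": ic, "nms_iou": nms,
                     "pmr": pmr, "idr": idr}
    return final


def toy_evaluate(potato_conf, impurity_conf, nms_iou):
    """Synthetic response surface, not measured data."""
    pmr = max(0.0, 0.02 - 0.03 * (potato_conf - 0.45))
    idr = 0.99 - 0.5 * (impurity_conf - 0.20) - 0.1 * abs(nms_iou - 0.45)
    return pmr, idr


result = staged_search(toy_evaluate)
print(result)
```

Searching the confidence thresholds before the NMS threshold keeps the number of evaluations manageable, at the cost of not exploring interactions between the two.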

3. Experiments and Results

3.1. Experimental Setup and Baseline Performance

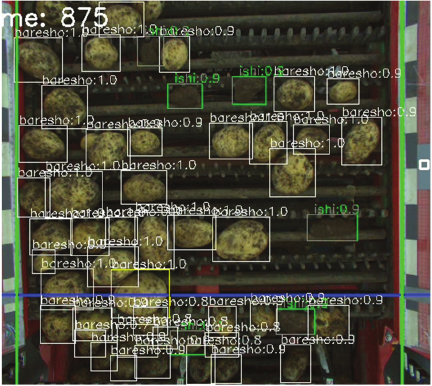

Experiments used the dataset described in Section 2.5, with validation data employed for parameter optimization and test data reserved for final evaluation. All models were trained using the PyTorch 2.3.1 framework on NVIDIA RTX 4090 GPUs, employing the SGD optimizer with momentum 0.9, weight decay 0.0005, a cosine learning rate schedule over 300 epochs, and a batch size of 32. Standard YOLOX data augmentations, including Mosaic and MixUp, were applied, with no additional data collection or specialized small object augmentation. The standard YOLOX-Small model 39, building upon the original YOLO real-time detection framework 40, exhibited the anticipated limitations in small object detection. Precision for potatoes smaller than 3k pixels reached only 0.803, while recall for clods smaller than 3k pixels was critically low at 0.114, confirming size-based performance disparity as a significant challenge requiring targeted intervention. Representative detection examples from actual harvesting operations are shown in Fig. 4, illustrating practical challenges including soil coverage on potato surfaces, strong visual similarity between soil clods and potatoes, and substantial size variation ranging from small (\(< 3\)k pixels) to large (\(> 12\)k pixels) objects under conveyor belt conditions.

Fig. 4. Real-time detection results during field operations. Potatoes (baresho, white boxes) and impurities (ishi-impurity, green boxes) are detected with confidence scores synchronized to conveyor coordinates for pneumatic ejection control.

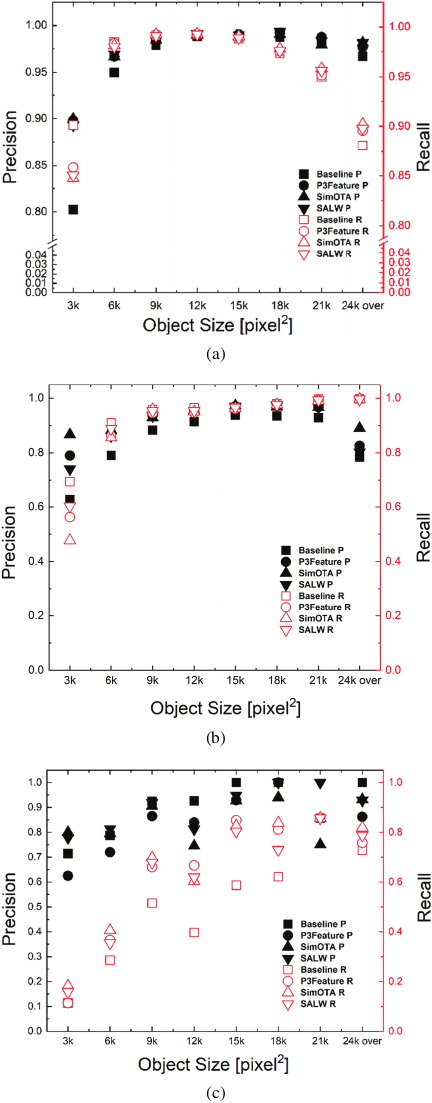

3.2. Training-Level Optimization Impact

Training-level optimization results across object sizes are presented in Fig. 5, showing precision and recall for all three classes under different optimization approaches prior to inference parameter tuning. The integration of P3 feature enhancement led to notable improvements in small object detection, with precision for potatoes smaller than 3k pixels rising to 0.898 and precision for stones in the same size range increasing to 0.790. These results indicate that strengthening high-resolution feature representations improves discrimination of small objects 23. However, this enhancement coincided with reduced recall for small object categories, reflecting a tradeoff in which the model becomes more selective while potentially discarding ambiguous instances.

The model utilizing the standard SimOTA configuration consistently demonstrated high precision across nearly all classes and sizes. Precision for potatoes smaller than 3k pixels reached 0.900, while stones of the same size achieved 0.867, the highest among all methods at this stage. This precision profile provided a strong foundation for satisfying the stringent PMR constraint in the final system.

SALW sought to balance precision and recall enhancement for small objects. While it increased small object precision relative to the baseline, it exhibited a critical limitation 41: recall for large objects exceeding 24k pixels dropped sharply, reaching 0.385 for potatoes and 0.097 for stones. This failure mode would be unacceptable in practical deployment, particularly for large impurities that must be reliably removed.

Fig. 5. Precision and recall vs. object size for all three classes, (a) potato, (b) stone, and (c) clod, across different model variants before inference parameter optimization.

3.3. Combined Optimization Analysis and Critical Incompatibility

Contrary to expectations, the combined P3\(+\)SimOTA model exhibited substantial performance degradation compared to individual optimization strategies. It failed to meet both operational constraints, achieving PMR of 9.90% and IDR of 56.70%, representing a 6.2-fold increase in potato misclassification relative to P3 feature enhancement alone and a 37.3% decrease in impurity detection compared to baseline performance. The inference speed of 34.77 fps showed no improvement over individual optimizations, eliminating any computational justification for the observed performance tradeoffs. This failure arose from fundamental incompatibility between optimization levels 38. P3 feature enhancement operates at the spatial feature level, enhancing high-resolution representations through attention mechanisms, whereas SimOTA functions at the assignment level by optimizing label assignment based on IoU and classification confidence. When combined, these mechanisms introduced a spatial–semantic mismatch that produced overly conservative candidate filtering and degraded detection performance.

Table 1. Comprehensive performance comparison of optimization techniques.

3.4. Inference-Level Parameter Optimization and Final Model Performance

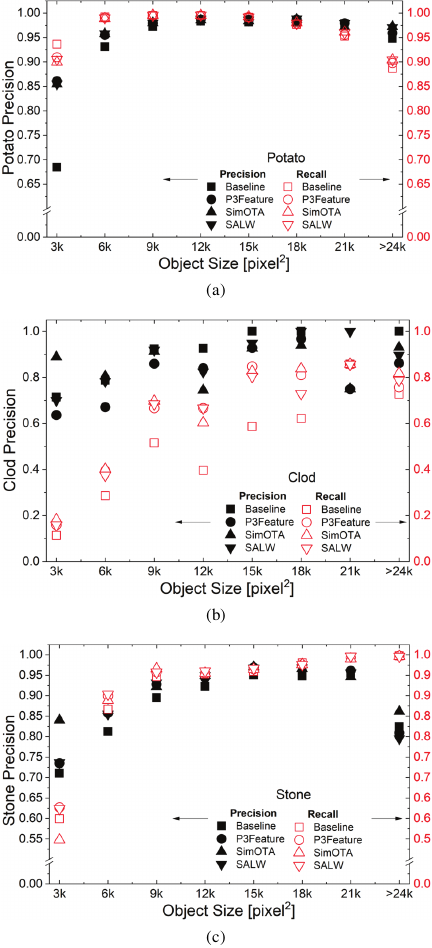

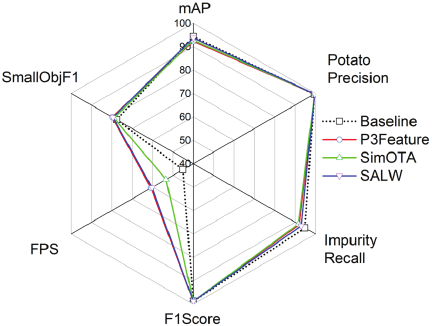

The inference-level optimization framework successfully identified parameter settings that satisfied the dual operational constraints for all four training-level approaches, underscoring the critical role of inference tuning in real-world deployment. Table 1 summarizes the final performance metrics, with post-optimization precision–recall characteristics across object sizes shown in Fig. 6. A comprehensive comparison across evaluation dimensions is provided in the radar chart in Fig. 7.

SimOTA emerged as the optimal configuration, achieving the highest small object recall (18.18%) among all successful models while maintaining excellent operational performance (PMR 0.06%, IDR 95.05%). This outcome directly fulfills the study objective of enhancing small object detection in agricultural harvesting scenarios. The optimized label assignment strategy substantially improved detection of small soil clods and stones, which are critical for quality control yet severely underrepresented in training data. With PMR well below 1% and IDR comfortably exceeding 60%, SimOTA represents the most effective realization of the unified optimization strategy’s goal: leveraging both training-level and inference-level enhancements to achieve superior small object detection under stringent operational constraints.

P3 feature enhancement achieved the lowest PMR (0.05%) across all configurations, offering maximal preservation of marketable potatoes through enhanced high-resolution feature representation. With IDR of 95.76% and small object recall of 15.91%, it constitutes an excellent alternative when minimizing yield loss takes precedence over maximizing small object detection performance, such as in premium-grade processing, where even marginal reductions in potato misclassification justify prioritizing PMR minimization.

Fig. 6. Effect of confidence threshold tuning on precision and recall scores of (a) potato, (b) stone, (c) clod during inference parameter optimization.

Fig. 7. Radar chart comparison of baseline and training-optimized models before inference parameter optimization.

SALW demonstrated successful compensation for training-stage tradeoffs, achieving well-balanced performance (PMR 0.06%, IDR 96.11%, small object recall 15.91%) despite severe large object degradation during training (\(> 24\)k pixels: potato recall 0.385, stone recall 0.097). Inference-level parameter optimization effectively mitigated this limitation through careful threshold tuning, validating the unified optimization strategy’s premise that coordinated training and inference optimizations can function synergistically.

Baseline YOLOX-Small achieved solid operational performance through inference optimization alone (PMR 0.08%, IDR 97.10%), although small object recall (11.36%) remained lower than that of training-optimized models.

By contrast, the P3\(+\)SimOTA configuration catastrophically failed both operational constraints (PMR 9.90%, IDR 56.70%), with small object recall (10.00%) falling below baseline performance. This failure confirms fundamental incompatibility between spatial-level feature enhancement and assignment-level optimization, where conflicting detection behaviors cannot be reconciled through parameter tuning alone.

3.5. Computational Performance and Deployment Validation

Real-time performance capability was confirmed for all models evaluated on Jetson AGX Orin using TensorRT with FP16 precision. Baseline YOLOX-Small operated at approximately 45 fps, while the optimized P3 feature enhancement, SimOTA, and SALW models achieved faster inference speeds of 60 fps, 54 fps, and 48 fps, respectively. This counterintuitive acceleration of the enhanced models can be attributed to several factors 42,43, including more efficient weight distributions resulting from training optimizations and optimized confidence thresholds that discard candidate detections earlier during post-processing, thereby reducing computational load. Dynamic NMS strategies 44 may contribute further post-processing efficiency gains. All configurations comfortably exceeded the real-time requirement of 30 fps, validating their suitability for on-harvester deployment and demonstrating that improvements in detection accuracy can coincide with increased inference efficiency in resource-constrained agricultural edge environments.
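The reported frame rates can be converted into per-frame time budgets to make the margin over the 30 fps requirement explicit. The sketch below uses the approximate fps values quoted above; the function and variable names are illustrative.

```python
def frame_budget_ms(fps: float) -> float:
    """Time available per frame, in milliseconds, at a given frame rate."""
    return 1000.0 / fps


REQUIRED_FPS = 30
reported_fps = {"baseline": 45, "P3": 60, "SimOTA": 54, "SALW": 48}

required_ms = frame_budget_ms(REQUIRED_FPS)
for name, fps in reported_fps.items():
    per_frame = frame_budget_ms(fps)
    # Slack is the unused part of the per-frame budget at the required rate.
    print(f"{name}: {per_frame:.1f} ms/frame, slack {required_ms - per_frame:.1f} ms")
```

At 30 fps the budget is about 33.3 ms per frame, so every configuration listed leaves more than 10 ms of slack for capture, synchronization, and actuation overhead.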

4. Discussion

The experimental findings provide compelling evidence for the efficacy of the proposed unified optimization strategy in addressing the dual challenges of enhancing small object detection while adhering to strict operational constraints (PMR \(< 1{\%}\), IDR \(> 60{\%}\)).

4.1. Evaluation of Metric Alignment with Operational Objectives

Traditional computer vision metrics such as mAP, designed for general object detection benchmarks, fail to capture the asymmetric performance requirements of specialized agricultural applications 30. The results demonstrate that optimization techniques can substantially improve task-specific performance while enabling evaluation frameworks that better reflect real-world deployment scenarios. In agricultural harvesting, operational success is defined by potato preservation (PMR) and contamination removal (IDR) rather than abstract detection accuracy alone. The substantial improvements in meeting operational constraints, with all optimized models achieving PMR \(< 1{\%}\) and IDR \(> 60{\%}\), demonstrate the effectiveness of domain-specific optimization strategies. This represents a meaningful advancement in agricultural automation research, where system performance is ultimately measured by harvest efficiency and crop quality rather than benchmark-oriented metrics 31.

4.2. Interpretation of Training-Level Optimizations

The experimental results indicate that training-level optimizations yield marginal improvements over a well-tuned YOLOX-Small baseline in this agricultural application. P3 feature enhancement achieved the lowest PMR (0.05%), confirming that strengthening high-resolution feature representations aids small object discrimination. The corresponding improvement in small object recall (15.91% vs. 11.36% for the baseline) confirms targeted effectiveness, while the modest precision–recall tradeoff (IDR 95.76% vs. 97.10%) reflects increased selectivity. SimOTA achieved the highest small object recall (18.18%), validating that optimized label assignment improves detection of challenging targets. Its strong operational performance (PMR 0.06%, IDR 95.05%) confirms deployment suitability for applications prioritizing small object detection. SALW produced balanced final performance (PMR 0.06%, IDR 96.11%) despite severe degradation in large object detection during training (\(> 24\)k pixels recall: potato 0.385, stone 0.097). Successful inference-level compensation demonstrates that coordinated training and inference optimizations can mitigate training-stage limitations. Overall, inference-level optimization emerged as the dominant factor in achieving operational constraints, while training-level enhancements provided incremental, task-specific gains (e.g., small object recall) that did not consistently translate into superior overall deployment performance.

4.3. Multi-Component Optimization Incompatibility Analysis

The failure of the P3\(+\)SimOTA configuration provides a critical case study in optimization incompatibility, demonstrating that the effectiveness of individual techniques does not guarantee successful integration 41. The failure stemmed from fundamental incompatibility between optimization levels. P3 feature enhancement operates at the spatial feature level, enhancing high-resolution representations through attention mechanisms, whereas SimOTA functions at the assignment level by optimizing label assignment based on IoU and classification confidence 32,38. When combined, the spatially enhanced features from P3 feature enhancement do not align with the semantically optimized anchor assignments from SimOTA, creating a spatial–semantic mismatch that degraded overall detection performance.

This incompatibility manifested as a double conservatism effect, whereby both techniques independently increased precision at the expense of recall. P3 feature enhancement promoted conservative spatial attention, while SimOTA applied strict assignment criteria, resulting in excessive filtering of candidate detections. The combined effect led to severe performance degradation, with PMR increasing to 9.90% and IDR dropping substantially, thereby violating both operational constraints 27,45. Despite each component meeting operational requirements independently, their combination produced a critical failure mode.

4.4. Operational Decision Framework

The experimental findings establish evidence-based selection criteria aligned with operational priorities and research objectives.

For enhanced small object detection (study objective), SimOTA achieved the highest small object recall (18.18%) while maintaining excellent operational performance (PMR 0.06%, IDR 95.05%), making it the optimal configuration for improving detection of underrepresented small impurities. This directly addresses the core challenge motivating this study: reliable detection of small soil clods and stones that are critical for quality control yet scarce in training data.

For maximum potato preservation, P3 feature enhancement delivered the lowest PMR (0.05%) through enhanced high-resolution feature representation, providing the strongest yield protection. With IDR of 95.76% (comfortably exceeding the 60% threshold) and small object recall of 15.91%, this configuration is well suited to processing scenarios where minimizing potato loss takes precedence.

For balanced performance under data imbalance, SALW (PMR 0.06%, IDR 96.11%, small object recall 15.91%) demonstrated that unified optimization can compensate for training-stage weaknesses through inference-level tuning. Despite severe large object degradation during training, careful parameter optimization achieved operational constraint satisfaction, validating coordinated optimization across training and inference stages.

For minimal implementation complexity, baseline YOLOX-Small achieved solid operational performance (PMR 0.08%, IDR 97.10%) through inference-level optimization alone, offering the most straightforward deployment path. However, its lower small object recall (11.36%) confirms that training-level enhancements provide measurable benefits for the targeted detection challenge.

The P3\(+\)SimOTA configuration, as discussed in Section 4.3, demonstrated fundamental incompatibility (PMR 9.90%, IDR 56.70%), underscoring that multi-component strategies require rigorous compatibility assessment before deployment.
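The selection criteria in this section can be sketched as a small lookup over the reported metrics. This is an illustrative sketch only, not the authors' tooling: the `Config` class, the `feasible` and `select` functions, and the priority names are hypothetical. The metric values are those reported in this section; small object recall for P3+SimOTA is not reported here and is left as `None`.

```python
from dataclasses import dataclass
from typing import Optional

# Operational constraints stated in the paper.
PMR_MAX = 1.0   # potato misclassification rate must stay below 1%
IDR_MIN = 60.0  # impurity detection rate must exceed 60%


@dataclass(frozen=True)
class Config:
    name: str
    pmr: float                     # percent
    idr: float                     # percent
    small_recall: Optional[float]  # percent; None if not reported


# Metric values as reported in this section.
CONFIGS = [
    Config("YOLOX-Small baseline", 0.08, 97.10, 11.36),
    Config("P3", 0.05, 95.76, 15.91),
    Config("SimOTA", 0.06, 95.05, 18.18),
    Config("SALW", 0.06, 96.11, 15.91),
    Config("P3+SimOTA", 9.90, 56.70, None),
]


def feasible(configs):
    """Keep only configurations that satisfy both operational constraints."""
    return [c for c in configs if c.pmr < PMR_MAX and c.idr > IDR_MIN]


def select(priority, configs=CONFIGS):
    """Pick a feasible configuration for a (hypothetical) priority name."""
    candidates = feasible(configs)
    if priority == "small_object_recall":
        return max(candidates, key=lambda c: c.small_recall or 0.0)
    if priority == "potato_preservation":
        return min(candidates, key=lambda c: c.pmr)
    if priority == "highest_idr":
        return max(candidates, key=lambda c: c.idr)
    raise ValueError(f"unknown priority: {priority}")
```

Under these assumptions, `select("small_object_recall")` returns the SimOTA entry and `select("potato_preservation")` returns the P3 entry, while P3+SimOTA is filtered out by `feasible` because it violates both constraints.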

4.5. The Precision-Recall Tradeoff and Inference Tuning Imperative

Training-stage results revealed a consistent precision–recall tradeoff across all methods, where efforts to improve small object detection often enhanced precision but suppressed recall. The initially low recall for small impurities across all configurations demonstrated that training-level optimization alone was insufficient to meet the IDR \(> 60{\%}\) requirement, establishing the necessity of inference-level parameter optimization. The high precision achieved during training provided a stable foundation for inference adjustments, primarily through lowering impurity confidence thresholds to increase recall without excessively compromising overall performance or violating the PMR constraint. These findings suggest that an effective strategy is to prioritize precision during training and subsequently tune the precision–recall balance during inference to meet specific operational requirements 46,41,45.
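The inference-level tuning described above can be illustrated with a simple threshold sweep. The sketch below is not the authors' procedure. It assumes that PMR is the percentage of ground-truth potatoes whose impurity score reaches the threshold and that IDR is the percentage of ground-truth impurities whose impurity score reaches the threshold. The function names, the per-object `(is_impurity, score)` representation, and the sample data are hypothetical and synthetic.

```python
def rates(samples, threshold):
    """Return (PMR, IDR) in percent for one impurity confidence threshold.

    samples: list of (is_impurity, impurity_score) pairs, one per object.
    """
    potatoes = [s for is_imp, s in samples if not is_imp]
    impurities = [s for is_imp, s in samples if is_imp]
    pmr = 100.0 * sum(s >= threshold for s in potatoes) / len(potatoes)
    idr = 100.0 * sum(s >= threshold for s in impurities) / len(impurities)
    return pmr, idr


def tune_threshold(samples, thresholds, pmr_max=1.0, idr_min=60.0):
    """Return (threshold, PMR, IDR) with the highest IDR that still
    satisfies PMR < pmr_max and IDR > idr_min, or None if none qualifies."""
    best = None
    for t in sorted(thresholds):
        pmr, idr = rates(samples, t)
        if pmr < pmr_max and idr > idr_min:
            if best is None or idr > best[2]:
                best = (t, pmr, idr)
    return best


# Synthetic scores for illustration only (not data from the study).
SAMPLES = (
    [(False, 0.05)] * 197 + [(False, 0.25)] * 2 + [(False, 0.45)] * 1
    + [(True, 0.2)] * 3 + [(True, 0.4)] * 4 + [(True, 0.8)] * 3
)
```

On this synthetic data, the lowest threshold in a sweep maximizes IDR but breaches the PMR limit, while high thresholds protect potatoes but miss too many impurities. The sweep returns the lowest threshold at which both constraints hold.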

4.6. Computational Efficiency Considerations

An unexpected yet practically significant finding was the inference speed improvement for all optimized models compared to the baseline. The baseline model operated at 45 fps, whereas the optimized P3 feature enhancement, SimOTA, and SALW models achieved 60 fps, 54 fps, and 48 fps, respectively. Based on prior edge computing research 14, several contributing factors are hypothesized: (1) improved weight distribution efficiency resulting from training optimizations, which benefits GPU execution during TensorRT compilation; (2) reduced post-processing overhead due to optimized confidence thresholds that limit the number of candidates requiring NMS; and (3) TensorRT compilation optimizations, involving layer fusion and memory layout adjustments based on learned weight characteristics.

This counter-intuitive speed improvement is most plausibly attributed to reduced post-processing workload, as refined thresholding significantly minimizes computational burden during NMS. However, definitive attribution would require comprehensive profiling analysis, such as kernel-level investigation using NVIDIA Nsight, which lies beyond the scope of this study. All optimized configurations exceeded the real-time requirement of 30 fps, confirming suitability for on-harvester deployment.
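To put the reported frame rates in terms of per-frame time, the following sketch converts them to latency and computes the margin against the 30 fps requirement. The helper names are hypothetical. The pair-count function is a generic worst-case bound for naive greedy NMS, included only to show why fewer candidates could reduce post-processing work; it is not a measurement from this study and does not describe any specific TensorRT or GPU NMS implementation.

```python
REAL_TIME_FPS = 30.0

# Frame rates as reported in this section.
REPORTED_FPS = {"baseline": 45.0, "P3": 60.0, "SimOTA": 54.0, "SALW": 48.0}


def frame_time_ms(fps):
    """Average time available per frame, in milliseconds."""
    return 1000.0 / fps


def headroom_ms(fps, required_fps=REAL_TIME_FPS):
    """Per-frame margin relative to the real-time requirement."""
    return frame_time_ms(required_fps) - frame_time_ms(fps)


def worst_case_nms_pairs(n_candidates):
    """Upper bound on IoU comparisons in naive greedy NMS: n(n-1)/2."""
    return n_candidates * (n_candidates - 1) // 2


if __name__ == "__main__":
    for name, fps in REPORTED_FPS.items():
        print(f"{name}: {frame_time_ms(fps):.1f} ms/frame, "
              f"{headroom_ms(fps):.1f} ms headroom")
```

Because the worst-case comparison count grows quadratically, halving the number of candidates reduces the bound by roughly a factor of four, which is consistent with, but does not prove, the post-processing hypothesis.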

4.7. Limitations and Future Work

Several limitations provide opportunities for future research. Although SALW improved small object detection, it severely degraded performance for large objects (\(> 24\)k pixels), indicating a need for adaptive weighting strategies that preserve large-object performance. Detection of small clods remained particularly challenging, suggesting the value of class-specific optimization approaches and multi-scale attention mechanisms 47. While the proposed framework shows promise for potato harvesting, validation across additional crops and operational environments would strengthen claims of generalizability 48,49. Expanded field testing under diverse environmental conditions would further assess robustness, while systematic investigation of training-to-inference efficiency relationships could provide valuable insights for edge deployment optimization 50,51,52,53.

5. Conclusion

This study demonstrated that unified optimization successfully addresses small object detection challenges in agricultural automation through coordinated training and inference-level strategies. The proposed framework achieved stringent operational constraints without additional data collection, offering practical value for resource-constrained agricultural systems.

Three key insights emerged. First, inference-level optimization proved essential for meeting operational constraints regardless of training approach. Second, training-level enhancements (P3, SimOTA, SALW) provided targeted benefits aligned with specific priorities, indicating that optimization selection should reflect deployment objectives rather than algorithmic complexity. Third, the failure of the P3\(+\)SimOTA configuration demonstrated that individual optimization effectiveness does not guarantee compatibility, emphasizing the need for systematic assessment before combining techniques.

For practical deployment, SimOTA emerges as the primary recommendation for applications prioritizing small object detection. The framework's ability to satisfy operational requirements while improving inference efficiency validates its suitability for real-time, edge-deployed agricultural automation.

Future work should explore (1) systematic compatibility assessment, (2) adaptive parameter optimization strategies that automatically adjust to varying operational conditions, (3) the mechanisms underlying training-to-inference efficiency gains, and (4) validation across diverse crops and environmental conditions to establish generalization boundaries for the unified optimization framework.

Acknowledgments

This study was supported by the Agricultural Machinery Technology Cluster Project of The Institute of Agricultural Machinery, National Agriculture and Food Research Organization (IAM/NARO). We extend our sincere gratitude to the Tokachi Federation of Agricultural Cooperative for facilitating collaboration with Hokkaido farms and assisting with data annotation. We are particularly grateful to Tsuchiya Shinori, Kainuma Hideo, and Deguchi Noriko for their extensive support in annotation work. Finally, we would like to thank Editage (Cactus Communications K.K., Tokyo, Japan) for English language editing.

- [1] K. Bazargani and T. Deemyad, “Automation’s impact on agriculture: Opportunities, challenges, and economic effects,” Robotics, Vol.13, No.2, Article No.33, 2024. https://doi.org/10.3390/robotics13020033

- [2] M. Spagnuolo, G. Todde, M. Caria, N. Furnitto, G. Schillaci, and S. Failla, “Agricultural robotics: A technical review addressing challenges in sustainable crop production,” Robotics, Vol.14, No.2, Article No.9, 2025. https://doi.org/10.3390/robotics14020009

- [3] S. Mahmoudi, A. Davar, P. Sohrabipour, R. B. Bist, Y. Tao, and D. Wang, “Leveraging imitation learning in agricultural robotics: A comprehensive survey and comparative analysis,” Frontiers in Robotics and AI, Vol.11, Article No.1441312, 2024. https://doi.org/10.3389/frobt.2024.1441312

- [4] S. Kaplan, E. Ropelewska, S. Günaydın, K. Sabancı, and N. Çetin, “Machine learning and computer vision technology to analyze and discriminate soil samples,” Scientific Reports, Vol.14, Article No.19945, 2024. https://doi.org/10.1038/s41598-024-69464-7

- [5] J. Huang, F. Yi, Y. Cui, X. Wang, C. Jin, and F. A. Cheein, “Design and implementation of a seed potato cutting robot using deep learning and delta robotic system with accuracy and speed for automated processing of agricultural products,” Computers and Electronics in Agriculture, Vol.237, Part C, Article No.110716, 2025. https://doi.org/10.1016/j.compag.2025.110716

- [6] S. Singh, R. Vaishnav, S. Gautam, and S. Banerjee, “Agricultural robotics: A comprehensive review of applications, challenges and future prospects,” 2024 2nd Int. Conf. on Artificial Intelligence and Machine Learning Applications Theme: Healthcare and Internet of Things (AIMLA), 2024. https://doi.org/10.1109/AIMLA59606.2024.10531517

- [7] H. Tian, T. Wang, Y. Liu, X. Qiao, and Y. Li, “Computer vision technology in agricultural automation – A review,” Information Processing in Agriculture, Vol.7, No.1, pp. 1-19, 2020. https://doi.org/10.1016/j.inpa.2019.09.006

- [8] Q. He, H. Zhao, Y. Feng et al., “Edge computing-oriented smart agricultural supply chain mechanism with auction and fuzzy neural networks,” J. of Cloud Computing, Vol.13, Article No.66, 2024. https://doi.org/10.1186/s13677-024-00626-8

- [9] T. Wang, B. Chen, Z. Zhang, H. Li, and M. Zhang, “Applications of machine vision in agricultural robot navigation: A review,” Computers and Electronics in Agriculture, Vol.198, Article No.107085, 2022. https://doi.org/10.1016/j.compag.2022.107085

- [10] A. Upadhyay, N. S. Chandel, K. P. Singh, S. K. Chakraborty, B. M. Nandede, M. Kumar, A. Subeesh, K. Upendar, A. Salem, and A. Elbeltagi, “Deep learning and computer vision in plant disease detection: A comprehensive review of techniques, models, and trends in precision agriculture,” Artificial Intelligence Review, Vol.58, Article No.92, 2025. https://doi.org/10.1007/s10462-024-11100-x

- [11] M. Awais, S. M. Z. A. Naqvi, H. Zhang et al., “AI and machine learning for soil analysis: An assessment of sustainable agricultural practices,” Bioresources and Bioprocessing, Vol.10, Article No.90, 2023. https://doi.org/10.1186/s40643-023-00710-y

- [12] J. P. Aryal, D. B. Rahut, G. Thapa, and F. Simtowe, “Mechanisation of small-scale farms in South Asia: Empirical evidence derived from farm households survey,” Technology in Society, Vol.65, Article No.101591, 2021. https://doi.org/10.1016/j.techsoc.2021.101591

- [13] T. Gebiso, M. Ketema, A. Shumetie, and G. L. Feye, “Impact of farm mechanization on crop productivity and economic efficiency in central and southern Oromia, Ethiopia,” Frontiers in Sustainable Food Systems, Vol.8, Article No.1414912, 2024. https://doi.org/10.3389/fsufs.2024.1414912

- [14] H. Zheng, W. Ma, and D. B. Rahut, “Mechanization driving the future of agriculture in Asia,” Asia Pathways, 2024. https://doi.org/10.13140/RG.2.2.36780.58243

- [15] X. Li, L. Zhu, X. Chu, and H. Fu, “Edge computing-enabled wireless sensor networks for multiple data collection tasks in smart agriculture,” J. of Sensors, Article No.4398061, 2020. https://doi.org/10.1155/2020/4398061

- [16] P. E. David, P. R. Chelliah, and P. Anandhakumar, “Reshaping agriculture using intelligent edge computing,” Advances in Computers, Vol.132, pp. 167-204, 2024. https://doi.org/10.1016/bs.adcom.2023.08.007

- [17] J. Kim, G. Kim, R. Yoshitoshi, and K. Tokuda, “Real-time object detection for edge computing-based agricultural automation: A case study comparing the YOLOX and YOLOv12 architectures and their performance in potato harvesting systems,” Sensors, Vol.25, No.15, Article No.4586, 2025. https://doi.org/10.3390/s25154586

- [18] M. Everingham, L. Van Gool, C. K. I. Williams, J. Winn, and A. Zisserman, “The pascal visual object classes (voc) challenge,” Int. J. of Computer Vision, Vol.88, pp. 303-338, 2010. https://doi.org/10.1007/s11263-009-0275-4

- [19] K. S. Kamalesh, R. Kumaraperumal, P. Pazhanivelan, R. Jagadeeswaran, and P. C. Prabu, “YOLO deep learning algorithm for object detection in agriculture: A review,” J. of Agricultural Engineering, Vol.55, No.4, 2024. https://doi.org/10.4081/jae.2024.1641

- [20] R. B. Bist, X. Yang, S. Subedi, K. Bist, B. Paneru, G. Li, and L. Chai, “An automatic method for scoring poultry footpad dermatitis with deep learning and thermal imaging,” Computers and Electronics in Agriculture, Vol.226, Article No.109481, 2024. https://doi.org/10.1016/j.compag.2024.109481

- [21] S. K. Chakraborty, N. S. Chandel, D. Jat, M. K. Tiwari, Y. A. Rajwade, and A. Subeesh, “Deep learning approaches and interventions for futuristic engineering in agriculture,” Neural Computing and Applications, Vol.34, pp. 20539-20573, 2022. https://doi.org/10.1007/s00521-022-07744-x

- [22] Z. Ge, S. Liu, F. Wang, Z. Li, and J. Sun, “YOLOX: Exceeding YOLO series in 2021,” arXiv preprint, arXiv:2107.08430, 2021. https://doi.org/10.48550/arXiv.2107.08430

- [23] G. Yang, J. Wang, Z. Nie, H. Yang, and S. Yu, “A lightweight YOLOv8 tomato detection algorithm combining feature enhancement and attention,” Agronomy, Vol.13, No.7, Article No.1824, 2023. https://doi.org/10.3390/agronomy13071824

- [24] T. Luan, S. Zhou, G. Zhang, Z. Song, J. Wu, and W. Pan, “Enhanced lightweight YOLOX for small object wildfire detection in UAV imagery,” Sensors, Vol.24, No.9, Article No.2710, 2024. https://doi.org/10.3390/s24092710

- [25] B. Ma, Z. Hua, Y. Wen, H. Deng, Y. Zhao, L. Pu, and H. Song, “Using an improved lightweight YOLOv8 model for real-time detection of multi-stage apple fruit in complex orchard environments,” Artificial Intelligence in Agriculture, Vol.11, pp. 70-82, 2024. https://doi.org/10.1016/j.aiia.2024.02.001

- [26] A. Allmendinger, A. O. Saltık, G. G. Peteinatos et al., “Assessing the capability of YOLO- and transformer-based object detectors for real-time weed detection,” Precision Agriculture, Vol.26, Article No.52, 2025. https://doi.org/10.1007/s11119-025-10246-0

- [27] J. M. Johnson and T. M. Khoshgoftaar, “Survey on deep learning with class imbalance,” J. of Big Data, Vol.6, Article No.27, 2019. https://doi.org/10.1186/s40537-019-0192-5

- [28] H. Wu, X. Mo, S. Wen, K. Wu, Y. Ye, Y. Wang et al., “DNE-YOLO: A method for apple fruit detection in diverse natural environments,” J. of King Saud University-Computer and Information Sciences, Vol.36, No.9, Article No.102220, 2024. https://doi.org/10.1016/j.jksuci.2024.102220

- [29] P. Chen, Y. Ren, B. Zhang, and Y. Zhao, “Class imbalance in the automatic interpretation of remote sensing images: A review,” IEEE J. of Selected Topics in Applied Earth Observations and Remote Sensing, Vol.18, pp. 9483-9508, 2025. https://doi.org/10.1109/JSTARS.2025.3555567

- [30] T.-Y. Lin, M. Maire, S. Belongie, J. Hays, P. Perona, D. Ramanan, P. Dollár, and C. L. Zitnick, “Microsoft COCO: Common objects in context,” European Conf. on Computer Vision (ECCV), pp. 740-755, 2014. https://doi.org/10.1007/978-3-319-10602-1_48

- [31] S. Saha, O. D. Kucher, A. O. Utkina, and N. Y. Rebouh, “Precision agriculture for improving crop yield predictions: A literature review,” Frontiers in Agronomy, Vol.7, Article No.1566201, 2025. https://doi.org/10.3389/fagro.2025.1566201

- [32] Y. Tian, Q. Ye, and D. Doermann, “YOLOv12: Attention-centric real-time object detectors,” arXiv preprint, arXiv:2502.12524, 2025. https://doi.org/10.48550/arXiv.2502.12524

- [33] J. Redmon, S. Divvala, R. Girshick, and A. Farhadi, “You only look once: Unified, real-time object detection,” 2016 Proc. of the IEEE Conf. on Computer Vision and Pattern Recognition, pp. 779-788, 2016. https://doi.org/10.1109/CVPR.2016.91

- [34] J. Hu, L. Shen, and G. Sun, “Squeeze-and-excitation networks,” 2018 IEEE/CVF Conf. on Computer Vision and Pattern Recognition, pp. 7132-7141, 2018. https://doi.org/10.1109/CVPR.2018.00745

- [35] A. B. Saad, G. Facciolo, and A. Davy, “On the importance of large objects in CNN based object detection algorithms,” Proc. of the IEEE/CVF Winter Conf. on Applications of Computer Vision (WACV), pp. 522-531, 2024. https://doi.org/10.1109/WACV57701.2024.00059

- [36] Z. Tian, C. Shen, H. Chen, and T. He, “FCOS: Fully convolutional one-stage object detection,” 2019 IEEE/CVF Int. Conf. on Computer Vision (ICCV), pp. 9626-9635, 2019. https://doi.org/10.1109/ICCV.2019.00972

- [37] H. Law and J. Deng, “CornerNet: Detecting objects as paired keypoints,” Proc. of European Conf. on Computer Vision, Vol.128, pp. 642-656, 2020. https://doi.org/10.1007/s11263-019-01204-1

- [38] Z. Ge, S. Liu, Z. Li, O. Yoshie, and J. Sun, “OTA: Optimal transport assignment for object detection,” 2021 IEEE/CVF Conf. on Computer Vision and Pattern Recognition (CVPR), pp. 303-312, 2021. https://doi.org/10.1109/CVPR46437.2021.00037

- [39] T. Miftahushudur, H. M. Sahin, B. Grieve, and H. Yin, “A survey of methods for addressing imbalance data problems in agriculture applications,” Remote Sensing, Vol.17, No.3, Article No.454, 2025. https://doi.org/10.3390/rs17030454

- [40] S. A. Nawaz, J. Li, U. A. Bhatti, M. U. Shoukat, and R. M. Ahmad, “AI-based object detection latest trends in remote sensing, multimedia and agriculture applications,” Frontiers in Plant Science, Vol.13, Article No.1041514, 2022. https://doi.org/10.3389/fpls.2022.1041514

- [41] K. Ghosh, C. Bellinger, R. Corizzo, P. Branco, B. Krawczyk, and N. Japkowicz, “The class imbalance problem in deep learning,” Machine Learning, Vol.113, pp. 4845-4901, 2024. https://doi.org/10.1007/s10994-022-06268-8

- [42] T. Hoefler, D. Alistarh, T. Ben-Nun, N. Dryden, and A. Peste, “Sparsity in deep learning: Pruning and growth for efficient inference and training in neural networks,” J. of Machine Learning Research, Vol.22, No.1, pp. 10882-11005, 2021.

- [43] N. Surantha, “Real-time overhead power line component detection on edge computing platforms,” Computers, Vol.14, No.4, Article No.134, 2025. https://doi.org/10.3390/computers14040134

- [44] H. Zhao, J. Wang, D. Dai, S. Lin, and Z. Chen, “D-NMS: A dynamic NMS network for general object detection,” Neurocomputing, Vol.512, pp. 225-234, 2022. https://doi.org/10.1016/j.neucom.2022.09.080

- [45] P. Chen, Y. Ren, B. Zhang, and Y. Zhao, “Class imbalance in the automatic interpretation of remote sensing images: A review,” IEEE J. of Selected Topics in Applied Earth Observations and Remote Sensing, Vol.18, pp. 9483-9508, 2025. https://doi.org/10.1109/JSTARS.2025.3555567

- [46] J. Peng, Z. Zhao, and D. Liu, “Impact of agricultural mechanization on agricultural production, income, and mechanism: Evidence from Hubei Province, China,” Frontiers in Environmental Science, Vol.10, Article No.838686, 2022. https://doi.org/10.3389/fenvs.2022.838686

- [47] X. Wei, Z. Li, and Y. Wang, “SED-YOLO based multi-scale attention for small object detection in remote sensing,” Scientific Reports, Vol.15, Article No.3125, 2025. https://doi.org/10.1038/s41598-025-87199-x

- [48] M. Ariza-Sentis, S. Velez, R. Martinez-Pena, H. Baja, and J. Valente, “Object detection and tracking in precision farming: A systematic review,” Computers and Electronics in Agriculture, Vol.219, Article No.108757, 2024. https://doi.org/10.1016/j.compag.2024.108757

- [49] F. Yan, X. Sun, S. Chen, and G. Dai, “Does agricultural mechanization improve agricultural environmental efficiency?,” Frontiers in Environmental Science, Vol.11, Article No.1344903, 2023. https://doi.org/10.3389/fenvs.2023.1344903

- [50] R. Khanam and M. Hussain, “YOLOv11: An overview of the key architectural enhancements,” arXiv preprint, arXiv:2410.17725, 2024. https://doi.org/10.48550/arXiv.2410.17725

- [a] J. Terven, D.-M. Córdova-Esparza, and J.-A. Romero-González, “A comprehensive review of YOLO architectures in computer vision: From YOLOv1 to YOLOv8 and YOLO-NAS,” Machine Learning and Knowledge Extraction, Vol.5, No.4, pp. 1680-1716, 2023. https://doi.org/10.3390/make5040083

- [b] R. Qin, Y. Wang, X. Liu, and H. Yu, “Advancing precision agriculture with deep learning enhanced SIS-YOLOv8 for Solanaceae crop monitoring,” Frontiers in Plant Science, Vol.15, Article No.1485903, 2025. https://doi.org/10.3389/fpls.2024.1485903

- [c] J. Qi, C. Ding, R. Zhang et al., “UAS-based MT-YOLO model for detecting missed tassels in hybrid maize detasseling,” Plant Methods, Vol.21, Article No.21, 2025. https://doi.org/10.1186/s13007-025-01341-4

This article is published under a Creative Commons Attribution-NoDerivatives 4.0 International License.
