Paper:

Unified Optimization of YOLOX for Robust Small Object Detection in Real-Time Potato Harvesting

Joonam Kim†, Kenichi Tokuda, Giryeon Kim, and Rena Yoshitoshi

Research Center for Agricultural Robotics, National Agricultural and Food Research Organization

2-1-12 Kannondai, Tsukuba, Ibaraki 305-8642, Japan

†Corresponding author

Automated potato harvesting requires vision systems that meet stringent operational constraints: a near-zero potato misclassification rate (PMR <1%) and a high impurity detection rate (IDR >60%). Although YOLOX supports real-time processing, it exhibits critical performance limitations in small object detection. This paper introduces a unified optimization strategy that combines training-level modifications (P3 feature enhancement, SimOTA parameter optimization, and size-aware loss weighting (SALW)) with inference-level threshold optimization. Experimental results based on 10,000 images containing 232,000 annotations demonstrate that all four evaluated approaches met the defined operational constraints. SimOTA emerged as the optimal configuration, delivering the highest small object recall (18.18%) while maintaining a PMR of 0.06% and an IDR of 95.05%. P3 feature enhancement achieved the lowest PMR (0.05%), and SALW provided balanced performance (PMR 0.06%, IDR 96.11%). In contrast, the P3+SimOTA combination failed critically (PMR 9.90%), revealing a fundamental incompatibility between optimization components. All successful configurations exceeded real-time processing requirements (45–61 fps), confirming their suitability for deployment in resource-constrained agricultural automation systems.
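The operational constraints can be checked mechanically against the figures quoted in the abstract. The following is a minimal sketch, not the authors' evaluation code: the names `REPORTED` and `meets_constraints` are hypothetical, and `None` marks a value that the abstract does not state (the IDR for P3 and for P3+SimOTA), so those cases are reported as undetermined rather than filled in.

```python
from typing import Dict, Optional, Tuple

# Operational constraints stated in the abstract, in percent.
PMR_MAX = 1.0   # potato misclassification rate must stay below 1%
IDR_MIN = 60.0  # impurity detection rate must exceed 60%

# (PMR, IDR) as quoted in the abstract; None = not stated there.
# The dictionary name and layout are illustrative assumptions.
REPORTED: Dict[str, Tuple[float, Optional[float]]] = {
    "SimOTA": (0.06, 95.05),
    "P3": (0.05, None),
    "SALW": (0.06, 96.11),
    "P3+SimOTA": (9.90, None),
}


def meets_constraints(pmr: float, idr: Optional[float]) -> Optional[bool]:
    """Return True or False when the check is decidable, else None."""
    if pmr >= PMR_MAX:
        # A PMR violation fails the check regardless of IDR.
        return False
    if idr is None:
        # PMR passes, but IDR is unknown, so the result is undetermined.
        return None
    return idr > IDR_MIN


results = {name: meets_constraints(pmr, idr)
           for name, (pmr, idr) in REPORTED.items()}

for name, ok in results.items():
    label = {True: "meets", False: "fails", None: "undetermined from abstract"}[ok]
    print(f"{name}: {label}")
```

With the abstract's numbers, P3+SimOTA is rejected on PMR alone (9.90% against the 1% limit), which is why its unreported IDR does not affect the outcome.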

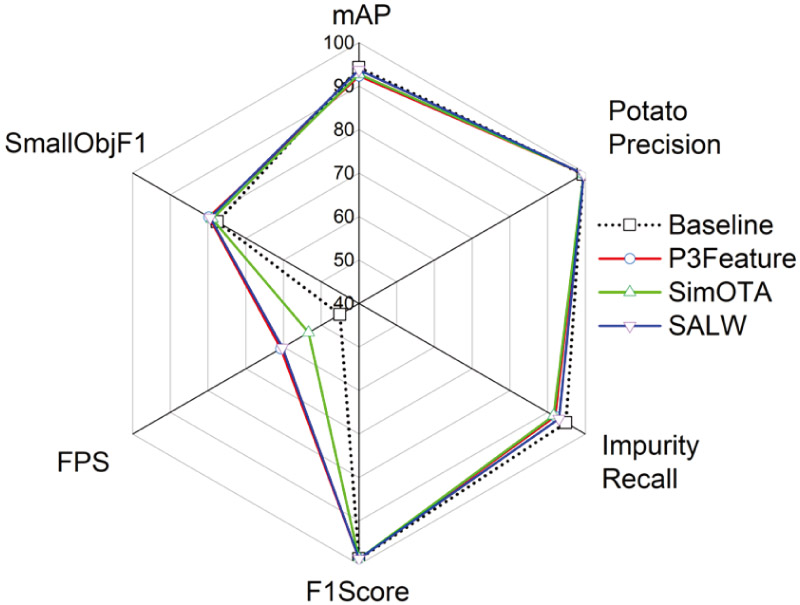

Optimization strategy comparison

This article is published under a Creative Commons Attribution-NoDerivatives 4.0 International License.
