Paper:

Development of a Vermin Detection System Using Multimodal Large Language Models

Koki Sato, Katsuma Akamatsu, Hayato Miura, Satoya Ito, Nagito Imai, Kenta Tada, Ryoma Tanaka, Kota Kohama, Sei Kuratomi, Utaha Ueno, Sanae Sakamoto, Tsubame Shintani, Sho Yamauchi, and Keiji Suzuki

Future University Hakodate

116-2 Kamedanakano-cho, Hakodate, Hokkaido 041-8655, Japan

In recent years, damage to people and crops caused by bears and other vermin has become increasingly serious across Japan. Although smart agricultural monitoring systems have shown some promise, they remain limited by species specificity, high costs, and a lack of adaptability. This study developed and evaluated a zero-shot vermin detection system based on a multimodal large language model. A total of 1,073 images were collected with cameras installed at three locations in Nanae-cho, Hokkaido, Japan, between May and September 2025. Twenty-two of these images showed target animals: 12 bears, nine deer, and one crow. In a comparative evaluation of GPT-4o, LLaVA, YOLO-World, and Grounding DINO, GPT-4o achieved promising recall in our preliminary deployment (recall = 1.00), although it produced 17 false detections in images without animals.
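The counts reported above can be turned into image-level metrics with a few lines of arithmetic. The sketch below assumes that recall and false detections are both counted per image, that recall = 1.00 means all 22 target-animal images were flagged, that the 17 false detections fall on 17 distinct images, and that the remaining 1,051 images count as negatives. None of these conventions is stated explicitly, and the `ImageCounts` class is a hypothetical helper, not part of the described system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ImageCounts:
    """Image-level confusion counts (assumption: one decision per image)."""
    true_positives: int
    false_negatives: int
    false_positives: int
    true_negatives: int

    @property
    def recall(self) -> float:
        # Fraction of target-animal images that were flagged.
        return self.true_positives / (self.true_positives + self.false_negatives)

    @property
    def precision(self) -> float:
        # Fraction of flagged images that actually contained a target animal.
        return self.true_positives / (self.true_positives + self.false_positives)

    @property
    def false_positive_rate(self) -> float:
        # Fraction of negative images that were wrongly flagged.
        return self.false_positives / (self.false_positives + self.true_negatives)


TOTAL_IMAGES = 1073
ANIMAL_IMAGES = 22  # 12 bears + 9 deer + 1 crow
EMPTY_IMAGES = TOTAL_IMAGES - ANIMAL_IMAGES  # assumed negatives: 1,051

# Assumption: every animal image was flagged (recall = 1.00) and the
# 17 false detections correspond to 17 distinct negative images.
gpt4o = ImageCounts(
    true_positives=ANIMAL_IMAGES,
    false_negatives=0,
    false_positives=17,
    true_negatives=EMPTY_IMAGES - 17,
)

print(f"recall    = {gpt4o.recall:.2f}")
print(f"precision = {gpt4o.precision:.3f}")
print(f"FPR       = {gpt4o.false_positive_rate:.4f}")
```

Under these assumptions the script prints a recall of 1.00, a precision of about 0.564 (22 of 39 flagged images), and a false-positive rate of about 1.6%. This makes the trade-off behind "promising recall" concrete: every animal image is caught, but roughly 44% of alerts would be false alarms.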

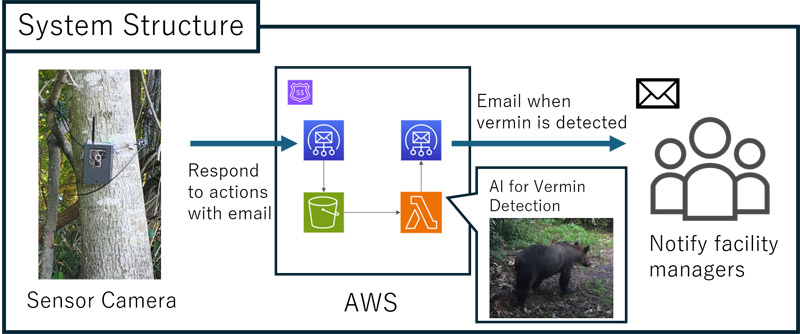

Multimodal LLM vermin detection system using AWS Lambda for email alerts
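The caption indicates that detections trigger email alerts through AWS Lambda. The sketch below shows only the decision step that such a function might run after the multimodal model has answered, assuming the model is prompted to reply in a small JSON format such as `{"animal": "bear"}`. The reply format, the label set, and the functions `parse_detection` and `build_alert` are hypothetical; the call to GPT-4o and the email-sending service are deliberately not shown.

```python
import json
from typing import Optional

# Hypothetical label set matching the target animals in this study.
TARGET_ANIMALS = {"bear", "deer", "crow"}


def parse_detection(reply: str) -> Optional[str]:
    """Return the detected target animal from a (hypothetical) JSON reply, or None.

    Assumes the model was prompted to answer with {"animal": "<label>"},
    using "none" when no target animal is visible.
    """
    try:
        payload = json.loads(reply)
    except json.JSONDecodeError:
        # Malformed replies are treated as "no detection" in this sketch.
        return None
    if not isinstance(payload, dict):
        return None
    label = str(payload.get("animal", "none")).strip().lower()
    return label if label in TARGET_ANIMALS else None


def build_alert(image_id: str, reply: str) -> Optional[dict]:
    """Build an email alert message for a detection, or None if no alert is needed."""
    animal = parse_detection(reply)
    if animal is None:
        return None
    return {
        "subject": f"Vermin alert: {animal} detected",
        "body": f"Camera image {image_id} was classified as containing a {animal}.",
    }


# Example: a reply naming a bear produces an alert; "none" produces nothing.
print(build_alert("cam1-0001", '{"animal": "Bear"}'))
print(build_alert("cam1-0002", '{"animal": "none"}'))
```

Constraining the reply to a fixed label set keeps the alert logic independent of the model's free-form wording. Because missing a bear is costlier than a false alarm, a deployment prioritizing recall might route malformed replies to manual review instead of silently dropping them.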

This article is published under a Creative Commons Attribution-NoDerivatives 4.0 International License.