Paper:

Dynamic Visualization of Construction Sites with Machine-Borne Sensors Toward Automated Earth Moving

Ryo Nakamura*, Masato Domae*, Takaaki Morimoto**, Takeya Izumikawa**, and Hiromitsu Fujii*

*Chiba Institute of Technology

2-17-1 Tsudanuma, Narashino, Chiba 275-0016, Japan

**Sumitomo Construction Machinery Co., Ltd.

731-1 Naganumahara-cho, Inage-ku, Chiba, Chiba 263-0001, Japan

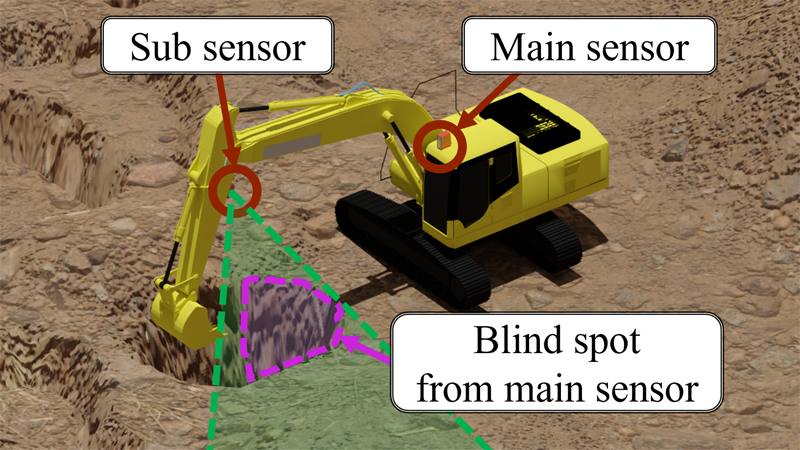

The digitization of construction sites has progressed rapidly. This study aims to advance the operation of hydraulic excavators, which are widely used in the construction industry, toward automation and unmanned operation. Achieving this requires perceiving the environment surrounding the machines on site, along with a real-time measurement and visualization method that can be deployed at common construction sites. We therefore propose a measurement system that reconstructs a wide area of the surrounding environment using machine-borne sensors mounted on a hydraulic excavator. The proposed system measures the entire surrounding environment with a sensor unit composed of a laser imaging detection and ranging (LiDAR) sensor and a wide-angle camera. Furthermore, we propose time-series integration methods that support both wide-range measurement and dynamic measurement during work, enabling occlusion-robust visualization. In an experiment using actual machines at an earth-moving site, we quantitatively evaluated the performance of the proposed system and confirmed that it provides an effective solution for the digitization of construction sites.

Non-occluded measurement with machine-borne sensors
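The idea of integrating machine-borne measurements over time so that occluded areas remain visible can be illustrated with a minimal sketch. It is not the authors' method. It assumes a hypothetical pose model in which the sensor sits at a fixed offset on the upper structure and only the swing angle changes, and it stores the surroundings as a 2.5D height grid: cells observed in the current scan are overwritten with the newest height, while cells hidden in the current scan (for example, behind the boom) keep their last observed value. The names `sensor_to_site`, `HeightMap`, `mount`, `base`, and `cell_size` are all illustrative.

```python
import math
from dataclasses import dataclass, field


def sensor_to_site(points, swing_rad, mount, base):
    """Map sensor-frame points (x, y, z) into a site frame.

    Hypothetical pose model: the sensor axes are aligned with the upper
    structure, the sensor sits at offset `mount` on it, and the structure
    swings by `swing_rad` about the vertical axis through `base`.
    Boom/arm motion and machine tilt are ignored.
    """
    c, s = math.cos(swing_rad), math.sin(swing_rad)
    mx, my, mz = mount
    bx, by, bz = base
    out = []
    for x, y, z in points:
        px, py, pz = x + mx, y + my, z + mz  # point in the upper-structure frame
        out.append((c * px - s * py + bx, s * px + c * py + by, pz + bz))
    return out


@dataclass
class HeightMap:
    """Sparse 2.5D grid: (i, j) cell index -> latest observed height."""

    cell_size: float
    heights: dict = field(default_factory=dict)  # (i, j) -> height [m]
    stamps: dict = field(default_factory=dict)   # (i, j) -> last update time [s]

    def cell(self, x, y):
        return (math.floor(x / self.cell_size), math.floor(y / self.cell_size))

    def integrate(self, points, stamp):
        """Fuse one scan (site-frame points) and return the number of cells it observed."""
        # Within a single scan, keep the highest return per cell (top surface).
        scan = {}
        for x, y, z in points:
            key = self.cell(x, y)
            if key not in scan or z > scan[key]:
                scan[key] = z
        # Observed cells take the newest value; unobserved cells keep older data.
        for key, z in scan.items():
            self.heights[key] = z
            self.stamps[key] = stamp
        return len(scan)
```

Overwriting observed cells lets excavated or dumped soil appear as soon as it is measured, while cells that are occluded in the current scan still show their previous height instead of disappearing. A practical system would also need to remove returns from the excavator's own body and handle sensor noise, which this sketch does not attempt.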


This article is published under a Creative Commons Attribution-NoDerivatives 4.0 International License.