Paper:

Image Search Strategy via Visual Servoing for Robotic Kidney Ultrasound Imaging

Takumi Fujibayashi*,**, Norihiro Koizumi**, Yu Nishiyama**, Jiayi Zhou**, Hiroyuki Tsukihara***, Kiyoshi Yoshinaka*, and Ryosuke Tsumura*

*Health and Medical Research Institute, National Institute of Advanced Industrial Science and Technology

1-2-1 Namiki, Tsukuba, Ibaraki 305-8564, Japan

**Graduate School of Informatics and Engineering, The University of Electro-Communications

1-5-1 Chofugaoka, Chofu, Tokyo 182-8585, Japan

***Graduate School of Engineering, The University of Tokyo

7-3-1 Hongo, Bunkyo-ku, Tokyo 113-8656, Japan

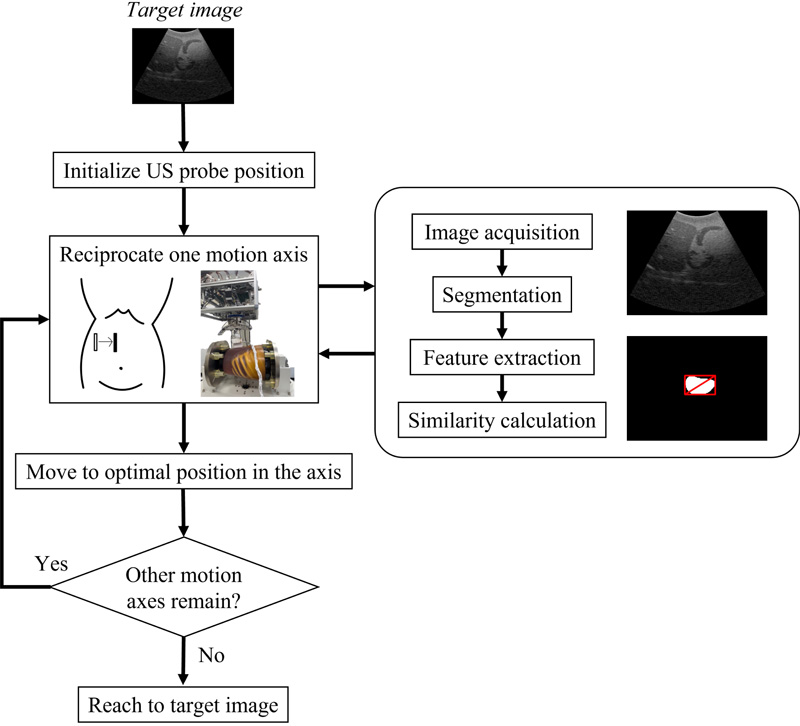

Ultrasound (US) imaging is beneficial for kidney diagnosis; however, obtaining the target image involves sophisticated probe-handling tasks that must be performed by physicians. We propose a target-image search strategy for robotic kidney US imaging that combines visual servoing with deep learning-based image evaluation. The search strategy is designed to mimic the motion axes along which physicians move the US probe. The position of the US probe is controlled along each motion axis while the acquired US images are evaluated with an anatomical feature extraction method based on instance segmentation with YOLACT++, which allows the system to search for an optimal target image. The proposed approach was validated through phantom studies. The results showed that it could find the target kidney images with errors of 2.88±1.76 mm and 2.75±3.36°. Thus, the proposed method enables accurate identification of the target image, which highlights its potential for application in autonomous kidney US imaging.

Search strategy based on visual servoing
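The axis-by-axis search described in the abstract can be illustrated with a minimal sketch. It is not the authors' implementation: the axis names (`x`, `tilt`), the step sizes, and the `score` callback are hypothetical stand-ins, and the toy score below replaces the YOLACT++-based image evaluation with a simple distance to a hidden target pose. The sketch only shows the general idea of sweeping one motion axis at a time and keeping the position whose image scores best.

```python
from typing import Callable, Dict, Sequence

Pose = Dict[str, float]  # hypothetical probe pose: axis name -> position


def search_along_axes(
    score: Callable[[Pose], float],
    start: Pose,
    axes: Sequence[str],
    step: Dict[str, float],
    n_steps: int,
) -> Pose:
    """Sweep each axis in turn and keep the best-scoring position on it.

    `score` stands in for the image evaluation: it is assumed to return a
    higher value when the image acquired at a pose is closer to the target.
    """
    pose = dict(start)
    for axis in axes:
        best_value = pose[axis]
        best_score = score(pose)
        # Try evenly spaced offsets on both sides of the current position.
        for k in range(-n_steps, n_steps + 1):
            candidate = dict(pose)
            candidate[axis] = pose[axis] + k * step[axis]
            candidate_score = score(candidate)
            if candidate_score > best_score:
                best_value, best_score = candidate[axis], candidate_score
        pose[axis] = best_value  # move the probe before sweeping the next axis
    return pose


# Toy evaluation (assumption): negative squared distance to a hidden target.
TARGET = {"x": 10.0, "tilt": -4.0}


def toy_score(pose: Pose) -> float:
    return -sum((pose[a] - TARGET[a]) ** 2 for a in TARGET)


found = search_along_axes(
    toy_score,
    start={"x": 0.0, "tilt": 0.0},
    axes=["x", "tilt"],
    step={"x": 2.0, "tilt": 1.0},
    n_steps=6,
)
print(found)
```

Because the toy score is separable per axis, one pass over the axes reaches the target pose. With a real image-based score the axes would generally interact, so the sweep order and step sizes would matter and repeated passes might be needed.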


This article is published under a Creative Commons Attribution-NoDerivatives 4.0 International License.