Paper:

Through-Hole Detection and Finger Insertion Planning as Preceding Motion for Hooking and Caging Ring-Shaped Objects

Koshi Makihara*, Takuya Otsubo**, and Satoshi Makita***

*Osaka University

1-3 Machikaneyama, Toyonaka, Osaka 560-8531, Japan

**National Institute of Technology, Sasebo College

1-1 Okishincho, Sasebo, Nagasaki 857-1193, Japan

***Fukuoka Institute of Technology

3-30-1 Wajiro-higashi, Higashi-ku, Fukuoka, Fukuoka 811-0295, Japan

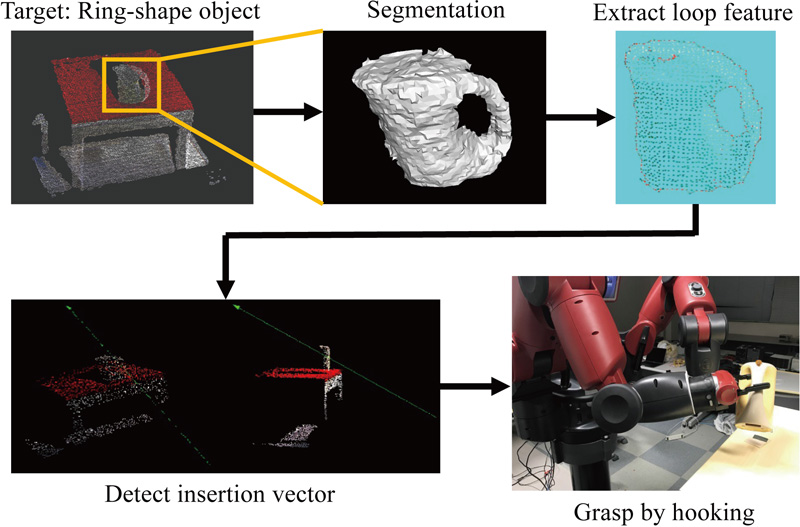

This study investigated a pregrasp strategy for hooking and caging ring-shaped objects. A through-hole enables a robot hand to hook an object by inserting a finger into the hole. Compared with grasping the ring directly, an insertion motion tolerates positioning errors better and makes it easier to avoid collisions between the hand and the object. Instead of recognizing the exact shape of the object, we detected only its ring-shaped feature as a through-hole for insertion and estimated the approximate center position and orientation of that feature from the point cloud of the object. The estimated geometric properties were then used to plan the approach motion with which the robotic gripper completes the insertion. The proposed perception and motion-planning method was demonstrated on rigid and deformable objects with holes.
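The estimation step described above can be illustrated with a minimal sketch. It is not the authors' algorithm: it assumes the points on the rim of the hole have already been segmented, takes their centroid as the approximate center, and takes the direction of least variance (principal component analysis) as the hole axis. The function names, the `sensor_origin` parameter, and the `standoff` distance are hypothetical and chosen only for illustration.

```python
import math


def _smallest_eigenvector(cov, iterations=100):
    """Eigenvector of a symmetric 3x3 matrix with the smallest eigenvalue.

    Uses power iteration on (trace * I - cov), whose dominant eigenvector
    is the least-variance direction of cov.
    """
    trace = cov[0][0] + cov[1][1] + cov[2][2]
    shifted = [[(trace if i == j else 0.0) - cov[i][j] for j in range(3)]
               for i in range(3)]
    best, best_value = None, -1.0
    # Try each axis as a start so that one unlucky start cannot hide the answer.
    for axis in range(3):
        v = [1.0 if i == axis else 0.0 for i in range(3)]
        for _ in range(iterations):
            w = [sum(shifted[i][j] * v[j] for j in range(3)) for i in range(3)]
            norm = math.sqrt(sum(x * x for x in w))
            if norm == 0.0:
                break
            v = [x / norm for x in w]
        # Rayleigh quotient: larger means closer to the dominant eigenvector.
        value = sum(v[i] * sum(shifted[i][j] * v[j] for j in range(3))
                    for i in range(3))
        if value > best_value:
            best, best_value = v, value
    return best


def estimate_hole_pose(points, sensor_origin=(0.0, 0.0, 0.0)):
    """Return (center, normal) for a roughly planar ring of 3D points.

    Assumption: `points` contains only the rim of one through-hole.
    """
    n = len(points)
    center = [sum(p[i] for p in points) / n for i in range(3)]
    cov = [[0.0] * 3 for _ in range(3)]
    for p in points:
        d = [p[i] - center[i] for i in range(3)]
        for i in range(3):
            for j in range(3):
                cov[i][j] += d[i] * d[j] / n
    normal = _smallest_eigenvector(cov)
    # The eigenvector sign is arbitrary, so make the normal face the sensor.
    to_sensor = [sensor_origin[i] - center[i] for i in range(3)]
    if sum(normal[i] * to_sensor[i] for i in range(3)) < 0:
        normal = [-x for x in normal]
    return center, normal


def pre_insertion_point(center, normal, standoff=0.05):
    """Point on the hole axis, `standoff` (same unit as the cloud) in front."""
    return [center[i] + standoff * normal[i] for i in range(3)]
```

Under these assumptions, a fingertip would first move to `pre_insertion_point(center, normal)` and then advance along `-normal` through the hole. A centroid is only a rough center when the sensor sees part of the rim, which is consistent with relying on an approximate pose rather than an exact shape model.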

Planning of finger insertion motion


This article is published under a Creative Commons Attribution-NoDerivatives 4.0 International License.
