Paper:

Development of Aerial Interface by Integrating Omnidirectional Aerial Display, Motion Tracking, and Virtual Reality Space Construction

Masaki Yasugi, Mayu Adachi, Kosuke Inoue, Nao Ninomiya, Shiro Suyama, and Hirotsugu Yamamoto

Utsunomiya University

7-1-2 Yoto, Utsunomiya, Tochigi 321-0904, Japan

By combining an aerial display, a user-tracking system, and a virtual reality (VR) space, we realized an aerial interface that provides both an immersive user experience and real-time communication with people outside the device. To measure the latency of this aerial interface system, we developed a simple application that detects user contact and changes the color of an object.

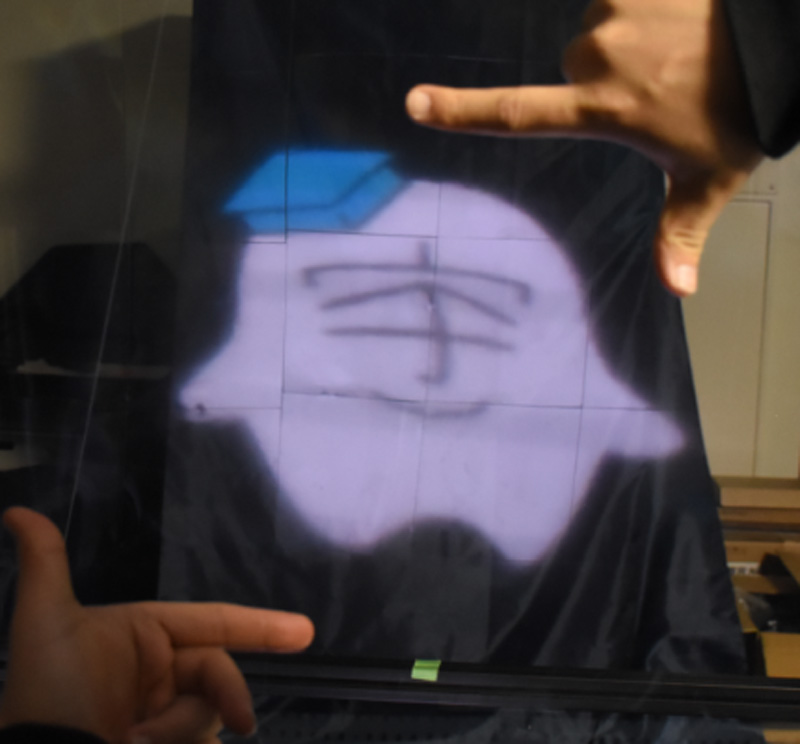

Life-size aerial image formed by our system


This article is published under a Creative Commons Attribution-NoDerivatives 4.0 International License.
