Paper:

Robotic Assistance for Peg-and-Hole Alignment by Mimicking Annular Solar Eclipse Process

Shouren Huang*1, Kenichi Murakami*2, Masatoshi Ishikawa*1,*3, and Yuji Yamakawa*4

*1Data Science Division, Information Technology Center, The University of Tokyo

7-3-1 Hongo, Bunkyo-ku, Tokyo 113-8656, Japan

*2Department of Mechanical and Biofunctional Systems, Institute of Industrial Science, The University of Tokyo

4-6-1 Komaba, Meguro-ku, Tokyo 153-8505, Japan

*3Tokyo University of Science

1-3 Kagurazaka, Shinjuku-ku, Tokyo 162-8601, Japan

*4Interfaculty Initiative in Information Studies, The University of Tokyo

4-6-1 Komaba, Meguro-ku, Tokyo 153-8505, Japan

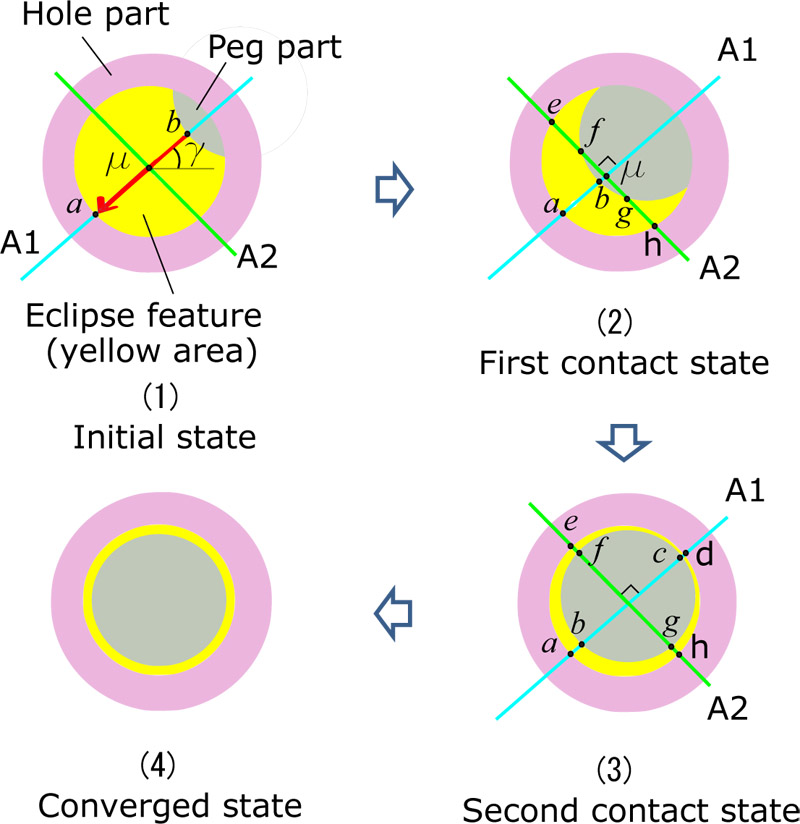

This study focuses on robotic assistance for peg-and-hole alignment with micrometer-order clearance. The robotic assistance cooperates with a human operator under a coarse-to-fine strategy: the human operator performs coarse alignment, and the robot performs fine alignment. Robot-assisted fine alignment is achieved by mimicking the progression toward annularity in an annular solar eclipse. A specified image feature, which we call the eclipse feature, is obtained by subtracting the surfaces of a hole part (a small gear with an inner diameter of 1 mm) and a peg part (a shaft with a diameter of 0.95 mm), and its first principal axis is calculated. Based on this feature, a control strategy is developed to realize accurate alignment. The effectiveness of the proposed method is verified by experimental evaluation.
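The idea of an eclipse feature and its first principal axis can be illustrated with a minimal synthetic sketch. It is not the authors' implementation: the helper names (`disk_mask`, `eclipse_feature`, `principal_axis`), the binary-mask representation, and the pixel scale (50 px for the 1 mm hole, 47.5 px for the 0.95 mm peg) are assumptions made only for illustration. The sketch subtracts a peg mask from a hole mask and applies principal component analysis to the remaining pixels.

```python
import numpy as np


def disk_mask(shape, center, radius):
    """Boolean image of a filled disk; center is (x, y) in pixels (hypothetical helper)."""
    yy, xx = np.mgrid[: shape[0], : shape[1]]
    return (xx - center[0]) ** 2 + (yy - center[1]) ** 2 <= radius ** 2


def eclipse_feature(hole_mask, peg_mask):
    """Pixels of the hole surface not covered by the peg surface (assumed definition)."""
    return hole_mask & ~peg_mask


def principal_axis(mask):
    """Centroid, first principal axis, and descending eigenvalues of the mask pixels."""
    ys, xs = np.nonzero(mask)
    pts = np.stack([xs, ys], axis=1).astype(float)
    centroid = pts.mean(axis=0)
    cov = np.cov((pts - centroid).T)          # 2x2 covariance of pixel coordinates
    eigvals, eigvecs = np.linalg.eigh(cov)    # eigenvalues in ascending order
    return centroid, eigvecs[:, -1], eigvals[::-1]


if __name__ == "__main__":
    shape = (200, 200)
    hole = disk_mask(shape, (100, 100), 50.0)   # assumed scale: 1 mm -> 50 px
    for dx in (0, 2):                           # peg offset along x, in pixels
        peg = disk_mask(shape, (100 + dx, 100), 47.5)
        c, axis, vals = principal_axis(eclipse_feature(hole, peg))
        print(dx, c, axis, vals[0] / vals[1])
```

In this synthetic setting, a concentric peg leaves a ring whose two eigenvalues are nearly equal, whereas an offset peg leaves a crescent with a clearly dominant axis and a shifted centroid. How the paper maps these quantities to robot motion is not stated in the abstract, so no control law is sketched here.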

Alignment strategy mimicking annular solar eclipse

This article is published under a Creative Commons Attribution-NoDerivatives 4.0 International License.