Paper:

Bilaterally Shared Haptic Perception for Human-Robot Collaboration in Grasping Operation

Yoshihiro Tanaka*1, Shogo Shiraki*1, Kazuki Katayama*1, Kouta Minamizawa*2, and Domenico Prattichizzo*3,*4

*1Nagoya Institute of Technology

Gokiso, Showa-ku, Nagoya, Aichi 466-8555, Japan

*2Keio University Graduate School of Media Design

4-1-1 Hiyoshi, Kohoku-ku, Yokohama, Kanagawa 223-8526, Japan

*3Department of Information Engineering and Mathematics, University of Siena

Via Roma 56, Siena 53100, Italy

*4Department of Human Centered Mechatronics, Istituto Italiano di Tecnologia

Via Morego 30, Genova 16163, Italy

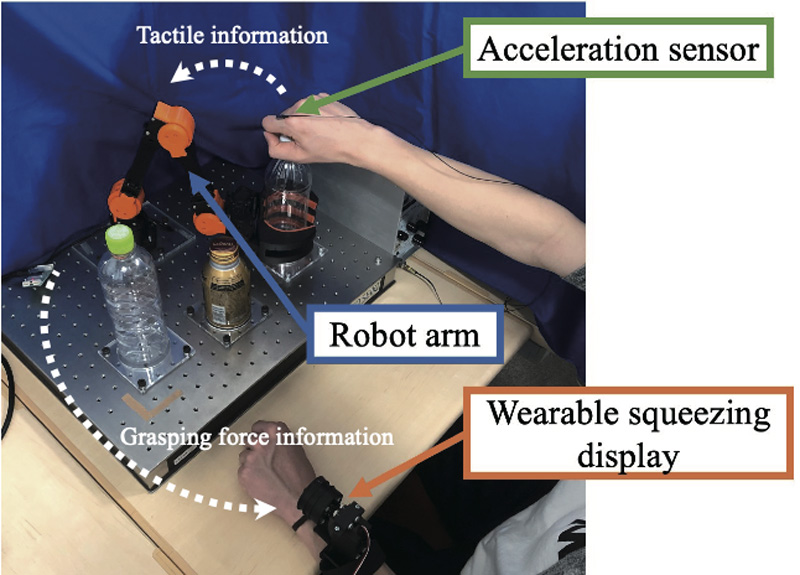

Tactile sensations are crucial for achieving precise operations. A haptic connection between a human operator and a robot has the potential to promote smooth human-robot collaboration (HRC). In this study, we assemble a bilaterally shared haptic system for grasping operations in which the human and the robot cooperate like the two hands of one person, using a bottle cap-opening task. A robot arm controls the grasping force according to tactile information from the human, who opens the cap while wearing a finger-attached acceleration sensor. The grasping force of the robot arm is then fed back to the human through a wearable squeezing display. Three experiments are conducted: (1) measurement of the just noticeable difference of the tactile display; (2) a collaborative task with different bottles under two conditions, with and without tactile feedback, including psychological evaluation using a questionnaire; and (3) a collaborative task under an explicit strategy. The results showed that the tactile feedback gave the operator confidence that the cooperative robot was adjusting its action, and that it improved the stability of the task when an explicit strategy was used. These results indicate the effectiveness of tactile feedback and the need for operators to adopt an explicit strategy, providing insight into the design of HRC with bilaterally shared haptic perception.

Human-robot collaboration system with bilaterally shared haptic perception


This article is published under a Creative Commons Attribution-NoDerivatives 4.0 International License.
