Paper:

Dynamic Brake Control for a Wearable Impulsive Force Display by a String and a Brake System

Satoshi Saga and Naoto Ikeda

Kumamoto University

2-39-1 Kurokami, Chuo-ku, Kumamoto 860-8555, Japan

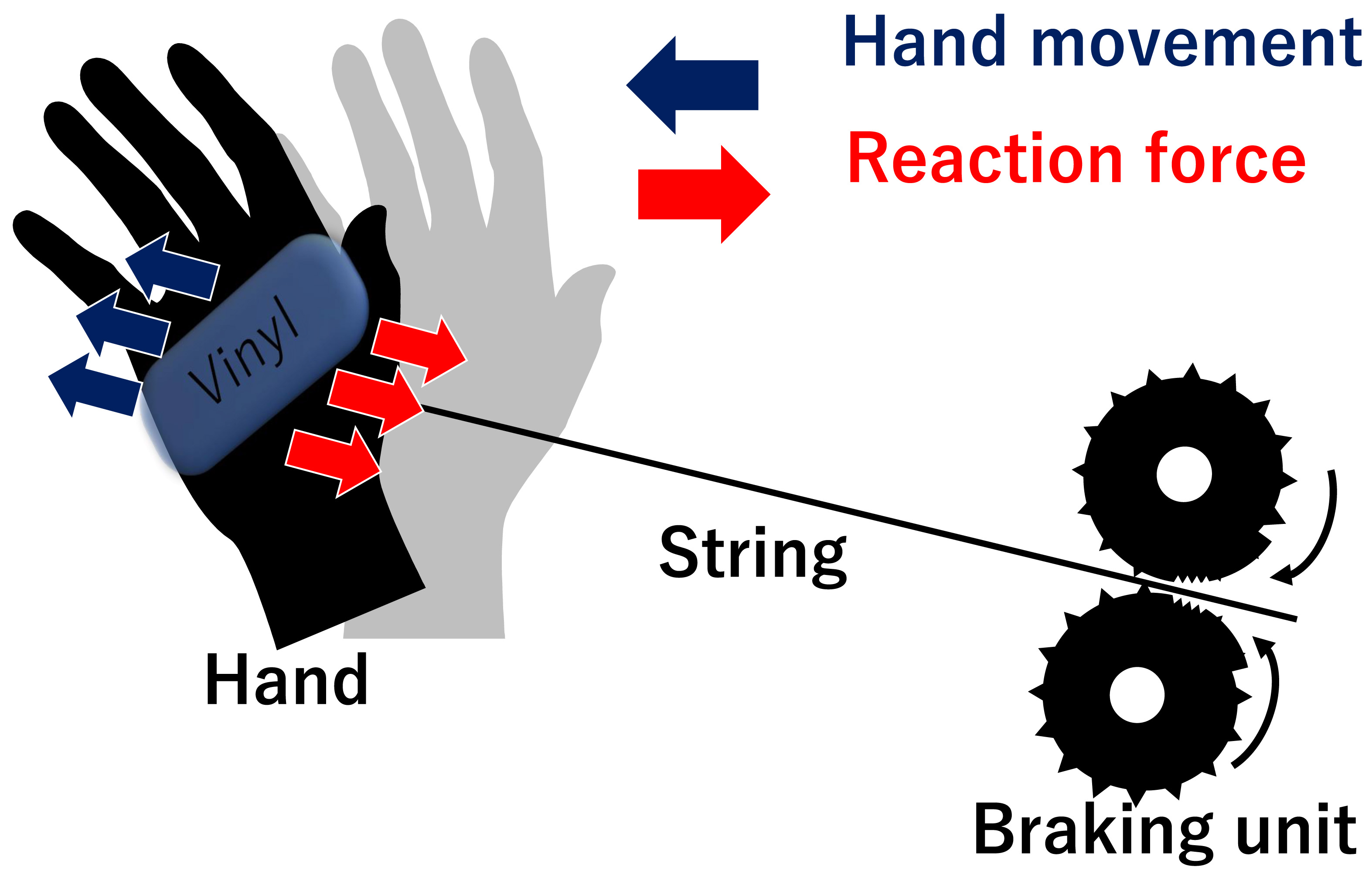

In recent years, it has become possible to experience sports in virtual reality (VR). Most haptic displays for VR environments currently rely on vibrators, but vibration is poorly suited to expressing the sensation of receiving a ball in a VR sports experience. We have therefore developed a novel haptic display that reproduces an impulsive force by instantaneously applying traction to the palm using a string and a wearable brake system. This paper proposes a method for presenting various reaction forces through dynamic control of the braking system and reports a quantitative evaluation of the device’s physical and psychological usability.

System overview of the braking mechanism

This article is published under a Creative Commons Attribution-NoDerivatives 4.0 International License.
