Paper:

Development and Experimental Verification of a Person Tracking System of Mobile Robots Using Sensor Fusion of Inertial Measurement Unit and Laser Range Finder for Occlusion Avoidance

Kazuhiro Funato*, Ryosuke Tasaki**, Hiroto Sakurai*, and Kazuhiko Terashima*

*Toyohashi University of Technology

1-1 Hibarigaoka, Tempaku-cho, Toyohashi, Aichi 441-8580, Japan

**Aoyama Gakuin University

5-10-1 Fuchinobe, Chuo-ku, Sagamihara, Kanagawa 252-5258, Japan

The authors have been developing a mobile robot to assist doctors in hospitals in managing medical tools and patient electronic medical records. The robot follows a moving medical worker while maintaining a constant distance from the worker. However, the robot has difficulty detecting objects in the region hidden from its sensor, a condition called occlusion. In this study, we propose a sensor fusion method that estimates the position of the robot's tracking target indirectly with an inertial measurement unit (IMU), in addition to direct measurement with a laser range finder (LRF), and we develop a person tracking system that lets a mobile robot avoid occlusion. We then carry out detailed tracking experiments with a specified person to verify the validity of the proposed method.

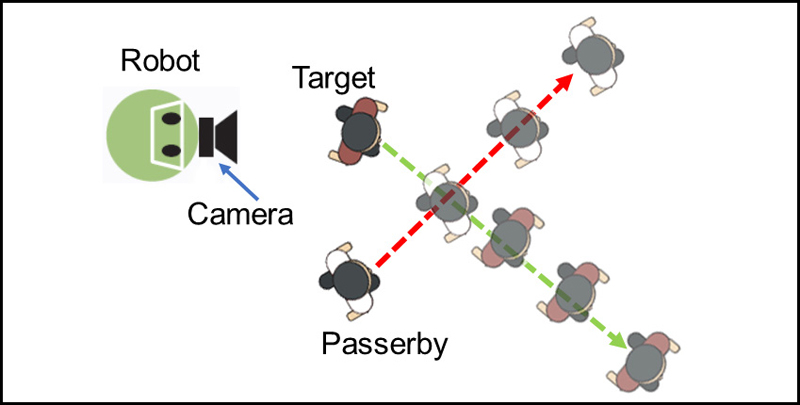

A case of misidentifying a passerby as a tracking target


This article is published under a Creative Commons Attribution-NoDerivatives 4.0 International License.
