Paper:

Proposal of a Behavioral Model for Robots Supporting Learning According to Learners’ Learning Performance

Ryo Yoshizawa*, Felix Jimenez**, and Kazuhito Murakami**

*Graduate School of Information Science and Technology, Aichi Prefectural University

1522-3 Ibaragabasama, Nagakute-shi, Aichi 480-1198, Japan

**School of Information Science and Technology, Aichi Prefectural University

1522-3 Ibaragabasama, Nagakute-shi, Aichi 480-1198, Japan

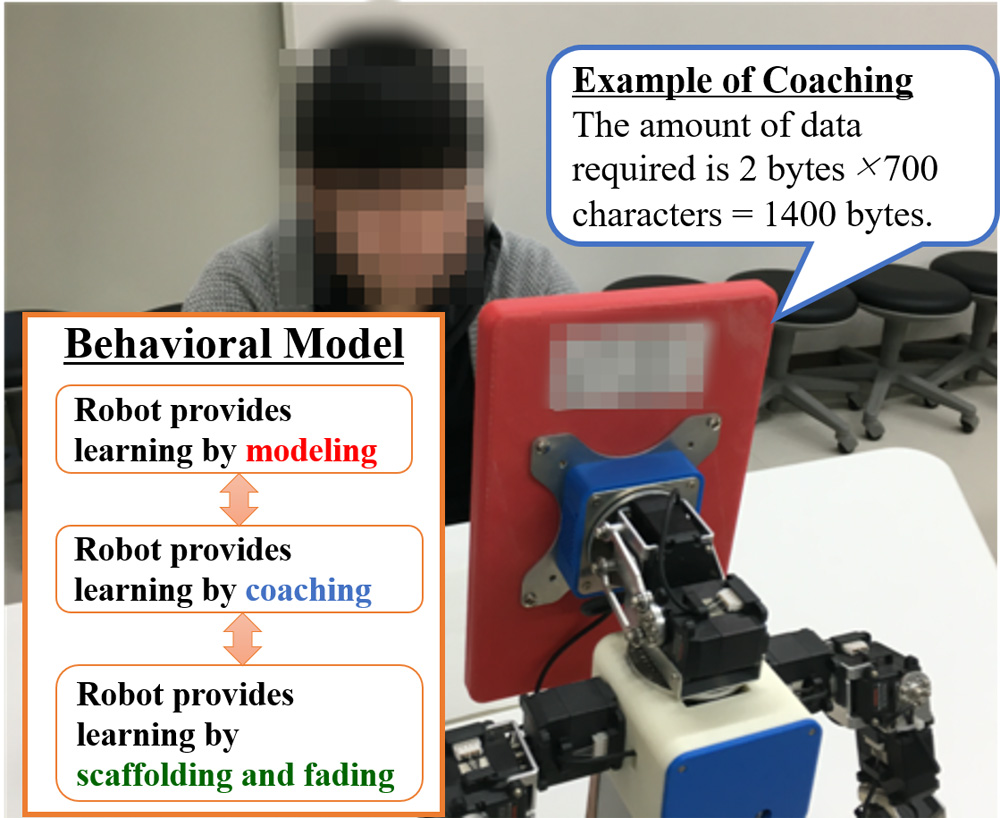

Educational support robots have been the focus of study in recent years. Studies have reported that robots providing educational support based on cognitive apprenticeship theory gave learners effective collaborative learning. However, those robots were remote-controlled, and no behavioral model has been constructed that lets a robot provide such support autonomously. In this paper, we therefore construct a behavioral model in which robots autonomously provide educational support based on cognitive apprenticeship theory. We then verify the learning effects of this model on university students through a comparative experiment against a conventional behavioral model that provides educational support in response to learner requests.

Overview of the behavioral model


This article is published under a Creative Commons Attribution-NoDerivatives 4.0 International License.
