Paper:

Development of MEMS Tactile Sensation Device for Haptic Robot

Junji Sone*, Yasuyoshi Matsumoto*, Yoji Yasuda*, Shoichi Hasegawa**, and Katsumi Yamada*

*Tokyo Polytechnic University

1583 Iiyama, Atsugi, Kanagawa 243-0297, Japan

**Precision and Intelligence Laboratory, Tokyo Institute of Technology

4259 Nagatsuta-cho, Midori-ku, Yokohama, Kanagawa 226-8503, Japan

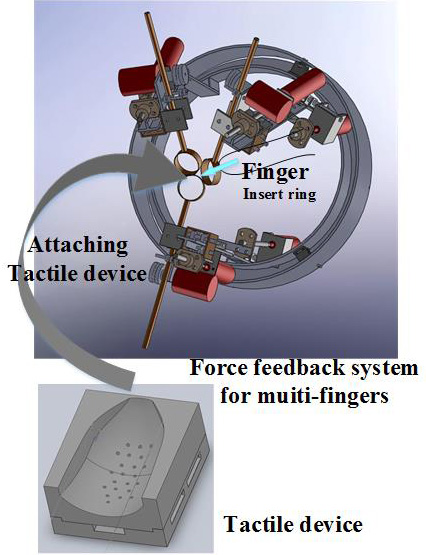

A tactile sensation device based on micro-electromechanical systems (MEMS) technology has been developed. The device is integrated with a haptic sensation robot, where it is used as the fingers. Because the actuator mechanism must be attached to the fingers, the tactile device has to be miniaturized, and we therefore used MEMS technology. The device is composed of an interface part fabricated by 3D printing, pins, and MEMS cantilever-type actuators, and it can stimulate the mechanoreceptors of the fingertips. Together, the device and robot can display not only high-resolution tactile images on the fingertips but also the repulsion force during finger operations such as tool holding and rotation. We have not yet achieved the final device because of fabrication problems. In this paper, we explain the details of the device, the progress of its development, and the results of trials on the prototype.

It represents force and tactile information.

This article is published under a Creative Commons Attribution-NoDerivatives 4.0 International License.