Paper:

Vehicle Localization Based on the Detection of Line Segments from Multi-Camera Images

Kosuke Hara* and Hideo Saito**

*Research & Development Group, Denso IT Laboratory

CROSSTOWER 28F, 2-15-1 Shibuya, Shibuya-ku, Tokyo 150-0002, Japan

**Department of Information and Computer Science, Keio University

3-14-1 Hiyoshi, Kohoku-ku, Yokohama, Kanagawa 223-8522, Japan

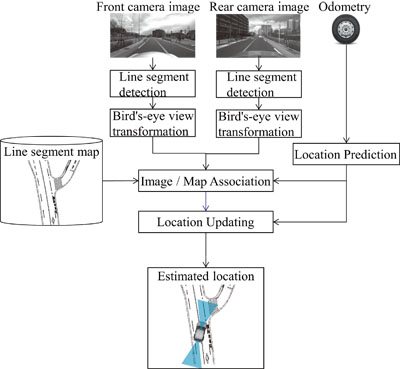

The localization system

This article is published under a Creative Commons Attribution-NoDerivatives 4.0 International License.
