Paper:

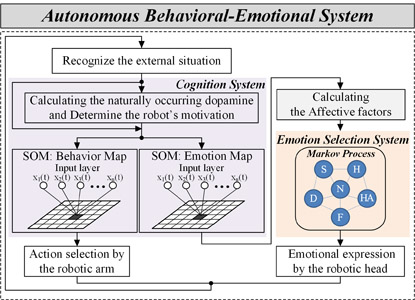

Emotional Model for Robotic System Using a Self-Organizing Map Combined with Markovian Model

Wisanu Jitviriya, Masato Koike, and Eiji Hayashi

Graduate School of Computer Science and Systems Engineering, Kyushu Institute of Technology

680-4 Kawazu, Iizuka, Fukuoka 820-8502, Japan

Behavioral/emotional expression system

- [1] S. Kiesler and P. Hinds, “Introduction to this special issue on human-robot interaction,” J. of Human-Computer Interaction, Vol.19, No.1, pp. 1-8, 2004.

- [2] C. Breazeal, “Function Meets Style: Insights From Emotion Theory Applied to HRI,” IEEE Trans. on Systems, Man, and Cybernetics, Vol.34, No.2, pp. 187-194, 2004.

- [3] T. Fong, I. Nourbakhsh, and K. Dautenhahn, “A Survey of Socially Interactive Robots,” J. of Robotics and Autonomous Systems, Vol.42, pp. 143-166, 2003.

- [4] Y. Mori, K. Ota, and T. Nakamura, “Robot Motion Algorithm Based on Interaction with Human,” J. of Robotics and Mechatronics, Vol.14, No.5, pp. 462-470, 2002.

- [5] J. McCarthy, “Making robots conscious of their mental states,” Machine intelligence, Oxford University Press, pp. 3-17, 1996.

- [6] N. Goto and E. Hayashi, “Design of Robotic Behavior that imitates animal consciousness,” J. of Artificial Life and Robotics, Vol.12, pp. 97-101, 2008.

- [7] E. Hayashi, T. Yamasaki, and K. Kuroki, “Autonomous Behavior System Combining Motivation with Consciousness Using Dopamine,” Proc. of 8th Int. IEEE Symposium on Computational Intelligence in Robotics and Automation (CIRA), pp. 126-131, 2009.

- [8] J. Moren and C. Balkenius, “A Computational Model of Emotional Learning in the Amygdala,” J. of Cybernetics and Systems, Vol.32, No.6, pp. 611-636, 2001.

- [9] M. Obayashi, T. Takuno, T. Kuramoto, and K. Kobayashi, “An Emotional Model Embedded Reinforcement Learning System,” IEEE Int. Conf. on System, Man, and Cybernetics (SMC), pp. 1058-1063, 2012.

- [10] A. Ortony, G. L. Clore, and A. Collins, “The Cognitive Structure of Emotions,” Cambridge University Press, 1988.

- [11] Q. Xu, L. Zhou, and F. Jiao, “Design for User Experience: an Affective-Cognitive Modeling Perspective,” IEEE Int. Conf. on Management of Innovation and Technology (ICMIT), pp. 1019-1024, 2010.

- [12] N. Kubota and S. Wakisaka, “An Emotional Model Based on Location-Dependent Memory for Partner Robots,” J. of Robotics and Mechatronics, Vol.21, No.3, pp. 317-323, 2009.

- [13] H. Lövheim, “A new three-dimensional model for emotions and monoamine neurotransmitters,” J. of Medical Hypotheses, Vol.78, pp. 341-348, 2012.

- [14] R. Plutchik, “Emotion: Theory, research, and experience,” Theories of emotion Vol.1, Academic, 1980.

- [15] S. C. Banik, “A Computational Model of Emotion and its Application to a Multiagent Robotic System,” Thesis, Department of Advanced Systems Control Engineering, Saga University, Japan, 2009.

- [16] Y. Yamazaki, F. Dong, Y. Uehara, Y. Hatakeyama, H. Nobuhara, Y. Takama, and K. Hirota, “Mentality Expression in Affinity Pleasure-Arousal Space using Ocular and Eyelid Motion of Eye Robot,” Proc. of 3rd Int. Conf. on Soft Computing and Intelligent Systems and 7th Int. Symposium on Advanced Intelligent Systems (SCIS&ISIS), pp. 422-425, 2006.

- [17] H. Kimura, “A trial to analyze the effect of an atypical anti-psychotic medicine, risperidone on the release of dopamine in the central nervous system,” J. of Aichi Medical University Association, Vol.33, No.1, pp. 21-27, 2005.

- [18] T. Kohonen, “Self-organized formation of topologically correct feature map,” J. of Biological Cybernetics, Vol.43, pp. 56-69, 1982.

- [19] T. Kohonen, “Adaptive, associative, and self-organizing functions in neural computing,” J. of Applied Optics, Vol.26, pp. 4910-4918, 1987.

- [20] M. Herrmann, “Self-Organizing Feature Map with Self-Organizing Neighborhood Widths,” Proc. of IEEE Int. Conf. on Neural Networks, Vol.6, 1995.

- [21] A. Flexer, “Limitations of self-organizing maps for vector quantization and multidimensional scaling,” Technical Report oefai-tr-96-23, The Austrian Research Institute for Artificial Intelligence, 1997.

- [22] J. A. Russell, “A circumplex model of affect,” J. of Personality and Social Psychology, Vol.39, pp. 1167-1178, 1980.

- [23] K. Kolji and B. Martin, “Towards an emotion core based on a Hidden Markov Model,” Proc. of 13th IEEE Int. Workshop on Robot and Human Interactive Communication, pp. 119-124, 2004.

- [24] K. Inoue, T. Arai, and J. Ota, “Behavior Acquisition in Partially Observable Environments by Autonomous Segmentation of the Observation Space,” J. of Robotics and Mechatronics, Vol.27, No.3, pp. 317-323, 2015.

- [25] A. Mehrabian, “Framework for a comprehensive description and measurement of emotional states,” J. of Genetic, Social, and General Psychology Monographs, Vol.121, No.3, pp. 339-361, 1995.

- [26] M. S. El-Nasr, T. Ioerger, and J. Yen, “FLAME: fuzzy logic adaptive model of emotions,” J. of Autonomous Agents and Multi-Agent Systems, Vol.3, No.3, pp. 219-257, 2000.

- [27] H. Ahn, P. Kim, J. Choi, et al., “Emotion head robot with behavior decision model and face recognition,” Proc. of Int. Conf. on Control, Automation and Systems, pp. 2719-2724, 2007.

This article is published under a Creative Commons Attribution-NoDerivatives 4.0 International License.
