Research Paper:

Reimagining Leadership Assessment: A Comparative Study of Human and AI Scoring Using ChatGPT in School Principal Training

Mingchuan Hsieh†

National Academy for Educational Research

No.2 SanShu Rd., Sanxia District, New Taipei 237201, Taiwan

†Corresponding author

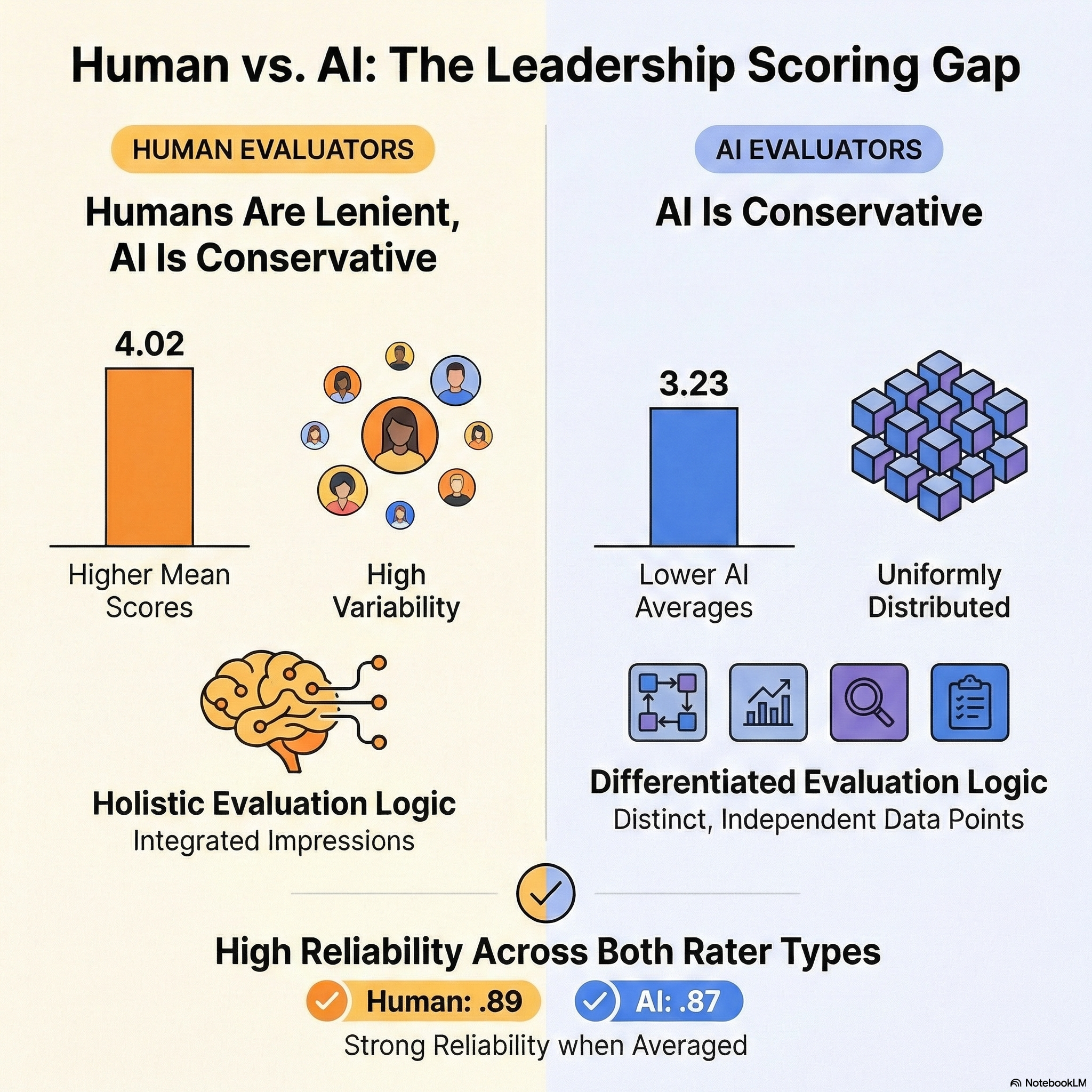

In this study, the application of ChatGPT in the evaluation of pre-service principals within Taiwan’s national school leadership training program was examined. As generative AI technologies become increasingly embedded in education, understanding their role in professional assessment is essential. This research explored whether AI-generated scores align with human ratings, and whether ChatGPT can serve as a reliable tool for providing formative feedback. A total of 131 pre-service principals submitted School Improvement Plans, which were scored by both human evaluators (mentor principals and external scholars) and ChatGPT. The scoring rubric included seven dimensions of leadership competency, with both parties rating assignments on a five-point scale. Descriptive statistics, Pearson correlations, and intraclass correlation coefficients (ICCs) were used to compare scoring patterns, consistency, and reliability. Findings show that human raters consistently assigned higher and more variable scores than ChatGPT, which produced more conservative and evenly distributed ratings. Although both AI and human scores showed internal construct coherence, correlation patterns suggested that ChatGPT applied a more differentiated, data-driven evaluation logic. ICC analysis revealed moderate single-rater reliability and high average-rater reliability for both human and AI assessments. The results indicate that ChatGPT holds potential as a supplementary assessment assistant, particularly in delivering structured, rubric-based feedback. However, discrepancies in scoring tendencies and contextual interpretation underscore the need for human oversight. The findings of this study offer implications for the thoughtful integration of AI in leadership development and broader educational assessment systems.

Human vs. AI scoring comparison

1. Introduction

With the rapid advancement of generative artificial intelligence, tools such as ChatGPT have been increasingly integrated into educational settings 1,2. In assessment contexts, ChatGPT demonstrates the ability to generate personalized feedback and standardized scores in a short amount of time, potentially improving assessment efficiency and scalability 3,4. However, empirical concerns remain regarding the consistency, validity, and comparability of AI-generated scores when benchmarked against human raters.

Traditional human scoring is subject to rater bias, contextual interpretation, and variations in individual judgment 5. Although standardized, AI scoring is limited by its ability to capture nuances and contextual appropriateness. Prior studies suggest that AI scoring systems may yield different average scores than human raters, potentially leading to the under- or overestimation of certain performance dimensions 6.

Moreover, the correlation between AI and human scoring across various assessment constructs is poorly understood. Inter-rater reliability indicators such as the intraclass correlation coefficient (ICC) and Pearson’s correlation provide useful metrics for examining scoring agreement and consistency across rater types 7,8.

To explore the role of artificial intelligence in educational assessment, the differences and consistencies between AI-generated and human-rated scores were investigated in this study in the context of a school principal leadership training program. First, the question of whether there are significant differences in the average scores between human raters and ChatGPT-generated evaluations was examined across various assessment dimensions. The aim of this comparison was to determine whether AI tends to score more strictly or leniently than human evaluators. Second, the degree of correlation between AI and human scores was analyzed across multiple constructs, such as systems thinking, strategic planning, and reflective practice, to evaluate the alignment of their scoring patterns. Finally, the reliability of both scoring sources was assessed by calculating inter-rater reliability indices, particularly the ICC, to determine the internal consistency of the AI and human ratings. Together, the goal of these research questions was to provide a comprehensive understanding of the comparability, consistency, and scoring quality of AI in contrast to human evaluators in professional education contexts.

2. Literature Review

Recent studies have demonstrated the growing capabilities of generative AI tools, such as ChatGPT, in professional assessments across fields such as law, medicine, and business 9,2. ChatGPT performance on four law-school examinations was evaluated at the University of Minnesota 4. The AI completed 95 multiple-choice questions and 12 essay prompts, achieving an average performance equivalent to that of a C+ student, and successfully passed all four tests. Although not exemplary, the scores demonstrate ChatGPT’s capacity to meet minimum academic expectations in rigorous domains 6.

The emergence of ChatGPT also signals a new era for automated writing evaluation (AWE) systems, which have long been used to assess student writing performance, especially in high-stakes contexts such as college admissions. Traditional AWE systems, such as e-rater\(^{®}\), Intelligent Essay Assessor™, Intellimetric\(^{®}\), PEG, and Cambium’s scoring engine 10, rely on rule-based and statistical natural language processing (NLP) techniques to extract writing features. These factors include grammar, spelling, discourse structure, coherence, vocabulary usage, and sentence variety.

With advances in large language models (LLMs), such as GPT-3 and GPT-4, AWE systems have significantly improved in their ability to analyze and score texts 11. LLMs not only enhance the technical robustness of automated scoring, but also increase accessibility and usability owing to their intuitive interfaces. However, despite their potential, further research is needed to examine the reliability, validity, and feedback quality of LLM-based scoring for writing assessments 7,12.

Understanding these scoring dynamics is especially important in high-stakes or developmental assessment contexts 13, such as school principal leadership training programs, where formative and summative evaluations affect professional development and readiness.

3. Methodology

3.1. Participants

The participants in this study were 131 pre-service principals, including 59 females and 72 males. In terms of educational background, the majority held a master’s degree (\(n =115\)), followed by doctoral degrees (\(n =11\)) and bachelor’s degrees (\(n =5\)). The average age of the participants was 47 years and the average length of service was 21 years.

The training curriculum was structured around a series of developmental themes. The assessment covered four major areas: written reports, oral presentations, daily performance, and a final written examination. Although most counties consider the training program a form of pre-service development, where grades are used primarily for reference, some counties do factor these scores into the selection order for principal appointments. As a result, pre-service principals generally regard the assessment outcomes as significant.

Eighteen raters were involved in evaluating the school leadership projects, including 12 internal evaluators who served as mentor principals and six external evaluators comprising academic experts. The assessment adopted a balanced incomplete block (BIB) design to ensure a fair and efficient distribution of scoring tasks among the raters.

Each assignment was independently assessed by at least two mentoring principals and at least one external reviewer to ensure objectivity and scoring consistency. However, owing to the large number of pre-service principals, it was difficult for assessors to provide written feedback on each assignment. Consequently, most evaluators assigned scores without any accompanying comments or suggestions, which resulted in limited guidance for improvement.

3.2. Human Scoring Process

During the pre-training phase, an orientation session is conducted with the pre-service principals to introduce the course structure and assessment components. As the training progresses to school-based applications, each trainee is required to submit a School Improvement Plan based on his or her original school placement. Submissions are uploaded through an online principal assessment platform that includes built-in scoring functionality. This system allows the evaluators to read, score, and provide feedback on specific criteria. All assignment records are stored in a system for process-based reviews and auditing.

The assessment process consists of four phases:

-

Phases 1–2: Mentoring sessions during which pre-service principals begin drafting improvement plans under the guidance of their mentor principals.

-

Phase 3: On-site visit to the trainee’s original school for field observation and interaction with the school principal and staff.

-

Phase 4: Additional mentoring sessions to further develop the plan based on mentor feedback and school observations.

Table 1. Scoring rubric for the School Improvement Plan.

The assessment rubric includes seven dimensions (Table 1) that evaluate core leadership competencies such as systems thinking, vision formulation, strategic planning, innovative leadership, and self-awareness. Each dimension is scored on a five-point scale:

-

Scores 4–5 indicate proficiency (advanced or innovative performance).

-

Scores 2–3 indicate basic competence (generally aligned with expectations).

-

Score 1 indicates needed improvement (lacking clarity or coherence).

The rubric was designed on the basis of criterion-referenced assessment principles, meaning that performance is evaluated against predetermined standards rather than relative to peer performance. The goal is to understand each trainee’s competency development across the targeted leadership domains.

3.3. ChatGPT Implementation Process

In this study, the ChatGPT API was integrated into the primary digital system developed for the project. The main purpose was to evaluate participants’ School Improvement Plans and compare AI-generated assessments with human ratings based on the same scoring rubric.

All past assignments were double-rated by experienced principals and expert scholars. The final score was determined when two researchers reached a consensus on the evaluation. For each scoring dimension, 200 previous reports were randomly selected as exemplars to train the ChatGPT model. To ensure independent judgment and prevent potential cross-article influence, each document was evaluated in a separate and newly initiated ChatGPT session. During the evaluation process, prompts were designed to include detailed scoring rubrics, replacing simple rating instructions with comprehensive descriptions of each performance level. ChatGPT was also required to provide justification for its ratings before assigning scores. This reasoning step has been shown to improve LLM performance when executing complex tasks 14.

3.4. AI-Based Scoring Procedure

ChatGPT was employed as an AI-assisted rater to evaluate School Improvement Plans submitted by pre-service principals. A structured prompt was used to fine-tune the model’s evaluative function, simulating the perspective of an experienced school principal with expertise in analyzing and assessing educational plans. The AI assessed each plan on the basis of seven predefined dimensions aligned with leadership and school management competencies.

For each dimension, the AI assigned a score ranging from 1 to 5, where 1 indicated poor performance and 5 indicated excellent performance. The scoring rubric emphasizes clarity, feasibility, specificity, and alignment with school development goals. In addition to the numeric scores, the AI-generated feedback included short rationale statements to justify the ratings based on the content of each submitted plan.

This AI-generated evaluation was then compared with scores assigned by human raters to examine inter-rater consistency, scoring discrepancies, and the potential of ChatGPT to serve as a reliable assessment assistant in professional development settings.

4. Results

4.1. Descriptive Statistics

Table 2. Descriptive statistics comparing human and AI raters.

Table 2 presents descriptive statistics of the seven leadership performance constructs obtained by comparing ratings from human raters and an AI-based scoring system. Across all constructs, human raters consistently assigned higher mean scores than the AI. For instance, in the Basic (BA) dimension, the human mean was 4.02, whereas the AI mean was notably lower at 3.23. Similar gaps were observed in Strategy (ST; human \(=3.92\); AI \(=2.97\)) and Reflection (RE; human \(=4.03\); AI \(=3.50\)), suggesting that human evaluators tended to be more generous or lenient than the AI system.

Standard deviations indicate that human scores were generally more variable, with values ranging from 0.74 to 0.81, compared to AI standard deviations, which ranged from 0.56 to 0.70. This result implies that the AI ratings were more narrowly distributed and may reflect a more conservative or uniform scoring pattern.

Distribution characteristics further differentiated the two rater types. Human scores consistently exhibited negative skewness (e.g., Reflection \(=-0.71\); Basic \(=-0.65\)), indicating that most ratings clustered at the higher end of the scale. In contrast, the AI ratings displayed less skew or even positive skewness (e.g., Vision [VI] \(=0.73\)), suggesting a more symmetric or lower-centered distribution. Additionally, human ratings tended to be more leptokurtic, with higher kurtosis values (e.g., Basic \(=1.19\); Improvement [IM] \(=0.75\)), denoting peaked distributions with fewer extreme responses. AI scores, on the other hand, were generally platykurtic or near-normal, with lower kurtosis values (e.g., Strategy \(=-0.72\); Basic \(=-0.94\)).

Overall, these results revealed distinct patterns between the human and AI scores. Human raters provided higher and more dispersed ratings, which were potentially influenced by subjective perceptions or contextual empathy. In contrast, AI-generated scores were more conservative, consistent, and centered, reflecting algorithmic precision but potentially reduced sensitivity to nuanced performance. These differences underscore the importance of considering both reliability and interpretability when integrating AI systems into educational and leadership assessment contexts.

4.2. Inter-Construct Correlations: Human vs. AI Ratings

Table 3 presents the Pearson correlation coefficients for the seven leadership constructs separately for the human raters and AI-generated scores. All reported correlations were statistically significant (\(p<.01\)), as indicated by double asterisks (\(^{**}\)).

Among human raters, moderate to strong positive correlations were observed across all constructs. Notably, Plan (PL) showed the strongest correlations with Strategy (\(r =.60\)), Improvement (\(r =.59\)), and Vision (\(r =.55\)), suggesting that these constructs were perceived as tightly interrelated within human evaluations. Similarly, Improvement and Reflection exhibited a strong association (\(r =.65\)), indicating that human raters often view these two dimensions as co-occurring in effective leadership planning.

Table 3. Inter-construct correlations.

By contrast, the AI-generated scores displayed different correlation structures. Although some relationships were similarly strong, such as Basic and Vision (\(r =.72\)) or Basic and Plan (\(r =.70\)), other construct pairs had notably weaker associations. For instance, Strategy correlated only modestly with Plan (\(r =.24\)) and Curriculum (CU; \(r =.22\)), indicating that AI may treat these constructs as more distinct or independent in its assessment logic. Similarly, the correlation between Improvement and Reflection was lower (\(r =.48\)) compared to that observed for human scores.

Overall, these findings suggest that, although AI and human ratings were generally aligned in structure, the AI scoring model exhibited more differentiated construct relationships, potentially reflecting its rule-based or data-driven parsing of content features. Conversely, human raters may apply a more holistic or integrative judgment, resulting in higher inter-construct coherence. This discrepancy highlights the importance of reviewing not only the mean scores but also the internal consistency patterns when comparing human and AI evaluative systems.

Table 4. Inter-rater reliability.

4.3. Inter-Rater Reliability Analysis

To evaluate the consistency of ratings across evaluators, ICCs were computed using a two-way mixed-effects model with a consistency definition. This method assumes that the subjects are random and the raters are fixed, and evaluates the extent to which raters assign consistent ratings across multiple targets, excluding between-measure variance.

For human raters, the ICC for single measures was .55 (95% CI \(=[.51,\, .59]\)), indicating a moderate level of agreement among individual raters. The ICC for the average measures, which represents the reliability of the aggregated score across raters, was .896, indicating excellent consistency. The \(F\)-test for the null hypothesis of no reliability was statistically significant, \(F(452, 2712)=9.65\), \(p<.001\), suggesting that the observed agreement among raters was not due to chance.

The AI rater exhibited a single-measure ICC of .49 (95% CI \(=[.42,\, .57]\)), indicating moderate reliability at the individual level. The average-measures ICC was .87, showing strong interrater reliability when the ratings were averaged. The corresponding \(F\)-test result was also significant: \(F(130, 780)=7.93\), \(p<.001\) (Table 4).

These results confirm that, although human raters may show moderate variability in scoring, the overall scoring system, particularly when using aggregated ratings, demonstrated high reliability. Using multiple raters per case strengthens the dependability of the evaluation process and ensures robust assessment outcomes.

5. Conclusion

The aim of this study was to evaluate scoring consistency, differences, and interconstruct relationships between human and AI (ChatGPT-based) assessments in the context of school principal leadership training. The results address three core research questions and inform several implications for educational practices and AI-assisted evaluations.

Research question 1: Are there significant differences between AI and human ratings?

The study found consistent differences between the AI-generated- and human-assigned scores across all evaluation dimensions. The human raters tended to assign higher and more variable scores, suggesting a lenient and context-sensitive approach. In contrast, the AI scores were more conservative and narrowly distributed, reflecting a standardized and procedural scoring pattern. These differences highlight fundamental distinctions in scoring criteria applications and underscore the importance of aligning expectations when integrating AI into evaluative settings.

Research question 2: What are the correlations between AI and human scores across different dimensions?

Analysis of inter-construct correlations revealed that human raters demonstrated stronger and more cohesive associations among related dimensions, indicating a holistic and integrated evaluation approach. In contrast, the AI-generated scores showed more differentiated relationships, treating each dimension more independently. This result suggests that AI applies segmented, criterion-based logic, whereas human raters rely on overall impressions or conceptual integration in their evaluations.

Research question 3: How reliable are AI and human ratings?

Both the AI and human ratings demonstrated moderate to high reliability, particularly when the average scores from multiple raters were considered. The ICCs confirmed that the aggregated ratings from both sources yielded strong internal consistency. This result supports the potential of AI as a dependable supplementary rater in large-scale assessment contexts, particularly when paired with human oversight to ensure fairness and contextual appropriateness.

As artificial intelligence becomes increasingly embedded in educational assessment, understanding its practical role and impact on evaluative processes becomes critical. The findings of this study highlight both the potential and limitations of ChatGPT as an AI-assisted scoring tool in professional leadership training. These findings contribute to a broader discourse on how AI can complement human judgment while raising important questions about reliability, fairness, and feedback quality. The following implications offer concrete directions for educational institutions and policymakers as they consider integrating AI into formative assessment and leadership development systems.

-

1.

AI as a supplement, not a substitute for human judgment:

Although ChatGPT demonstrated moderate-to-high scoring consistency and provided structured feedback, it lacked the contextual sensitivity often observed in human raters. Educational leaders and training institutions should treat AI as a supplementary tool to enhance efficiency and standardization but still require oversight by experienced evaluators. Maintaining human involvement in high-stakes or nuanced assessments ensures that contextual relevance, emotional tone, and professional insight remain a part of the evaluation process.

-

2.

Reforming feedback systems with AI support:

Given the AI model’s ability to generate immediate criterion-referenced feedback across multiple dimensions, its integration could help address the current challenges of limited or overly generic peer and mentor feedback. Institutions may consider using AI-generated formative comments as a baseline for stimulating deeper mentor-mentee dialogue and reflective learning. This approach not only enhances feedback quality, but also supports more equitable learning opportunities by ensuring that all participants receive timely and consistent evaluative input.

Acknowledgments

This study was supported by the Ministry of Science and Technology (MOST 113-2410-H-656-001-MY).

- [1] T. K. F. Chiu, Q. Xia, X. Zhou, C. S. Chai, and M. Cheng, “Systematic literature review on opportunities, challenges, and future research recommendations of artificial intelligence in education,” Computers and Education: Artificial Intelligence, Vol.4, Article No.100118, 2023. https://doi.org/10.1016/j.caeai.2022.100118

- [2] N. Göksel and A. Bozkurt, “Artificial Intelligence in Education: Current Insights and Future Perspectives,” S. Sisman-Ugur and G. Kurubacak (Eds.), “Handbook of Research on Learning in the Age of Transhumanism,” pp. 224-236, IGI Global, 2019.

- [3] E. Sood, “Artificial Intelligence in Education – Opportunities, Challenges & Legal Implications,” London J. of Research In Management & Business, Vol.25, No.8, pp. 17-26, 2025.

- [4] M. Ekizoğlu and A. N. Demir, “The role of AI assisted writing feedback in developing secondary students writing skills,” Discov. Educ., Vol.4, Article No.454, 2025. https://doi.org/10.1007/s44217-025-00919-3

- [5] S. K. Green and R. L. Johnson, “Assessment is Essential,” McGraw-Hill Education, 2009.

- [6] J. H. Choi, K. E. Hickman, A. Monahan, and D. Schwarcz, “ChatGPT goes to law school,” J. of Legal Education, Vol.71, No.3, pp. 387-400, 2023. https://doi.org/10.2139/ssrn.4335905

- [7] V. de Wilde and O. de Clercq, “Challenges and opportunities of automated essay scoring for low-proficient L2 English writers,” Assessing Writing, Vol.66, Article No.100982, 2025. https://doi.org/10.1016/j.asw.2025.100982

- [8] Z. F. Hu, L. Lin, Y. H. Wang, and J. W. Li, “The Integration of Classical Testing Theory and Item Response Theory,” Psychology, Vol.12, pp. 1397-1409, 2021. https://doi.org/10.4236/psych.2021.129088

- [9] M. Dowling and B. Lucey, “ChatGPT for (finance) research: The Bananarama conjecture,” Finance Research Letters, Vol.53, Article No.103662, 2023. https://doi.org/10.1016/j.frl.2023.103662

- [10] M. D. Shermis and J. Burstein (Eds.), “Handbook of Automated Essay Evaluation: Current Applications and New Directions,” Routledge, 2013.

- [11] T. B. Brown, B. Mann, N. Ryder, M. Subbiah, J. Kaplan, P. Dhariwal et al., “Language models are few-shot learners,” Proc. of the 34th Int. Conf. on Neural Information Processing Systems, pp. 1877-1901, 2020.

- [12] O. Zawacki-Richter, V. I. Marín, M. Bond, and F. Gouverneur, “Systematic review of research on artificial intelligence applications in higher education: Where are the educators?,” Int. J. of Educational Technology in Higher Education, Vol.16, No.1, Article No.39, 2019. https://doi.org/10.1186/s41239-019-0171-0

- [13] H. Park and D. Ahn, “The Promise and Peril of ChatGPT in Higher Education: Opportunities, Challenges, and Design Implications,” Proc. of the 2024 CHI Conf. on Human Factors in Computing Systems, Article No.271, 2024. https://doi.org/10.1145/3613904.3642785

- [14] J. Wei, X. Wang, D. Schuurmans, M. Bosma, B. Ichter, F. Xia et al., “Chain-of-thought prompting elicits reasoning in large language models,” Proc. of the 36th Int. Conf. on Neural Information Processing Systems, pp. 24824-24837, 2022.

This article is published under a Creative Commons Attribution-NoDerivatives 4.0 Internationa License.

This article is published under a Creative Commons Attribution-NoDerivatives 4.0 Internationa License.