Research Paper:

Reimagining Leadership Assessment: A Comparative Study of Human and AI Scoring Using ChatGPT in School Principal Training

Mingchuan Hsieh†

National Academy for Educational Research

No.2 SanShu Rd., Sanxia District, New Taipei 237201, Taiwan

†Corresponding author

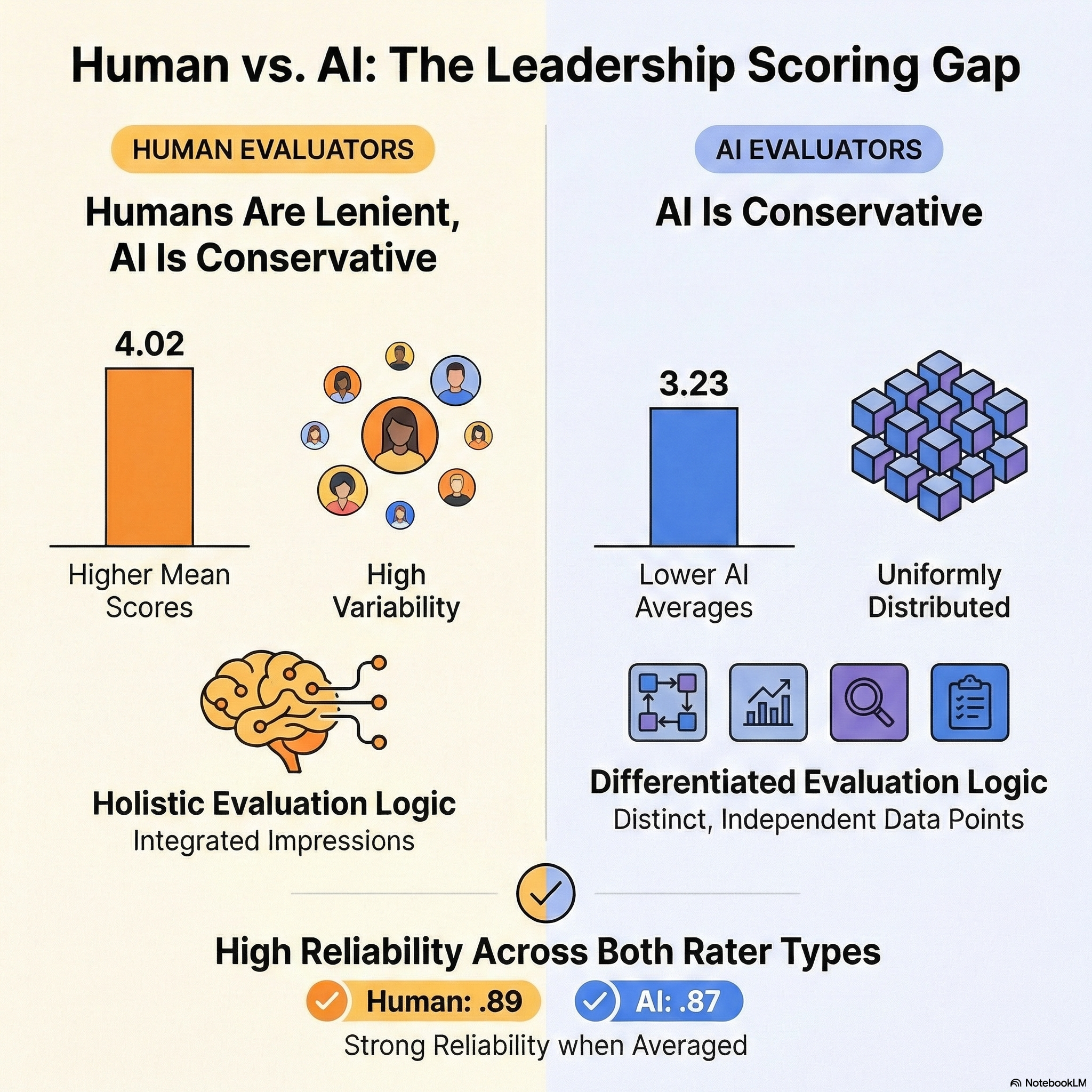

In this study, the application of ChatGPT in the evaluation of pre-service principals within Taiwan’s national school leadership training program was examined. As generative AI technologies become increasingly embedded in education, understanding their role in professional assessment is essential. This research explored whether AI-generated scores align with human ratings, and whether ChatGPT can serve as a reliable tool for providing formative feedback. A total of 131 pre-service principals submitted School Improvement Plans, which were scored by both human evaluators (mentor principals and external scholars) and ChatGPT. The scoring rubric included seven dimensions of leadership competency, with both parties rating assignments on a five-point scale. Descriptive statistics, Pearson correlations, and intraclass correlation coefficients (ICCs) were used to compare scoring patterns, consistency, and reliability. Findings show that human raters consistently assigned higher and more variable scores than ChatGPT, which produced more conservative and evenly distributed ratings. Although both AI and human scores showed internal construct coherence, correlation patterns suggested that ChatGPT applied a more differentiated, data-driven evaluation logic. ICC analysis revealed moderate single-rater reliability and high average-rater reliability for both human and AI assessments. The results indicate that ChatGPT holds potential as a supplementary assessment assistant, particularly in delivering structured, rubric-based feedback. However, discrepancies in scoring tendencies and contextual interpretation underscore the need for human oversight. The findings of this study offer implications for the thoughtful integration of AI in leadership development and broader educational assessment systems.

Human vs. AI scoring comparison

- [1] T. K. F. Chiu, Q. Xia, X. Zhou, C. S. Chai, and M. Cheng, “Systematic literature review on opportunities, challenges, and future research recommendations of artificial intelligence in education,” Computers and Education: Artificial Intelligence, Vol.4, Article No.100118, 2023. https://doi.org/10.1016/j.caeai.2022.100118

- [2] N. Göksel and A. Bozkurt, “Artificial Intelligence in Education: Current Insights and Future Perspectives,” S. Sisman-Ugur and G. Kurubacak (Eds.), “Handbook of Research on Learning in the Age of Transhumanism,” pp. 224-236, IGI Global, 2019.

- [3] E. Sood, “Artificial Intelligence in Education – Opportunities, Challenges & Legal Implications,” London J. of Research In Management & Business, Vol.25, No.8, pp. 17-26, 2025.

- [4] M. Ekizoğlu and A. N. Demir, “The role of AI assisted writing feedback in developing secondary students writing skills,” Discov. Educ., Vol.4, Article No.454, 2025. https://doi.org/10.1007/s44217-025-00919-3

- [5] S. K. Green and R. L. Johnson, “Assessment is Essential,” McGraw-Hill Education, 2009.

- [6] J. H. Choi, K. E. Hickman, A. Monahan, and D. Schwarcz, “ChatGPT goes to law school,” J. of Legal Education, Vol.71, No.3, pp. 387-400, 2023. https://doi.org/10.2139/ssrn.4335905

- [7] V. de Wilde and O. de Clercq, “Challenges and opportunities of automated essay scoring for low-proficient L2 English writers,” Assessing Writing, Vol.66, Article No.100982, 2025. https://doi.org/10.1016/j.asw.2025.100982

- [8] Z. F. Hu, L. Lin, Y. H. Wang, and J. W. Li, “The Integration of Classical Testing Theory and Item Response Theory,” Psychology, Vol.12, pp. 1397-1409, 2021. https://doi.org/10.4236/psych.2021.129088

- [9] M. Dowling and B. Lucey, “ChatGPT for (finance) research: The Bananarama conjecture,” Finance Research Letters, Vol.53, Article No.103662, 2023. https://doi.org/10.1016/j.frl.2023.103662

- [10] M. D. Shermis and J. Burstein (Eds.), “Handbook of Automated Essay Evaluation: Current Applications and New Directions,” Routledge, 2013.

- [11] T. B. Brown, B. Mann, N. Ryder, M. Subbiah, J. Kaplan, P. Dhariwal et al., “Language models are few-shot learners,” Proc. of the 34th Int. Conf. on Neural Information Processing Systems, pp. 1877-1901, 2020.

- [12] O. Zawacki-Richter, V. I. Marín, M. Bond, and F. Gouverneur, “Systematic review of research on artificial intelligence applications in higher education: Where are the educators?,” Int. J. of Educational Technology in Higher Education, Vol.16, No.1, Article No.39, 2019. https://doi.org/10.1186/s41239-019-0171-0

- [13] H. Park and D. Ahn, “The Promise and Peril of ChatGPT in Higher Education: Opportunities, Challenges, and Design Implications,” Proc. of the 2024 CHI Conf. on Human Factors in Computing Systems, Article No.271, 2024. https://doi.org/10.1145/3613904.3642785

- [14] J. Wei, X. Wang, D. Schuurmans, M. Bosma, B. Ichter, F. Xia et al., “Chain-of-thought prompting elicits reasoning in large language models,” Proc. of the 36th Int. Conf. on Neural Information Processing Systems, pp. 24824-24837, 2022.

This article is published under a Creative Commons Attribution-NoDerivatives 4.0 Internationa License.

This article is published under a Creative Commons Attribution-NoDerivatives 4.0 Internationa License.