Research Paper:

ASCNet: Attention Mechanism and Self-Calibration Convolution Fusion Network for X-ray Femoral Fracture Classification

Liyuan Zhang*, Yusi Liu*, Fei He*,†, Xiongfeng Tang**, and Zhengang Jiang*

*School of Computer Science and Technology, Changchun University of Science and Technology

No.7089 Weixing Road, Changchun, Jilin 130022, China

†Corresponding author

**Orthopaedic Medical Center, Jilin University Second Hospital

No.218 Ziqiang Street, Changchun, Jilin 130041, China

X-ray examinations are crucial for fracture diagnosis and treatment. However, some fractures do not present obvious imaging features in early X-rays, which can result in misdiagnosis. Therefore, this study proposes the ASCNet model for X-ray femoral fracture classification. The model adopts self-calibration convolution to obtain a more discriminative feature representation. This form of convolution enables each spatial location to adaptively encode context information from distant regions, allowing the model to capture characteristic information hidden in X-ray images. Additionally, ASCNet integrates the convolutional block attention module and the coordinate attention module to capture complementary information across the spatial and channel dimensions, so that the apparent fracture features in X-ray images are fully captured. Finally, the effectiveness of the proposed model is verified using the femoral fracture dataset. The final classification accuracy and AUC of ASCNet are 0.9286 and 0.9720, respectively. The experimental results demonstrate that ASCNet performs better than ResNet50 and SCNet50. Furthermore, the proposed model shows particular advantages in recognizing occult fractures in X-ray images.
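The two reported metrics, classification accuracy and AUC, can be illustrated with a minimal sketch. It assumes a binary setting (1 = fracture, 0 = normal) and a decision threshold of 0.5; neither is stated in the abstract. The function names and toy data are hypothetical and are not taken from the paper or its evaluation code.

```python
def accuracy(labels, scores, threshold=0.5):
    """Fraction of samples whose thresholded score matches the label."""
    predictions = [1 if s >= threshold else 0 for s in scores]
    correct = sum(1 for y, p in zip(labels, predictions) if y == p)
    return correct / len(labels)


def roc_auc(labels, scores):
    """AUC as the probability that a random positive sample is scored
    higher than a random negative sample (ties count as one half)."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUC needs at least one positive and one negative sample")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


# Hypothetical toy data (assumption): 1 = fracture, 0 = normal.
toy_labels = [1, 1, 1, 0, 0, 0]
toy_scores = [0.9, 0.8, 0.4, 0.6, 0.2, 0.1]

print(accuracy(toy_labels, toy_scores))  # 4 of 6 predictions are correct
print(roc_auc(toy_labels, toy_scores))   # 8 of 9 positive/negative pairs are ordered correctly
```

The pairwise formulation of AUC is quadratic in the number of samples, which is acceptable for a small test set. It also shows why AUC, unlike accuracy, does not depend on the chosen threshold.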

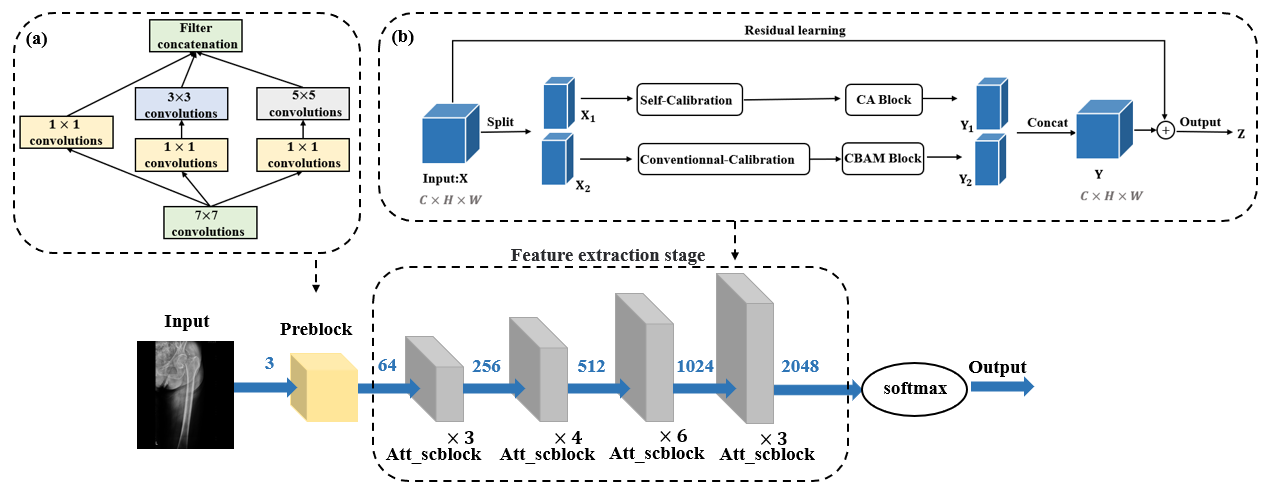

Detailed model of the overall network

This article is published under a Creative Commons Attribution-NoDerivatives 4.0 International License.
