Paper:

LSTM Network Classification of Dexterous Individual Finger Movements

Christopher Millar, Nazmul Siddique, and Emmett Kerr

Faculty of Computing, Engineering and Built Environment, Ulster University

Northland Road, Derry, County Londonderry BT48 7JL, UK

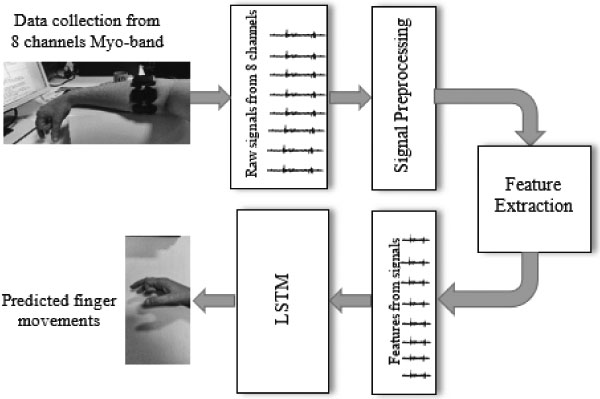

Electrical activity is generated in the forearm muscles during the muscular contractions that control dexterous movements of the human fingers and thumb. Using this electrical activity as the input for training a neural network to classify finger movements is not straightforward. Low-cost wearable sensors, e.g., the Myo Gesture Control Armband (www.bynorth.com), generally have a lower sampling rate than medical-grade EMG acquisition systems (e.g., 200 Hz vs 2000 Hz). This lower sampling rate, combined with the relatively low signal amplitude generated by individual finger movements, makes high classification accuracy difficult to achieve. In particular, it is challenging to distinguish between a large number of subtle finger movements with a single network. This research therefore uses two networks, which reduces the number of movements each network must classify and in turn improves classification. This is achieved by developing and training LSTM networks that focus on the extension and flexion signals of the fingers, together with a separate network trained on thumb movement signal data. Using this method, this research has increased the classification accuracy of individual finger movements to between 90% and 100%.
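To make the sampling-rate gap concrete, the sketch below computes how many samples per channel a fixed analysis window contains at 200 Hz and at 2000 Hz, along with the corresponding Nyquist frequency. The 250 ms window length is an assumption chosen only for illustration; the windowing used in the paper is not described in this section.

```python
# Compare what a fixed-length analysis window contains at the two sampling
# rates quoted above (Myo armband ~200 Hz vs. medical-grade EMG ~2000 Hz).
# WINDOW_S is a hypothetical window length, not a value taken from the paper.

def samples_per_window(fs_hz: float, window_s: float) -> int:
    """Number of samples captured per channel in a window of window_s seconds."""
    return round(fs_hz * window_s)


def nyquist_hz(fs_hz: float) -> float:
    """Highest frequency that can be represented without aliasing."""
    return fs_hz / 2.0


WINDOW_S = 0.25  # hypothetical 250 ms window

if __name__ == "__main__":
    for name, fs in [("Myo armband", 200.0), ("medical-grade EMG", 2000.0)]:
        print(f"{name}: {samples_per_window(fs, WINDOW_S)} samples/channel, "
              f"Nyquist = {nyquist_hz(fs):.0f} Hz")
    # Myo armband: 50 samples/channel, Nyquist = 100 Hz
    # medical-grade EMG: 500 samples/channel, Nyquist = 1000 Hz
```

At 200 Hz, signal content above 100 Hz must be filtered out or it aliases into lower frequencies, and each window carries one tenth as many samples per channel as at 2000 Hz.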

Overview of proposed system
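The two-network approach reduces the number of classes each network must separate. The sketch below shows that split at the data level only, assuming each sEMG window carries a single movement label. The label names, the 50 x 8 window shape (the Myo armband has 8 EMG channels), and the rule that all finger flexion/extension labels go to one network and all thumb labels to the other are illustrative assumptions. The LSTM models themselves are omitted.

```python
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple

# Hypothetical movement labels; the paper's actual label set is not listed here.
FINGER_LABELS = {
    "index_flexion", "index_extension",
    "middle_flexion", "middle_extension",
    "ring_flexion", "ring_extension",
    "little_flexion", "little_extension",
}
THUMB_LABELS = {"thumb_flexion", "thumb_extension"}

# One labelled sample: (sEMG window as time steps x channels, movement label).
Sample = Tuple[List[List[float]], str]


def partition_dataset(samples: Sequence[Sample]) -> Dict[str, List[Sample]]:
    """Route each labelled window to the training set of the network that owns its label."""
    subsets: Dict[str, List[Sample]] = defaultdict(list)
    for window, label in samples:
        if label in FINGER_LABELS:
            subsets["finger_net"].append((window, label))
        elif label in THUMB_LABELS:
            subsets["thumb_net"].append((window, label))
        else:
            raise ValueError(f"unknown movement label: {label!r}")
    return dict(subsets)


if __name__ == "__main__":
    window = [[0.0] * 8 for _ in range(50)]  # dummy 50 time steps x 8 channels
    data = [(window, "index_flexion"), (window, "thumb_extension"),
            (window, "ring_extension")]
    parts = partition_dataset(data)
    print({name: len(items) for name, items in parts.items()})
    # {'finger_net': 2, 'thumb_net': 1}
    print(len(FINGER_LABELS | THUMB_LABELS), "classes in one network vs",
          len(FINGER_LABELS), "and", len(THUMB_LABELS))
    # 10 classes in one network vs 8 and 2
```

Under these assumed labels, a single network would have to separate 10 classes, whereas the split leaves 8 finger classes and 2 thumb classes, each handled by a dedicated network.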

This article is published under a Creative Commons Attribution-NoDerivatives 4.0 International License.