Paper:

Variable Expanding Structure for Data Center Interconnection Networks

Jianfei Zhang†, Yuchen Jiang, and Yan Liu

School of Computer Science and Technology, Changchun University of Science and Technology

No.7089 Weixing Road, Changchun, Jilin 130022, China

†Corresponding author

Data centers are fundamental facilities that support high-performance computing and large-scale data processing. For a data center to expand and route well, its interconnection network must be designed carefully. Herein, we propose a novel structure for the interconnection network of data centers that can be expanded with a variable coefficient, which we call a variable expanding structure (VES). A VES is designed hierarchically and built iteratively. It can accommodate hundreds of thousands or even millions of servers with only a few layers. A VES also has a very short diameter, which implies better routing performance between every pair of servers. Furthermore, we design an address space for the servers and switches in a VES, and we propose a construction algorithm and a routing algorithm associated with that address space. Simulation results and analysis verify that the expanding rate of a VES depends on three factors: n, the number of ports on a switch; m, the expanding speed; and k, the number of layers. Of these, the factor m has the greatest effect. Hence, a VES can be designed with a suitable factor m to achieve the expected expanding rate and server scale based on the initial planning objectives.

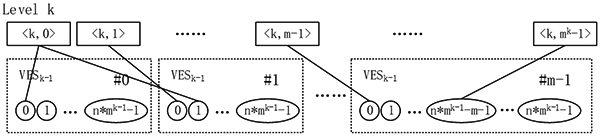

A $\mathrm{VES}_k$ structure that consists of $m$ $\mathrm{VES}_{k-1}$ structures

This article is published under a Creative Commons Attribution-NoDerivatives 4.0 International License.
