Paper:

Support System for Teachers in Communication with Educational Support Robot

Felix Jimenez* and Masayoshi Kanoh**

*School of Information Science and Technology, Aichi Prefectural University

1522-3 Ibaragabasama, Nagakute, Aichi 480-1198, Japan

**School of Engineering, Chukyo University

101-2 Yagoto Honmachi, Showa-ku, Nagoya, Aichi 466-8666, Japan

With the growth of robot technology, educational-support robots, which assist learning, are attracting increasing attention. For example, one robot supports students' school life, and another helps students learn English. Most studies have focused on robot behavior and on investigating its effects. Previous research has reported that a society in which robots and humans learn together will soon become a reality. If such a society is realized, children will learn at home alongside multiple unspecified robots. We believe that the perspectives of third parties, such as educators and guardians, are important for further improvements in this field. Thus, a system is needed through which third parties can direct robots to provide suitable learning support to learners. This paper proposes a system with which teachers can direct robots to provide suitable learning support to learners while simultaneously grasping the learners' learning conditions as they study alongside robots.

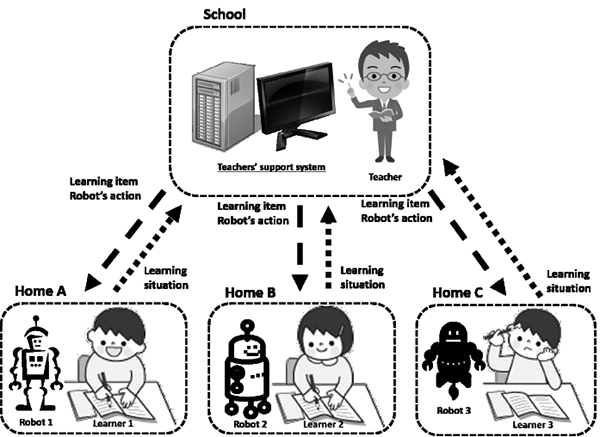

Overview of the communication environment


This article is published under a Creative Commons Attribution-NoDerivatives 4.0 International License.