Paper:

Global Thresholding for Scene Understanding Towards Autonomous Drone Navigation

Alvin Wai Chung Lee, Suet-Peng Yong, and Junzo Watada

Universiti Teknologi PETRONAS

32610 Seri Iskandar, Perak Darul Ridzuan, Malaysia

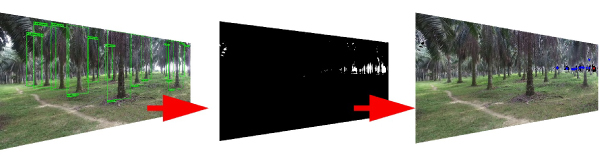

Unmanned aerial vehicles, more commonly known as drones, are aircraft that fly without a pilot onboard. For drones to fly through areas without GPS signals, scene understanding algorithms that assist autonomous navigation are useful. In this paper, various thresholding algorithms are evaluated for enhancing scene understanding in addition to object detection. Based on the results obtained, global thresholding with a Gaussian filter segments regions of interest in the scene effectively and incurs the lowest processing time among the evaluated methods.
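As a rough illustration of the kind of pipeline the abstract refers to, the following minimal sketch smooths a grayscale image with a Gaussian filter and then applies a single global threshold to the whole frame. It is not the authors' implementation. The use of Otsu's method to pick the threshold, the function names (`gaussian_blur`, `otsu_threshold`, `segment`), and the default `sigma` and `radius` values are all assumptions made for illustration only.

```python
import math


def gaussian_kernel(sigma, radius):
    """Return a normalized 1-D Gaussian kernel of length 2 * radius + 1."""
    weights = [math.exp(-(i * i) / (2.0 * sigma * sigma))
               for i in range(-radius, radius + 1)]
    total = sum(weights)
    return [w / total for w in weights]


def blur_1d(values, kernel):
    """Convolve a 1-D sequence with the kernel, clamping at the borders."""
    radius = len(kernel) // 2
    n = len(values)
    out = []
    for x in range(n):
        acc = 0.0
        for k, w in enumerate(kernel):
            idx = min(max(x + k - radius, 0), n - 1)
            acc += w * values[idx]
        out.append(acc)
    return out


def gaussian_blur(image, sigma=1.0, radius=2):
    """Separable Gaussian blur: filter the rows first, then the columns."""
    kernel = gaussian_kernel(sigma, radius)
    rows = [blur_1d(row, kernel) for row in image]
    cols = [blur_1d(list(col), kernel) for col in zip(*rows)]
    return [list(row) for row in zip(*cols)]


def otsu_threshold(image):
    """Pick one global threshold by maximizing between-class variance.

    Assumption: Otsu's method stands in for whichever threshold-selection
    rule the paper actually uses.
    """
    hist = [0] * 256
    for row in image:
        for v in row:
            hist[min(255, max(0, int(round(v))))] += 1
    total = sum(hist)
    sum_all = sum(i * h for i, h in enumerate(hist))
    best_t, best_var = 0, -1.0
    w0, sum0 = 0, 0
    for t in range(256):
        w0 += hist[t]          # pixels with intensity <= t
        sum0 += t * hist[t]
        w1 = total - w0        # pixels with intensity > t
        if w0 == 0:
            continue
        if w1 == 0:
            break
        mu0 = sum0 / w0
        mu1 = (sum_all - sum0) / w1
        between_var = w0 * w1 * (mu0 - mu1) ** 2
        if between_var > best_var:
            best_var, best_t = between_var, t
    return best_t


def segment(image, sigma=1.0, radius=2):
    """Blur, threshold globally, and return (binary mask, threshold)."""
    blurred = gaussian_blur(image, sigma, radius)
    t = otsu_threshold(blurred)
    mask = [[1 if v > t else 0 for v in row] for row in blurred]
    return mask, t
```

Calling `segment(image)` on a list-of-lists grayscale image with intensities in 0 to 255 returns a binary mask and the chosen threshold. Smoothing before thresholding suppresses pixel-level noise, so a single threshold separates regions more cleanly. The separable filter keeps the cost linear in the kernel radius per pixel.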

Global thresholding for drone navigation

This article is published under a Creative Commons Attribution-NoDerivatives 4.0 International License.
