Paper:

Orchard Navigation of UGVs Using UAV-LiDAR-Based Semantic Costmap

Soki Nishiwaki, Shuhei Yoshida, and Takanori Emaru

Faculty and Graduate School of Engineering, Hokkaido University

Kita 13, Nishi 8, Kita-ku, Sapporo, Hokkaido 060-8628, Japan

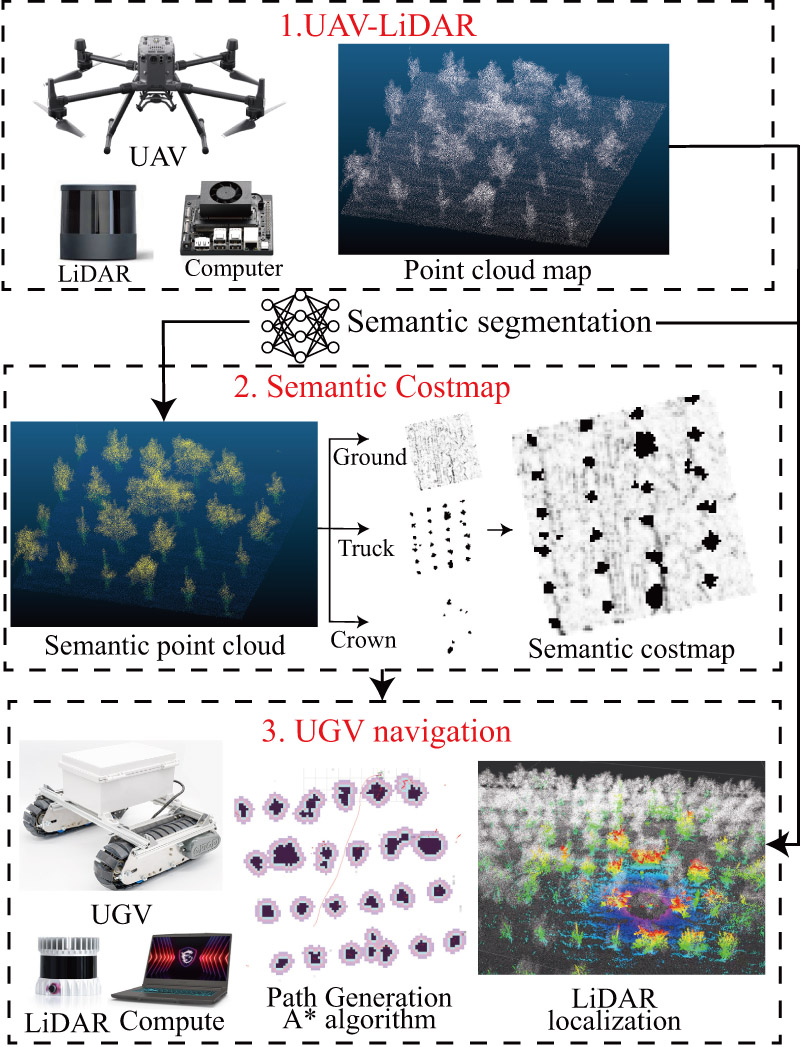

This study proposes a costmap generation method for orchard navigation that integrates both semantic and geometric information from point clouds acquired using unmanned aerial vehicle (UAV)-based light detection and ranging (LiDAR). Conventional approaches often rely either on aerial imagery, which cannot capture the internal structures of tree crowns, or on ground-based mapping, which is inefficient and typically limited to height-based costmaps. In this study, orchard-scale three-dimensional point clouds were acquired using UAV-LiDAR, and RandLA-Net was applied for semantic segmentation to classify tree trunks, crowns, and ground. Based on this classification, we constructed a semantic costmap that incorporates obstacle height and shape, and we integrated it into the Navigation2 framework for unmanned ground vehicle (UGV) navigation. In simulation experiments (20 trials), the proposed method achieved an 85% success rate, substantially higher than that of conventional methods (60%–65%). In field experiments (15 trials), it achieved a 93% success rate, demonstrating safe and efficient path planning even in densely canopied environments.
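The abstract describes turning a semantically segmented point cloud into a costmap that reflects both obstacle class and obstacle height. The minimal Python sketch below illustrates one way such a rasterization could work; it is not the authors' implementation. It assumes that points are already labeled as ground, trunk, or crown, that each point's z value is its height above the local ground, and that the UGV has a known vertical clearance. The label encoding, the `HIGH_CANOPY_COST` penalty, `GridSpec`, and `semantic_costmap` are hypothetical; only the cost values 0 (free), 254 (lethal), and 255 (unknown) follow the nav2_costmap_2d convention.

```python
from dataclasses import dataclass

# Hypothetical label encoding for the three classes named in the abstract.
GROUND, TRUNK, CROWN = 0, 1, 2

# Cost values following the nav2_costmap_2d convention.
FREE_SPACE, LETHAL_OBSTACLE, NO_INFORMATION = 0, 254, 255
HIGH_CANOPY_COST = 50  # hypothetical penalty for crowns the UGV can pass beneath


@dataclass
class GridSpec:
    origin_x: float    # world x of the grid's lower-left corner (m)
    origin_y: float    # world y of the grid's lower-left corner (m)
    resolution: float  # cell size (m)
    width: int         # number of cells along x
    height: int        # number of cells along y


def semantic_costmap(points, grid, clearance):
    """Rasterize labeled points (x, y, z_above_ground, label) into a 2D cost grid.

    Assumptions: z is height above local ground, and `clearance` is the
    UGV's vertical clearance in meters.
    """
    cost = [[NO_INFORMATION] * grid.width for _ in range(grid.height)]
    for x, y, z, label in points:
        col = int((x - grid.origin_x) // grid.resolution)
        row = int((y - grid.origin_y) // grid.resolution)
        if not (0 <= col < grid.width and 0 <= row < grid.height):
            continue  # point lies outside the map
        if label == TRUNK:
            c = LETHAL_OBSTACLE  # trunks always block the UGV
        elif label == CROWN:
            # Low branches block the vehicle; high canopy is passable but penalized.
            c = LETHAL_OBSTACLE if z < clearance else HIGH_CANOPY_COST
        else:
            c = FREE_SPACE  # ground
        current = cost[row][col]
        # Any observation replaces "unknown"; otherwise keep the most restrictive cost.
        cost[row][col] = c if current == NO_INFORMATION else max(current, c)
    return cost


# Usage: a 4 x 2 grid with 0.5 m cells and a UGV clearance of 1.5 m.
grid = GridSpec(origin_x=0.0, origin_y=0.0, resolution=0.5, width=4, height=2)
pts = [
    (0.2, 0.2, 0.0, GROUND),
    (0.7, 0.2, 0.5, TRUNK),
    (1.2, 0.2, 1.0, CROWN),   # below clearance, so lethal
    (1.7, 0.2, 2.5, CROWN),   # above clearance, so only penalized
    (1.7, 0.2, 0.0, GROUND),  # same cell; does not lower the cost
]
cm = semantic_costmap(pts, grid, clearance=1.5)
print(cm)  # → [[0, 254, 254, 50], [255, 255, 255, 255]]
```

Separating trunks from crowns is what lets high canopy remain traversable while low branches stay blocked, which a purely height-based costmap cannot express. A full Navigation2 setup would typically also inflate costs around lethal cells; that step is omitted here.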

Orchard navigation pipeline

This article is published under a Creative Commons Attribution-NoDerivatives 4.0 International License.