Research Paper:

Usage Behaviors of Large Language Models: Taking Undergraduate Students as an Example

Chien-Chou Chen and Hung-Yi Lu†

Department of Statistics and Information Science, Fu Jen Catholic University

No.510 Zhongzheng Rd., Xinzhuang Dist., New Taipei 242062, Taiwan

†Corresponding author

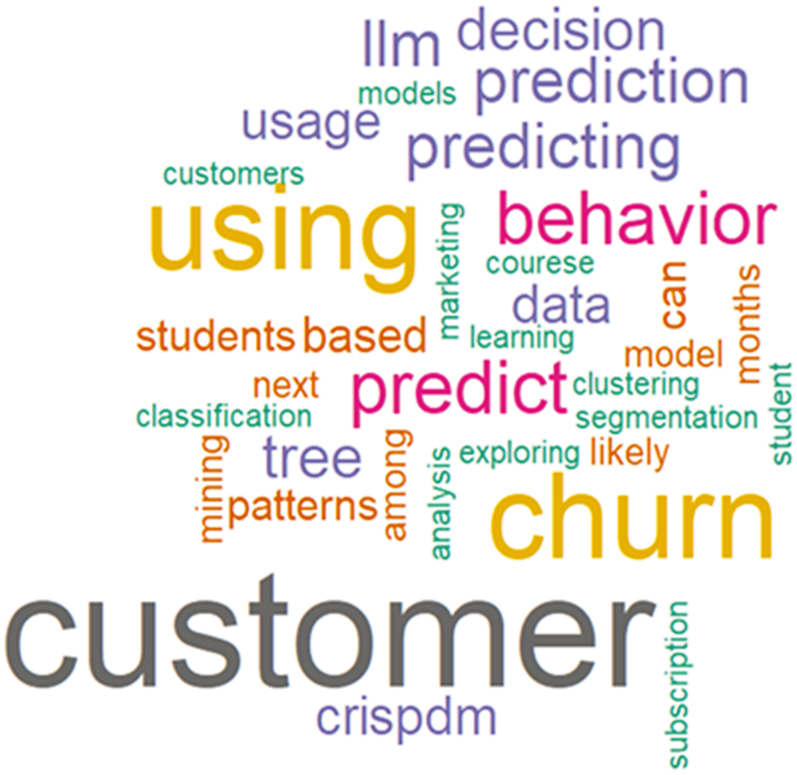

This study examines how undergraduate students (n=56) enrolled in a Management Information Systems course at a private university in Taiwan in Spring 2025 use large language models (LLMs). It investigates (1) the required courses in which students use LLMs, (2) the LLM tools they use, and (3) the paid LLM tools they use to assist in their studies. We also apply (1) association rule analysis to the courses in which students use LLMs and (2) content analysis, presented as a word cloud, to the titles of an assignment. The study provides firsthand information on undergraduate students' use of LLMs and, drawing on both quantitative and qualitative analyses, offers suggestions for academic settings.
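As a rough illustration of what association rule analysis over course usage involves, the sketch below computes support, confidence, and lift for single-course rules. It is a minimal example under stated assumptions: the course names, the responses, the thresholds, and the helper names (`support`, `rule_metrics`, `pairwise_rules`) are all hypothetical, and the brute-force pairwise search stands in for whatever mining algorithm the study actually used, which is not stated here.

```python
from itertools import combinations

# Hypothetical data: each set lists the courses in which one student
# reported using an LLM. Illustrative only; this is NOT the study's data.
transactions = [
    {"Calculus", "Statistics", "Programming"},
    {"Statistics", "Programming"},
    {"Calculus", "Statistics"},
    {"Programming"},
    {"Statistics", "Programming"},
]


def support(itemset, transactions):
    """Fraction of students whose course set contains every item in itemset."""
    itemset = set(itemset)
    return sum(itemset <= t for t in transactions) / len(transactions)


def rule_metrics(antecedent, consequent, transactions):
    """Return (support, confidence, lift) for the rule antecedent -> consequent."""
    s_both = support(set(antecedent) | set(consequent), transactions)
    s_ante = support(antecedent, transactions)
    s_cons = support(consequent, transactions)
    confidence = s_both / s_ante
    lift = confidence / s_cons
    return s_both, confidence, lift


def pairwise_rules(transactions, min_support=0.4, min_confidence=0.6):
    """Enumerate one-course -> one-course rules that meet both thresholds.

    The thresholds are assumed values chosen for this toy data set.
    """
    items = sorted(set().union(*transactions))
    rules = []
    for a, b in combinations(items, 2):
        for ante, cons in ((a, b), (b, a)):
            s, c, l = rule_metrics({ante}, {cons}, transactions)
            if s >= min_support and c >= min_confidence:
                rules.append((ante, cons, s, c, l))
    return rules


if __name__ == "__main__":
    for ante, cons, s, c, l in pairwise_rules(transactions):
        print(f"{ante} -> {cons}: support={s:.2f}, confidence={c:.2f}, lift={l:.2f}")
```

A lift above 1 suggests that LLM use in the antecedent course co-occurs with use in the consequent course more often than independence would predict; a lift below 1 suggests the opposite. For larger item sets, a dedicated algorithm such as Apriori would replace the exhaustive pairwise loop.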

Word cloud of proposal titles generated by large language models

This article is published under a Creative Commons Attribution-NoDerivatives 4.0 International License.
