Review:

Agricultural Robots in Open Field

Michihisa Iida

Graduate School of Agriculture, Kyoto University

Kitashirakawa Oiwake-cho, Sakyo-ku, Kyoto 606-8502, Japan

Agricultural robots that are operated in open fields have different mechanisms, sensing technologies, and functionalities depending on the crop and task. In this review, we present the classification of such agricultural robots and describe the mobile mechanism, power source, implementation, navigation, and sensing technology for each task. Finally, we summarize the review and discuss future perspectives.

Electric agricultural platform: Weed mower

1. Introduction

Owing to the increasing population of the world, agriculture is playing an important role in the production of safe and high-quality food. However, as the agricultural population is decreasing and farmers are aging, agricultural robots are beginning to be put to practical use in order to improve agricultural productivity. In addition, dangerous tasks such as spraying pesticides, which can cause exposure to agricultural chemicals, and harvesting fruits at height are being increasingly performed by robots.

Agricultural robots that are used in practical applications can be classified into two groups: agricultural vehicles, such as tractors and combine harvesters, that are equipped with autonomous driving technology; and robots equipped with arms and hands, which can replace human manual labor.

This review presents an overview of the core technologies being developed and studied for agricultural robots that perform tasks in open fields.

2. Classification of Agricultural Robots

We classified agricultural robots according to the tasks they perform in open fields. The classification results are listed in Table 1.

This classification categorizes agricultural work into nine tasks. The following are the six main features of each task: mobile platform, power source, implementation, navigation, sensing, and functionality.

2.1. Mobile Platform

A mobile platform is essential for robots to perform agricultural tasks in open fields. Mobile platforms are broadly classified as ground and aerial vehicles. Ground vehicles are classified into wheel-, track-, leg-, and hybrid wheel-leg-type vehicles. In this review, only multicopters were considered as aerial vehicles.

2.1.1. Wheel-Type Platform

Wheel-type platforms, such as tractors [a–c] and rice transplanters [d, e], are the most widely used platforms. Robot tractors have four-wheel drive and front- or rear-wheel steering mechanisms. Certain platforms have four-wheel drive and four-wheel steering mechanisms (Fig. 1) [f]. A four-wheel steering mechanism can change direction freely; however, it is complex. A typical front-wheel steering mechanism is the Ackermann type, and geometric or dynamic analysis is commonly performed by reducing the four-wheel vehicle model to a two-wheel (bicycle) model with one front and one rear wheel.
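The bicycle-model reduction mentioned above can be illustrated with a minimal kinematic sketch. It assumes no wheel slip and a reference point on the rear axle; the function names, parameter names, and the simple Euler integration are illustrative choices and are not taken from the cited works.

```python
import math

def bicycle_step(x, y, heading, speed, steer, wheelbase, dt):
    """Advance a kinematic bicycle model by one Euler time step.

    x, y      : rear-axle position [m]
    heading   : yaw angle [rad]
    speed     : forward speed [m/s]
    steer     : front-wheel steering angle [rad]
    wheelbase : distance between front and rear axles [m]
    dt        : time step [s]
    """
    x += speed * math.cos(heading) * dt
    y += speed * math.sin(heading) * dt
    # Yaw rate of a front-steered vehicle without slip (assumption).
    heading += speed / wheelbase * math.tan(steer) * dt
    return x, y, heading

def turning_radius(wheelbase, steer):
    """Steady-state turning radius of the rear axle for a fixed, nonzero steering angle."""
    return wheelbase / math.tan(steer)
```

For example, with a hypothetical wheelbase of 2.0 m, a steering angle whose tangent is 0.5 gives a rear-axle turning radius of about 4.0 m.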

Table 1. Classification of agricultural robots used in open fields.

2.1.2. Track-Type Platform

When a wheel-type platform travels after rain or on soft soil, it may slip or sink significantly, thereby making travel impossible. In the rice paddy fields of Japan, where harvesting work is performed on soft and wet fields, track-type platforms are used because transporting the harvested crops is necessary [1, g, h]. A track-type platform (Fig. 2) has a large contact area and can firmly transmit a propulsive force to the contact surface. Therefore, track-type platforms are used to ensure running performance on rough terrains. In addition, a track-type platform can spin turn, which allows it to turn with a small radius.

Fig. 1. Weed mower with four-wheel-drive and four-wheel-steering mechanisms.

Fig. 2. A full track-type robot combine [1].

However, track-type vehicles require a large driving force when turning, which can disturb the soil surface. Thus, semi-crawler vehicles that combine wheels and tracks also exist.
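The spin turn described above follows from the way a tracked vehicle steers by driving its two tracks at different speeds. The following is a minimal sketch under an idealized no-slip assumption, which does not hold well on soft soil; `track_gauge` (the lateral distance between track centerlines) and the function name are illustrative.

```python
def track_body_velocity(v_left, v_right, track_gauge):
    """Ideal (no-slip) body motion of a tracked vehicle.

    v_left, v_right : ground speeds of the left and right tracks [m/s]
    track_gauge     : distance between track centerlines [m]
    Returns (forward speed [m/s], yaw rate [rad/s], counterclockwise positive).
    """
    forward = (v_right + v_left) / 2.0
    yaw_rate = (v_right - v_left) / track_gauge
    return forward, yaw_rate
```

Driving the tracks at equal and opposite speeds gives zero forward speed and a nonzero yaw rate, i.e., a turn about the vehicle center with zero radius.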

2.1.3. Leg-Type Platform

Wheel- or track-type platforms may not be capable of traversing steep slopes or rough surfaces. Therefore, biomimetic leg-type platforms with four or six legs, modeled on animals such as horses and insects, are being studied. By moving their four or six legs alternately [2], they can walk while adapting to uneven ground. Studies on human-like bipedal robots have increased in recent years. Although legged vehicles can be used for various farm tasks, they move more slowly and consume more energy than wheeled or tracked vehicles. In addition, their mechanisms are complex and unsuitable for transporting heavy objects.

2.1.4. Hybrid Leg-Wheel-Type Platform

To combine the high mobility of a wheel-type platform with the ability to maneuver on rough ground, hybrid platforms combining legs and wheels have been developed.

Figure 3 shows the Kubota All-Terrain Rover [3, i]. This platform is a four-wheel-drive and four-wheel-steering vehicle. It has four legs, each of which consists of a three-degree-of-freedom hydraulic manipulator and a hydraulic motor. The height and attitude of the vehicle are adjusted using two joints of the manipulator, and the steering angle is controlled by the remaining joint. The platform can be kept horizontal by adjusting the positions of the four legs. In addition, the wheels attached to the elbow joints allow the platform to move on uneven surfaces.

Fig. 3. Hybrid leg-wheel-type platform (photo: M. Iida).

2.1.5. Multi-Copter Platform

The use of unmanned aerial vehicles, which have multiple rotors and can fly, hover, take off, and land, is also increasing in agriculture. Initially, they were equipped with visible and near-infrared cameras and were used for remote sensing to photograph crops from the sky and diagnose their growth. In recent years, they have become well-known as aerial platforms that can fly automatically, spray pesticides, and apply fertilizers over a wide area in a short time [4, 5].

2.2. Power Source

Power sources are important for agricultural robots that operate outdoors. Agricultural machinery such as tractors and combines uses engines as a power source to generate hydraulic power and electricity to operate various implements. However, for a decarbonized society, electric robots that operate on electricity from batteries or fuel cells rather than engines that use fossil fuels are being studied. Therefore, in this review, power source classification includes engine and electric types [6] and hybrid types that combine both engines and electricity.

2.3. Implementation

Many agricultural robots are robotized agricultural machines, such as commercially available tractors [7–9], rice transplanters [10], and combine harvesters [1]. These are mobile platforms with automatic driving technology that carry various implements. In the case of tractors, automation and robotization are achieved by selecting and replacing the implements for each agricultural task. Grains such as rice, wheat, and beans are harvested all at once on a field-by-field basis using harvesters. Therefore, combine harvesters have been robotized by adding automatic driving technology. In contrast, for selective harvesting of fruits and vegetables, research and development efforts combine a manipulator, a hand, and a vision unit that detects the position and ripeness of the product to be harvested. However, studies on using manipulators and hands for tasks other than harvesting are lacking.

2.4. Navigation in Open Field

Since the 1990s, autonomous navigation using the global navigation satellite system (GNSS), total stations, and inertial measurement units (IMUs) has been studied as a guidance method for agricultural robots working in farm fields. Since 2000, the focus has been on methods that combine the GNSS and IMUs to estimate the position and orientation of a robot with high accuracy and allow it to travel along a target route.

Navigation is classified into absolute and relative. The GNSS can determine the absolute position of an object on Earth with high accuracy.

By contrast, detecting position and orientation using ultrasonic sensors, light detection and ranging (LiDAR), and cameras yields the position and orientation of an object relative to those of the surrounding objects.

2.4.1. Absolute Positioning

The GNSS positioning accuracy varies significantly depending on the method used, such as single-point, differential, and real-time kinematic (RTK) positioning. Agricultural robots use RTK positioning, which has a positioning error of a few centimeters. Differential positioning is used for automatic straight-line movement of tractors and rice transplanters. For automatic driving of agricultural robots, detecting not only their position but also their attitude and heading is important; therefore, a combination of the GNSS and IMUs is used [1, 7–10].
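As a rough illustration of how GNSS and IMU measurements can complement each other for heading estimation, the sketch below uses a simple complementary filter: the gyro yaw rate is integrated for short-term accuracy, and the result is pulled slowly toward a GNSS-derived heading to suppress drift. This is an assumed, simplified stand-in; the cited systems may use other estimators (for example, Kalman filters), and the gain value and function names are hypothetical.

```python
import math

def wrap_angle(angle):
    """Wrap an angle to the interval [-pi, pi)."""
    return (angle + math.pi) % (2.0 * math.pi) - math.pi

def fuse_heading(prev_heading, gyro_rate, dt, gnss_heading, gain=0.02):
    """One step of a complementary filter for heading [rad].

    prev_heading : previous heading estimate [rad]
    gyro_rate    : yaw rate measured by the IMU [rad/s]
    dt           : time step [s]
    gnss_heading : heading derived from GNSS [rad]
    gain         : weight of the GNSS correction (hypothetical value)
    """
    predicted = prev_heading + gyro_rate * dt          # short-term: integrate gyro
    error = wrap_angle(gnss_heading - predicted)       # shortest angular difference
    return wrap_angle(predicted + gain * error)        # long-term: follow GNSS
```

The angle wrapping matters near ±π, where a naive subtraction would produce a correction of almost a full turn in the wrong direction.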

For a robot to perform agricultural work automatically in a field, accurately detecting its position and orientation is essential. Therefore, high-precision RTK-GNSS positioning has been used in agricultural robots since the late 1990s. This method is the most commonly used navigation sensing method for agricultural robots, such as tractors, rice transplanters, and combine harvesters.

Since the 2000s, GNSS positioning has used not only the global positioning system (GPS) but also GLONASS to improve the positioning accuracy, stability, and reliability. Since 2018, multifrequency GNSS receivers compatible with Galileo, BeiDou, and QZSS [9] have been used, and high-precision positioning has become stable, even on farmland in mountainous areas of Japan.

2.4.2. Relative Positioning

Many studies have been conducted on detecting the relative position and orientation of a target using camera images to position a vehicle or an arm. Ma [11] developed a visual navigation system that detects the relative position between a vehicle and a rice row while capturing images of rice plants in real time using an industrial camera. Automatic harvesting using a robot combine has been performed using RTK-GNSS positioning and IMUs. When the grain tank is full, the position and orientation of the container of the transporter are detected using camera images to position the spout of the unloader [12]. Studies have also been conducted to detect the relative position and orientation of a charging station using camera images to connect the charger electrodes to the vehicle electrodes. This enables electric agricultural machinery to control its position and dock to charge its batteries [13].

2.4.3. Path Planning and Path Following Control

Agricultural robots perform plowing, rice planting, and harvesting while following a target path. Therefore, separate control methods are used to move straight along the target path and turn around the headland.

When tilling using a robot tractor, a target route that combines straight movements and turning around the headland is generated. Keyhole or switchback turns are performed on the headland depending on the working width [8, 14].

In a robotic combine harvester, a spiral working path is created that combines straight movements and turns to harvest from the periphery of a field [1]. Combine harvesters can perform spin turns; however, to avoid trampling crops, they perform alpha or switchback turns.

As described above, straight-line and turning control of agricultural robots are important technologies, and although they differ depending on the steering mechanism of the platform, they perform agricultural work by following a target path.
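Straight-line path following is commonly formulated in terms of the lateral (cross-track) error and the heading error relative to the target line. The sketch below computes both errors for a directed line segment and applies a simple proportional steering law with saturation. The gains, the steering limit, and the control law itself are illustrative assumptions and do not reproduce the controllers of the cited robots.

```python
import math

def path_errors(x, y, heading, ax, ay, bx, by):
    """Lateral and heading errors relative to the directed segment A -> B.

    A positive lateral error means the vehicle is to the left of the path.
    """
    dx, dy = bx - ax, by - ay
    length = math.hypot(dx, dy)
    # Signed distance from the line: 2D cross product divided by segment length.
    lateral = (dx * (y - ay) - dy * (x - ax)) / length
    path_heading = math.atan2(dy, dx)
    diff = heading - path_heading
    heading_err = math.atan2(math.sin(diff), math.cos(diff))  # wrapped to [-pi, pi]
    return lateral, heading_err

def steering_command(lateral, heading_err, k_lat=0.5, k_head=1.0,
                     max_steer=math.radians(35)):
    """Proportional steering law (hypothetical gains), saturated at max_steer.

    Positive steering turns the vehicle to the left (counterclockwise).
    """
    steer = -(k_lat * lateral + k_head * heading_err)
    return max(-max_steer, min(max_steer, steer))
```

For a vehicle 0.2 m to the left of a path along the x-axis and heading parallel to it, this law commands a small steering angle to the right, which brings the vehicle back toward the line.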

3. Implementation

In many cases, agricultural work such as tilling, fertilizing, sowing, transplanting, and spraying is performed autonomously by attaching various implements to tractors. A manipulator equipped with a hand is used to harvest fruits and vegetables. In particular, a visual system that detects fruits and determines their location is important for fruit-harvesting robots.

3.1. Manipulator and Hand

When harvesting fruits and vegetables, the fruits are selected and picked individually according to their ripeness. Therefore, a hand that can gently yet securely grasp a fruit and a manipulator that can accurately position the hand on the fruit are required. Since the 1980s, research and development have been conducted on tomato and citrus fruit-harvesting robots in Japan, orange-harvesting robots in the United States, and apple-harvesting robots in France. These manipulators are available in hydraulic and electric types. The joint configuration is either a vertically articulated type or a polar-coordinate type, chosen by considering the range of motion relative to the position of the harvesting target.

In addition, for outdoor watermelon cultivation, where the fruits are heavy and the planting rows are wide, research has been conducted on manipulators and hands that have a long range of motion and can pick up fruits grown on the ground surface [15].

Fruit-harvesting robots [16–18] require functions such as fruit position detection, ripeness determination, accurate positioning of the hand, and fruit separation. Therefore, the integration of cameras, hands, manipulators, and artificial intelligence (AI) is being promoted. In apple production, harvesting requires considerable labor time; therefore, research was conducted on a dual-arm harvesting robot to solve this problem. Using an algorithm to operate the two arms efficiently, a 34% reduction in labor time was achieved [19].

3.2. Fruit Detection

Yang et al. [20] reviewed vision-based fruit recognition and harvest-positioning control for fruit-harvesting robots. To detect fruits, color (RGB) information and AI-based fruit detection are applied, and stereo vision and depth images are used to obtain the harvesting position.
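To illustrate how stereo vision yields a harvesting position, the sketch below converts the pixel coordinates of a detected fruit and its disparity into a 3D point in the camera frame, using the standard pinhole relation between depth, focal length, baseline, and disparity. It assumes a rectified stereo pair; the parameter names and the numerical values in the usage note are hypothetical.

```python
def pixel_to_camera_xyz(u, v, disparity, focal_px, baseline_m, cx, cy):
    """Back-project a pixel with known disparity into camera coordinates [m].

    u, v       : pixel coordinates of the fruit center in the left image
    disparity  : horizontal shift between left and right images [px]
    focal_px   : focal length [px]
    baseline_m : distance between the two camera centers [m]
    cx, cy     : principal point [px]
    """
    if disparity <= 0:
        raise ValueError("disparity must be positive")
    z = focal_px * baseline_m / disparity   # depth along the optical axis
    x = (u - cx) * z / focal_px             # right of the optical axis
    y = (v - cy) * z / focal_px             # below the optical axis
    return x, y, z
```

With a hypothetical focal length of 700 px and a baseline of 0.1 m, a disparity of 35 px corresponds to a depth of about 2 m. Because depth is inversely proportional to disparity, the depth resolution degrades as the fruit gets farther from the camera.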

Zhou et al. [21] used a camera mounted on the robot hand to detect a fruit and determine its relative position for harvesting.

4. Sensing Technology

4.1. Simultaneous Localization and Mapping

Performing high-precision positioning using the GNSS is difficult in farm fields and orchards in mountainous regions. In such environments, self-localization methods using LiDAR and cameras are being studied.

In orchards, the shape of the tree crown changes significantly with the season; however, trunks are easy to detect and suitable for map creation. Therefore, detection is possible with simultaneous localization and mapping (SLAM) using two-dimensional (2D) LiDAR. Because the detection results change depending on the posture of the vehicle when traveling on rough terrain, SLAM using three-dimensional (3D) LiDAR is more appropriate. However, as the number of points obtained from a single scan increases, the time required for matching calculations increases significantly. The normal distributions transform (NDT) and iterative closest point (ICP) algorithms are used to process scans efficiently and effectively [22].
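The core of ICP-style scan matching is a least-squares rigid alignment between paired points; a full ICP implementation alternates this step with a nearest-neighbor search for the pairs. The following is a minimal 2D sketch of the alignment step only, assuming the correspondences (for example, detected trunk positions) are already known. It is not the registration pipeline of the cited work, and the function name is illustrative.

```python
import math

def align_2d(source, target):
    """Least-squares rigid transform mapping paired 2D points source -> target.

    source, target : equal-length lists of (x, y) tuples, paired by index
    Returns (theta, tx, ty) such that R(theta) * p + (tx, ty) approximates q.
    """
    n = len(source)
    sx = sum(p[0] for p in source) / n
    sy = sum(p[1] for p in source) / n
    tx = sum(q[0] for q in target) / n
    ty = sum(q[1] for q in target) / n
    s_dot = 0.0
    s_cross = 0.0
    for (px, py), (qx, qy) in zip(source, target):
        ax, ay = px - sx, py - sy          # centered source point
        bx, by = qx - tx, qy - ty          # centered target point
        s_dot += ax * bx + ay * by
        s_cross += ax * by - ay * bx
    theta = math.atan2(s_cross, s_dot)     # optimal rotation in closed form
    c, s = math.cos(theta), math.sin(theta)
    # Translation that maps the rotated source centroid onto the target centroid.
    return theta, tx - (c * sx - s * sy), ty - (s * sx + c * sy)
```

The cost of the nearest-neighbor search, which this sketch omits, grows with the number of points per scan, which is consistent with the increase in matching time noted above for 3D LiDAR.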

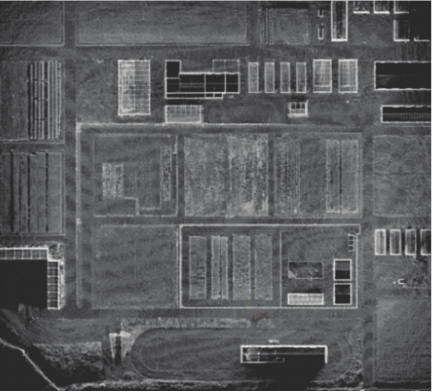

Okamoto et al. [23] used LiDAR-based SLAM technology to enable a robot combine to enter and exit garages where the GNSS cannot be used and to navigate farm roads that are subject to seasonal changes. They created a point cloud map of the experimental farm using point cloud data obtained using 3D LiDAR (Fig. 4).

In camera-based SLAM, feature points in an image are detected, and the 3D position of the feature points is calculated using stereo vision or the distance (depth) from an RGB-D camera [24]. In the former method, parallax is obtained either from the shift in viewpoint as a monocular camera moves or from a stereo camera.

Fig. 4. A 3D point cloud map of the driving area in the experimental farm [23].

Fig. 5. Ultrasonic sensors and 2D LiDAR (photo: M. Iida).

In SLAM, the position and orientation of a robot are estimated by matching the detected point cloud with a point cloud map created in advance. However, when a large change occurs in the speed or angular velocity, the range in which the matching process is performed must be widened, which increases the calculation time and can cause false matches. To compensate for this, the distance traveled by the robot is detected using a vehicle speed sensor or an IMU to shorten the processing time and improve the estimation accuracy [25].

4.2. Safety and Intelligence

4.2.1. Obstacle Detection and Avoidance

When a robot performs an automated task, ensuring its safety by preventing it from coming into contact with nearby people or objects and causing harm is necessary. To achieve this, a robot must detect nearby people and objects as obstacles.

Ultrasonic sensors are frequently used to detect obstacles in open fields (Fig. 5). They are inexpensive and reliable and can easily measure the distance to an object. However, the measurement cycle depends on the frequency of the ultrasonic waves used, and the measurement accuracy varies with temperature. In addition, ultrasonic sensors can detect obstacles only at close range. Therefore, studies have been conducted to combine them with millimeter-wave radar sensors, which can detect obstacles at longer distances [26]. Studies have also been conducted on using 3D LiDAR to detect obstacles and track their movements [27]. In recent years, obstacle detection has been performed using a combination of the LiDAR and cameras used in SLAM.
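The temperature dependence of ultrasonic ranging arises because the speed of sound in air changes with temperature. The sketch below converts an echo round-trip time into a distance using a common linear approximation for the speed of sound in dry air; the function names are illustrative, and humidity and wind are ignored.

```python
def speed_of_sound(temp_c):
    """Approximate speed of sound in dry air [m/s] at temp_c [deg C]."""
    return 331.3 + 0.606 * temp_c

def ultrasonic_distance(echo_time_s, temp_c=20.0):
    """Distance to an obstacle [m] from the echo round-trip time [s]."""
    # The pulse travels to the obstacle and back, hence the division by 2.
    return speed_of_sound(temp_c) * echo_time_s / 2.0
```

For a round-trip time of 10 ms, the computed distance is about 1.72 m at 20 °C and about 1.66 m at 0 °C, so a sensor that assumes a fixed temperature can be off by several centimeters in the field.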

Ospina and Itakura showed that a robot tractor operating on farm roads can avoid obstacles by detecting them using 2D LiDAR and a layered cost map [28].
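The general idea of a layered cost map can be sketched as follows: each information source (for example, a static map of the road edges and the obstacles currently seen by LiDAR) fills its own grid layer, obstacles are inflated by a safety margin, and the layers are merged cell by cell. This is a simplified illustration of the concept, not the implementation of the cited study; the cost values, the square inflation pattern, and the function names are assumptions.

```python
def inflate(layer, radius_cells, lethal=100, inflated=50):
    """Surround every lethal cell with a square margin of inflated cost."""
    rows, cols = len(layer), len(layer[0])
    out = [row[:] for row in layer]
    for r in range(rows):
        for c in range(cols):
            if layer[r][c] < lethal:
                continue
            for rr in range(max(0, r - radius_cells), min(rows, r + radius_cells + 1)):
                for cc in range(max(0, c - radius_cells), min(cols, c + radius_cells + 1)):
                    out[rr][cc] = max(out[rr][cc], inflated)
    return out

def combine_layers(*layers):
    """Merge equally sized cost layers cell by cell, keeping the highest cost."""
    rows, cols = len(layers[0]), len(layers[0][0])
    return [[max(layer[r][c] for layer in layers) for c in range(cols)]
            for r in range(rows)]
```

A path planner can then search the merged grid while avoiding lethal cells and penalizing inflated ones. Keeping the layers separate allows the obstacle layer to be refreshed at every scan without rebuilding the static layer.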

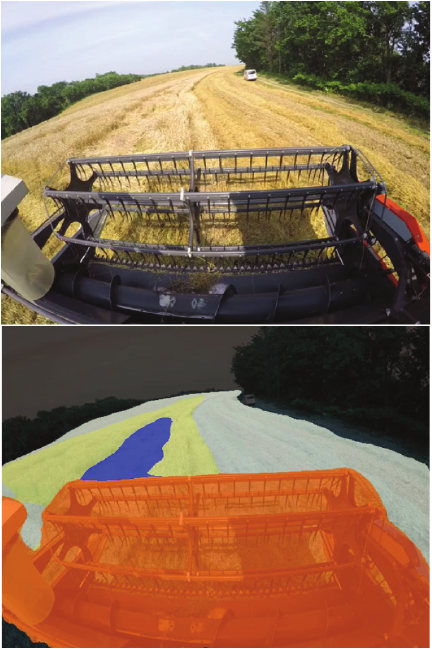

Fig. 6. Object detection by semantic segmentation [31].

Ultrasonic sensors and LiDAR only detect the distance of a robot to an obstacle; they do not identify whether an obstacle is a person. Li et al. [29, 30] detected people from camera images through real-time processing using semantic segmentation to ensure the safety of a robot combine.

4.2.2. Object Detection

Object identification is important for robots to recognize their surroundings. Zhu et al. [31, 32] used semantic segmentation to detect not only rice plants but also stubble, ridges, and people in a rice-harvesting field for a robot combine harvester (Fig. 6).

Chen et al. [33] used deep learning to detect lodging of rice plants, harvest scars, ridges, people, and other obstacles in real time from images captured using a fisheye-lens camera mounted on a large robotic combine harvester.

Tractors, rice transplanters, and combine harvesters are equipped with implements such as tilling, rice planting, and harvesting devices such that they can perform agricultural work while driving automatically. Therefore, in Japan and overseas, navigation technology is used to detect the position of a vehicle and control its speed, turning, and implements, thereby making it possible for the vehicle to work automatically.

Because fruits and vegetables ripen at different stages, selectively harvesting individual fruits according to their ripeness is necessary. Therefore, studies have been conducted on harvesting robots that use manipulators and hands.

In addition, cultivation methods for fruits and vegetables vary depending on the type and variety as well as the region in which they are grown. The sizes and shapes of fruits and vegetables also vary. Therefore, manipulators and hands with various sizes, shapes, and functions have been researched.

4.2.3. Remote Monitoring System for Safety

Under current legal conditions, human supervision is essential during the operation of agricultural robots. Therefore, to perform agricultural operations efficiently, a system was proposed that allows one person to monitor multiple robots remotely. In this system, images of the surroundings of a robot are acquired using a monocular camera mounted on the robot. The images are then analyzed by a remote server to detect the locations of humans, and the results are sent to the robot and supervisor [34].

5. Summary

We reviewed agricultural robots operating in open fields. Many agricultural tasks involve repeating simple steps while driving. Agricultural tasks can be automated by adding navigation sensors and autonomous driving technology to existing agricultural machinery. However, because weather, soil conditions, and field shapes vary depending on the time of the work and the field, corrections and reconfigurations must be made on-site. In the future, these task settings are expected to be automated using AI.

Current agricultural robots require an assistant to replenish materials such as fertilizers and seeds when fertilizing or sowing. To further advance automation in agricultural production sites, automation of material replenishment is necessary.

Robots that selectively harvest fruits and vegetables use a visual device to find fruits as well as a manipulator and hand as working machines. Fruit detection, which previously had low detection accuracy and required long processing times, can now achieve high detection accuracy in real time using AI. However, manipulators and hands are expensive, and the working speed is limited. In addition, developing a cultivation system for robotic harvesting is necessary.

With regard to the safety of robotic work, in addition to the research and development of safety features to be installed in robots, discussions are required regarding legal regulations and health applications.

- [1] M. Iida, R. Uchida, H. Zhu, M. Suguri, H. Kurita, and R. Masuda, “Path-following control of a head-feeding combine robot,” Engineering in Agriculture, Environment and Food, Vol.6, No.2, pp. 61-67, 2013. https://doi.org/10.11165/eaef.6.61

- [2] M. Iida, D. Kang, M. Taniwaki, M. Tanaka, and M. Umeda, “Localization of CO2 source by a hexapod robot equipped with an anemoscope and a gas sensor,” Computers and Electronics in Agriculture, Vol.63, No.1, pp. 73-80, 2008. https://doi.org/10.1016/j.compag.2008.01.016

- [3] K. Takayama, M. Iida, M. Suguri, and R. Masuda, “Automatic travelling of 4-wheel-drive and 4-wheel-steering vehicle ‘KATR’,” Report of Kansai Society of Agricultural Machinery and Food Engineers, Vol.138, 2025 (in Japanese).

- [4] S. Ijaz, Y. Shi, Y. A. Khan, M. Khodaverdian, and U. Javid, “Robust adaptive control law design for enhanced stability of agriculture UAV used for pesticide spraying,” Aerospace Science and Technology, Vol.155, Article No.109676, 2024. https://doi.org/10.1016/j.ast.2024.109676

- [5] Z. Ren, H. Zheng, J. Chen, T. Chen, P. Xie, Y. Xu, J. Beng, H. Wang, M. Sun, and W. Jiao, “Integrating UAV, UGV and UAV-UGV collaboration in future industrialized agriculture: Analysis, opportunities and challenges,” Computers and Electronics in Agriculture, Vol.227, Article No.109631, 2024. https://doi.org/10.1016/j.compag.2024.109631

- [6] Y. Yamasaki, K. Ishii, and N. Noguchi, “Speed control of an autonomous electric vehicle for orchard spraying,” Computers and Electronics in Agriculture, Vol.236, Article No.110419, 2025. https://doi.org/10.1016/j.compag.2025.110419

- [7] R. Takai, L. Yang, and N. Noguchi, “Development of crawler-type robot tractor based on GNSS and IMU,” IFAC Proc. Volumes, Vol.46, No.4, pp. 95-98, 2013. https://doi.org/10.3182/20130327-3-JP-3017.00024

- [8] L. Yang, N. Noguchi, and R. Takai, “Development and application of a wheel-type robot tractor,” Engineering in Agriculture, Environment and Food, Vol.9, pp. 131-140, 2016. https://doi.org/10.1016/j.eaef.2016.04.003

- [9] H. Wang and N. Noguchi, “Navigation of a robot tractor using the centimeter level augmentation information via quasi-zenith satellite system,” Engineering in Agriculture, Environment and Food, Vol.12, No.4, pp. 414-419, 2019. https://doi.org/10.1016/j.eaef.2019.06.003

- [10] Y. Nagasaka, N. Umeda, Y. Kanetani, K. Taniwaki, and Y. Sasaki, “Autonomous guidance for rice transplanting using global positioning and gyroscopes,” Computers and Electronics in Agriculture, Vol.43, pp. 223-234, 2004. https://doi.org/10.1016/j.compag.2004.01.005

- [11] Z. Ma, “Rice row tracking control of crawler tractor based on the satellite and visual integrated navigation,” Computers and Electronics in Agriculture, Vol.197, Article No.106935, 2022. https://doi.org/10.1016/j.compag.2022.106935

- [12] H. Kurita, M. Iida, W. J. Cho, and M. Suguri, “Rice autonomous harvesting: Operation framework,” J. of Field Robotics, Vol.34, No.6, pp. 1084-1099, 2017. https://doi.org/10.1002/rob.21705

- [13] H. Hsu, M. Iida, J. Zhu, M. Ishii, K. Nonami, and M. Suguri, “Vision-based parking control system for docking to recharge an agricultural electric vehicle,” Engineering in Agriculture, Environment and Food, Vol.18, No.3, pp. 186-192, 2025. https://doi.org/10.37221/eaef.18.3_186

- [14] R. Takai, L. Yang, and N. Noguchi, “Development of a crawler-type robot tractor using RTK-GPS and IMU,” Engineering in Agriculture, Environment and Food, Vol.7, pp. 143-147, 2014. https://doi.org/10.1016/j.eaef.2014.08.004

- [15] S. Sakai, M. Iida, K. Osuka, and M. Umeda, “Design and control of a heavy material handling manipulator for agricultural robots,” Autonomous Robots, Vol.25, pp. 189-204, 2008. https://doi.org/10.1007/s10514-008-9090-y

- [16] W. Hua, Z. Zhang, W. Zhang, X. Liu, C. Hu, Y. He, M. Mhamed, X. Li, H. Dong, C. K. Saha, W. U. Khan, F. Abid, and M. A. Abdelhamid, “Key technologies in apple harvesting robot for standardized orchards: A comprehensive review of innovations, challenges, and future directions,” Computers and Electronics in Agriculture, Vol.235, Article No.110343, 2025. https://doi.org/10.1016/j.compag.2025.110343

- [17] Y. Onishi, T. Yoshida, H. Kurita, T. Fukao, H. Arihara, and A. Iwai, “An automated fruit harvesting robot by using deep learning,” ROBOMECH J., Vol.6, Article No.13, 2019. https://doi.org/10.1186/s40648-019-0141-2

- [18] D. W. Choi, J. H. Park, J. Yoo, and K. Ko, “AI-driven adaptive grasping and precise detaching robot for efficient citrus harvesting,” Computers and Electronics in Agriculture, Vol.232, Article No.110131, 2025. https://doi.org/10.1016/j.compag.2025.110131

- [19] K. Lammers, K. Zhang, K. Zhu, P. Chu, Z. Li, and R. Lu, “Development and evaluation of a dual-arm robotic apple harvesting system,” Computers and Electronics in Agriculture, Vol.227, Article No.109586, 2024. https://doi.org/10.1016/j.compag.2024.109586

- [20] Y. Yang, Y. Han, S. Li, Y. Yang, M. Zhang, and H. Li, “Vision based fruit recognition and positioning technology for harvesting robots,” Computers and Electronics in Agriculture, Vol.213, Article No.108258, 2023. https://doi.org/10.1016/j.compag.2023.108258

- [21] H. Zhou, A. Ahmed, T. Liu, M. Romeo, T. Beh, Y. Pan, H. Kang, and C. Chen, “Finger vision enabled real-time defect detection in robotic harvesting,” Computers and Electronics in Agriculture, Vol.234, Article No.110222, 2025. https://doi.org/10.1016/j.compag.2025.110222

- [22] S. Jiang, P. Qi, L. Han, L. Liu, Y. Li, Z. Hung, Y. Liu, and X. He, “Navigation system for orchard spraying robot based on 3D LiDAR SLAM with NDT_ICP point cloud registration,” Computers and Electronics in Agriculture, Vol.220, Article No.108870, 2024. https://doi.org/10.1016/j.compag.2024.108870

- [23] S. Okamoto, M. Iida, D. Chen, S. Konishi, M. Suguri, and R. Masuda, “Autonomous farm-road traveling of a robot with harvester using LiDAR-SLAM,” J. of Japanese Society of Agricultural Machinery and Food Engineers, Vol.87, No.3, pp. 204-214, 2025 (in Japanese). https://doi.org/10.11357/jsamfe.87.3_204

- [24] J. Gimenez, S. Sansoni, S. Tosetti, F. Capraro, and R. Carelli, “Trunk detection in tree crops using RGB-D images for structure-based ICM-SLAM,” Computers and Electronics in Agriculture, Vol.199, Article No.107099, 2022. https://doi.org/10.1016/j.compag.2022.107099

- [25] H. G. Kim, H. M. Lee, and S. H. Lee, “A new covariance intersection based integrated SLAM framework for 3D outdoor agricultural applications,” Electronics Letters, Vol.60, No.9, Article No.e13206, 2024. https://doi.org/10.1049/ell2.13206

- [26] N. Noguchi, “Agricultural Vehicle Robot,” J. Robot. Mechatron., Vol.30, No.2, pp. 165-172, 2018. https://doi.org/10.20965/jrm.2018.p0165

- [27] W. Jiang, W. Chen, C. Song, Y. Yan, Y. Zhang, and S. Wang, “Obstacle detection and tracking for intelligent agricultural machinery,” Computers and Electrical Engineering, Vol.108, No.1, Article No.108670, 2023. https://doi.org/10.1016/j.compeleceng.2023.108670

- [28] R. Ospina and K. Itakura, “Obstacle detection and avoidance system based on layered costmaps for robot tractors,” Smart Agricultural Technology, Vol.11, Article No.100973, 2025. https://doi.org/10.1016/j.atech.2025.100973

- [29] Y. Li, M. Iida, M. Suguri, and R. Masuda, “Regional segmentation of field images based on convolutional neural network for rice combine harvester,” J. of the Japanese Society of Agricultural Machinery and Food Engineers, Vol.82, No.1, pp. 47-56, 2020.

- [30] Y. Li, M. Iida, T. Suyama, M. Suguri, and R. Masuda, “Implementation of deep-learning algorithm for obstacle detection and collision avoidance for robotic harvester,” Computers and Electronics in Agriculture, Vol.174, Article No.105499, 2020. https://doi.org/10.1016/j.compag.2020.105499

- [31] J. Zhu, M. Iida, Y. Li, S. Chen, S. Cheng, M. Suguri, and R. Masuda, “Real-time object detection in rice field images by semantic segmentation for robotic combine harvester – Pixel-wise level detection of rice lodging existence –,” J. of the Japanese Society of Agricultural Machinery and Food Engineers, Vol.84, No.3, pp. 145-154, 2022.

- [32] J. Zhu, M. Iida, S. Chen, S. Cheng, M. Suguri, and R. Masuda, “Paddy field object detection for robotic combine based on real-time semantic segmentation algorithm,” J. of Field Robotics, Vol.41, pp. 273-287, 2023. https://doi.org/10.1002/rob.22260

- [33] S. Chen, M. Iida, Y. Li, J. Zhu, S. Cheng, M. Suguri, and R. Masuda, “Detection of lodging rice areas in rice fields by semantic segmentation via a fisheye-lens camera,” J. of the Japanese Society of Agricultural Machinery and Food Engineers, Vol.85, No.4, pp. 226-233, 2023.

- [34] S. Chen and N. Noguchi, “Remote safety system for a robot tractor using a monocular camera and a YOLO-based method,” Computers and Electronics in Agriculture, Vol.215, Article No.108409, 2023. https://doi.org/10.1016/j.compag.2023.108409

- [a] https://products.iseki.co.jp/tractor/trac-robot/ [Accessed June 15, 2025]

- [b] https://agriculture.kubota.co.jp/product/tractor/MR-1000-AH/ [Accessed June 15, 2025]

- [c] https://www.yanmar.com/jp/about/technology/vision2/robotics.html [Accessed June 15, 2025]

- [d] https://agriculture.kubota.co.jp/product/taueki/NW-8-10S-A/ [Accessed June 15, 2025]

- [e] https://www.yanmar.com/jp/about/ymedia/article/yr8da.html [Accessed June 15, 2025]

- [f] https://www.spidermower.com/SPIDER_ILD01 [Accessed June 15, 2025]

- [g] https://agriculture.kubota.co.jp/product/combine/DR-6130-A/ [Accessed June 15, 2025]

- [h] https://www.yanmar.com/jp/agri/products/harvest/combine/yh6101_yh6115/auto.html [Accessed June 15, 2025]

- [i] https://www.kubota.co.jp/kubotapress/technology/ces-katr.html [Accessed June 15, 2025]

This article is published under a Creative Commons Attribution-NoDerivatives 4.0 International License.