Paper:

Human Tracking of a Crawler Robot in Climbing Stairs

Yasuaki Orita*, Kiyotsugu Takaba*, and Takanori Fukao**

*Ritsumeikan University

1-1-1 Noji-higashi, Kusatsu-shi, Shiga 525-8577, Japan

**University of Tokyo

7-3-1 Hongo, Bunkyo-ku, Tokyo 113-8656, Japan

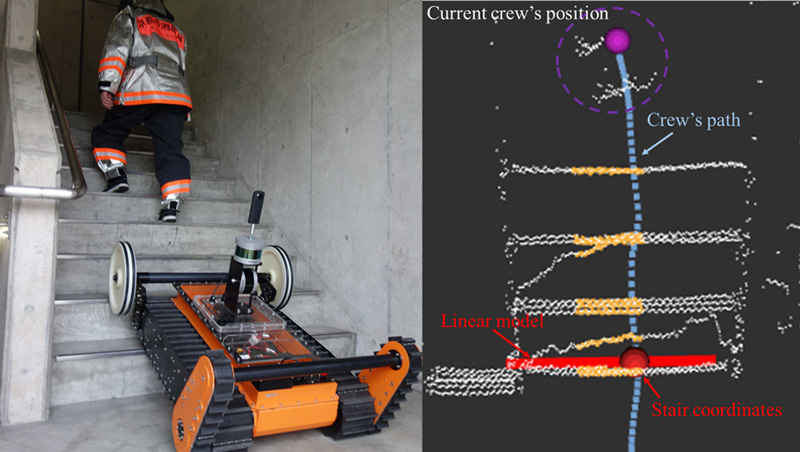

There are many reports of secondary damage to crews during firefighting operations. One way to support and enhance their activities is to have robots track them and carry supplies. In this paper, we propose a localization method for stairs that includes scene detection. The proposed method allows a robot to keep tracking a person who climbs a staircase. First, the scene detection autonomously detects that the person is climbing the stairs. Then, a linear model representing the first step of the staircase is combined with the person’s trajectory for localization. The method uses omnidirectional images and point clouds, so localization and scene detection are available from any posture around the stairs. Finally, using the localization result, the robot automatically navigates to a posture from which it can climb the stairs. Verification confirmed the accuracy and real-time capability of the method and demonstrated that an actual crawler robot autonomously takes a posture that is ready for climbing.

Autonomous human tracking in ascending stairs
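The localization idea summarized in the abstract (a line fitted to the first step, combined with the person's trajectory, yielding a posture from which the robot can climb) can be illustrated with a minimal sketch. Everything below is an assumption made for illustration and not the authors' implementation: the points are taken as already projected onto the 2D ground plane, the line is fitted with a basic RANSAC loop, and the function names `fit_line_ransac` and `climbing_pose`, the tolerance, and the standoff distance are hypothetical.

```python
import math
import random


def fit_line_ransac(points, n_iters=200, inlier_tol=0.03, seed=0):
    """Fit a 2D line to (x, y) points with a basic RANSAC loop.

    Returns (point_on_line, unit_direction, inliers).
    All parameters are illustrative assumptions.
    """
    rng = random.Random(seed)
    best_inliers = []
    for _ in range(n_iters):
        (x1, y1), (x2, y2) = rng.sample(points, 2)
        dx, dy = x2 - x1, y2 - y1
        norm = math.hypot(dx, dy)
        if norm < 1e-9:
            continue  # degenerate sample, skip
        nx, ny = -dy / norm, dx / norm  # unit normal of the candidate line
        inliers = [(x, y) for x, y in points
                   if abs((x - x1) * nx + (y - y1) * ny) <= inlier_tol]
        if len(inliers) > len(best_inliers):
            best_inliers = inliers
    if len(best_inliers) < 2:
        raise ValueError("no line found")
    # Refine with the principal axis of the inlier set.
    n = len(best_inliers)
    cx = sum(p[0] for p in best_inliers) / n
    cy = sum(p[1] for p in best_inliers) / n
    sxx = sum((p[0] - cx) ** 2 for p in best_inliers)
    syy = sum((p[1] - cy) ** 2 for p in best_inliers)
    sxy = sum((p[0] - cx) * (p[1] - cy) for p in best_inliers)
    theta = 0.5 * math.atan2(2.0 * sxy, sxx - syy)
    return (cx, cy), (math.cos(theta), math.sin(theta)), best_inliers


def climbing_pose(edge_point, edge_dir, trajectory, standoff=0.5):
    """Return (x, y, yaw) in front of the first step, facing up the stairs.

    The person's trajectory selects which of the two edge normals
    points up the stairs and where along the edge to approach.
    """
    nx, ny = -edge_dir[1], edge_dir[0]
    mx = trajectory[-1][0] - trajectory[0][0]
    my = trajectory[-1][1] - trajectory[0][1]
    if nx * mx + ny * my < 0:
        nx, ny = -nx, -ny  # make the normal agree with the person's motion
    # Trajectory sample closest to the edge line, projected onto the line.
    px, py = min(trajectory, key=lambda p: abs(
        (p[0] - edge_point[0]) * nx + (p[1] - edge_point[1]) * ny))
    t = (px - edge_point[0]) * edge_dir[0] + (py - edge_point[1]) * edge_dir[1]
    fx = edge_point[0] + t * edge_dir[0]
    fy = edge_point[1] + t * edge_dir[1]
    return (fx - standoff * nx, fy - standoff * ny, math.atan2(ny, nx))


# Synthetic data: a step edge along x = 2.0 plus a few outliers,
# and a person walking in the +x direction at y = 0.1.
edge_points = [(2.0, -0.5 + 0.05 * i) for i in range(21)]
outliers = [(0.5, 0.9), (1.0, -0.8), (3.0, 0.7)]
trajectory = [(0.5 * i, 0.1) for i in range(7)]

point, direction, inliers = fit_line_ransac(edge_points + outliers)
pose = climbing_pose(point, direction, trajectory)
```

In this synthetic case the resulting pose sits half a metre in front of the edge, on the person's path, with the heading perpendicular to the step. A real system would additionally need the scene detection and the 3D point cloud processing that the abstract mentions, which this sketch does not attempt.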


This article is published under a Creative Commons Attribution-NoDerivatives 4.0 International License.
