Paper:

Estimating Children’s Personalities Through Their Interaction Activities with a Tele-Operated Robot

Kasumi Abe*1,*2, Takayuki Nagai*2,*3, Chie Hieida*3, Takashi Omori*4, and Masahiro Shiomi*1

*1Advanced Telecommunications Research Institute International (ATR)

2-2-2 Hikaridai, Keihanna Science City, Kyoto 619-0288, Japan

*2The University of Electro-Communications

1-5-1 Chofugaoka, Chofu, Tokyo 182-8585, Japan

*3Osaka University

1-1 Yamada-oka, Suita, Osaka 565-0871, Japan

*4Tamagawa University

6-1-1 Tamagawagakuen, Machida, Tokyo 194-8610, Japan

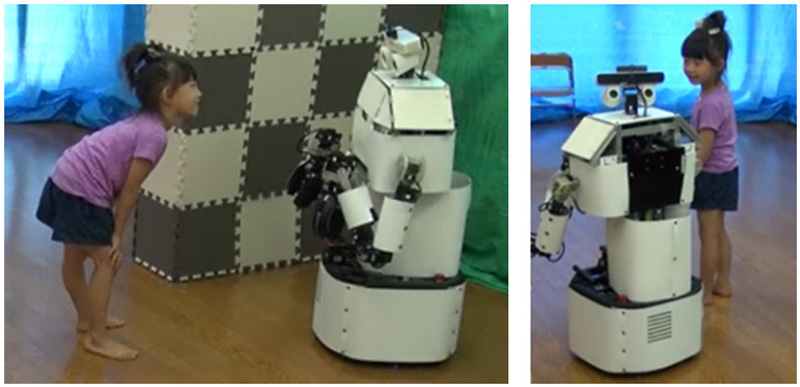

Based on the little big-five inventory, we developed a technique to estimate children’s personalities through their interaction with a tele-operated childcare robot. Our approach observed not only distance-based features but also face-image-based features while the robot interacted with a child at close range. We used only the robot’s sensors to track the child’s position, detect the child’s eye contact, and estimate how much the child smiled. We collected data at a kindergarten, where each child individually interacted for 30 min with a robot controlled by the teachers. We used 29 datasets of child-robot interaction to investigate whether the face-image-based features improved estimation performance. The evaluation results demonstrated that the face-image-based features significantly improved the performance of personality estimation, and that our system’s estimation accuracy was 70% on average across the personality scales.

Child interacts with a tele-operated robot
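
As a rough illustration of how per-frame sensor observations of the kind described in the abstract (child position, eye contact, smiling) might be condensed into one feature vector per session, consider the minimal sketch below. It is not the authors' implementation: the `Frame` fields, the `session_features` function, the 1.0 m "close" threshold, the smile scale of 0 to 1, and the chosen summary statistics are all assumptions made for illustration only.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List


@dataclass
class Frame:
    """One hypothetical sensor sample from the robot."""
    distance_m: float   # child-robot distance in metres (assumed unit)
    eye_contact: bool   # whether eye contact was detected in this sample
    smile: float        # smile degree on an assumed 0..1 scale


def session_features(frames: List[Frame], close_m: float = 1.0) -> Dict[str, float]:
    """Summarise one interaction session as a small feature dictionary.

    The first two entries are distance-based; the last two are
    face-image-based. The threshold `close_m` is an illustrative assumption.
    """
    if not frames:
        raise ValueError("session contains no frames")
    n = len(frames)
    return {
        "mean_distance": mean(f.distance_m for f in frames),
        "close_ratio": sum(f.distance_m <= close_m for f in frames) / n,
        "eye_contact_ratio": sum(f.eye_contact for f in frames) / n,
        "mean_smile": mean(f.smile for f in frames),
    }


if __name__ == "__main__":
    demo = [Frame(0.5, True, 0.8), Frame(1.5, False, 0.2)]
    print(session_features(demo))
```

A feature dictionary like this, computed once per child, could then be passed to any standard classifier; comparing a model trained on the distance-based entries alone with one trained on all four entries mirrors the comparison the abstract describes.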

This article is published under a Creative Commons Attribution-NoDerivatives 4.0 International License.