Research Paper:

Gaze-Data-Based Probability Inference for Menu Item Position Effect on Information Search

Yutaka Matsushita

Department of Media Informatics, Kanazawa Institute of Technology

3-1 Yatsukaho, Hakusan, Ishikawa 924-0838, Japan

This study examines the effect of menu items placed around a slideshow at the center of a webpage on information search. Specifically, the study analyzes the eye movements of users whose search time on a mixed-type landing page is long or short and considers the cause in relation to “directed search,” which triggers a certain type of mental workload. To this end, a Bayesian network model is developed to elucidate the relation between eye movement measures and search time. This model allows the degree to which directed search is implemented to be gauged from the levels of the measures that characterize a long or short search time. Because the model incorporates probabilistic dependencies and interactions among eye movement measures, it can associate various combinations of measure levels with different browsing patterns, which helps judge whether directed search is implemented. When viewers initially move their eyes in the direction opposite (identical) to the side on which the target information is located, the search time increases (decreases); this movement results from the menu items around the slideshow capturing viewers’ attention. However, viewers’ browsing patterns, which can be classified as either a series of orderly scans toward the target (directed search) or long-distance eye movements driven by the desire to reach the target promptly (undirected search), are not related to the initial eye movement direction. These findings suggest that, as a rule, the menu items of a website should not be placed around a slideshow, except when they are intentionally placed in only one direction (e.g., left, right, or below).
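The inference described above (reading search time off discretized eye movement measures, and in reverse gauging directed search from the evidence) can be illustrated with a toy Bayesian network. The sketch below does not reproduce the paper's graph or its conditional probabilities. It assumes a hypothetical three-node network in which the initial gaze direction relative to the target side (`direction`) and the browsing pattern (`pattern`) are both parents of search time (`time`). Every probability is a made-up placeholder, and posteriors are computed by exact enumeration of the joint distribution.

```python
from itertools import product

# Hypothetical toy network, NOT the fitted model from the paper:
#   direction -> time <- pattern
# direction: initial eye-movement direction relative to the target side
# pattern:   browsing pattern (directed vs. undirected search)
# time:      search time (long vs. short)
# Every probability below is a made-up placeholder for illustration.
DOMAINS = {
    "direction": ("same", "opposite"),
    "pattern": ("directed", "undirected"),
    "time": ("long", "short"),
}
P_DIRECTION = {"same": 0.5, "opposite": 0.5}
P_PATTERN = {"directed": 0.5, "undirected": 0.5}
# Conditional probability table: P(time = "long" | direction, pattern)
P_LONG = {
    ("same", "directed"): 0.3,
    ("same", "undirected"): 0.2,
    ("opposite", "directed"): 0.8,
    ("opposite", "undirected"): 0.7,
}


def joint(a):
    """Joint probability of one full assignment, factorized along the graph."""
    p_long = P_LONG[(a["direction"], a["pattern"])]
    p_time = p_long if a["time"] == "long" else 1.0 - p_long
    return P_DIRECTION[a["direction"]] * P_PATTERN[a["pattern"]] * p_time


def query(var, evidence):
    """Exact posterior P(var | evidence) by enumerating all assignments."""
    names = list(DOMAINS)
    scores = dict.fromkeys(DOMAINS[var], 0.0)
    for values in product(*(DOMAINS[n] for n in names)):
        a = dict(zip(names, values))
        # Keep only assignments consistent with the observed evidence.
        if all(a[k] == v for k, v in evidence.items()):
            scores[a[var]] += joint(a)
    total = sum(scores.values())
    return {value: s / total for value, s in scores.items()}


if __name__ == "__main__":
    print(query("time", {"direction": "opposite"}))
    print(query("pattern", {"time": "long"}))
    print(query("pattern", {"direction": "opposite"}))
```

With these placeholder numbers, observing an opposite-side initial movement raises the probability of a long search to about 0.75, and observing a long search shifts the posterior for directed search to about 0.55. Because `direction` and `pattern` are unconnected parents of `time`, querying `pattern` given only `direction` returns 0.5/0.5; the two become dependent only once search time is observed. This structural choice loosely echoes the finding that browsing patterns are unrelated to the initial eye movement direction, but it is an assumption of the sketch, not the paper's fitted structure.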

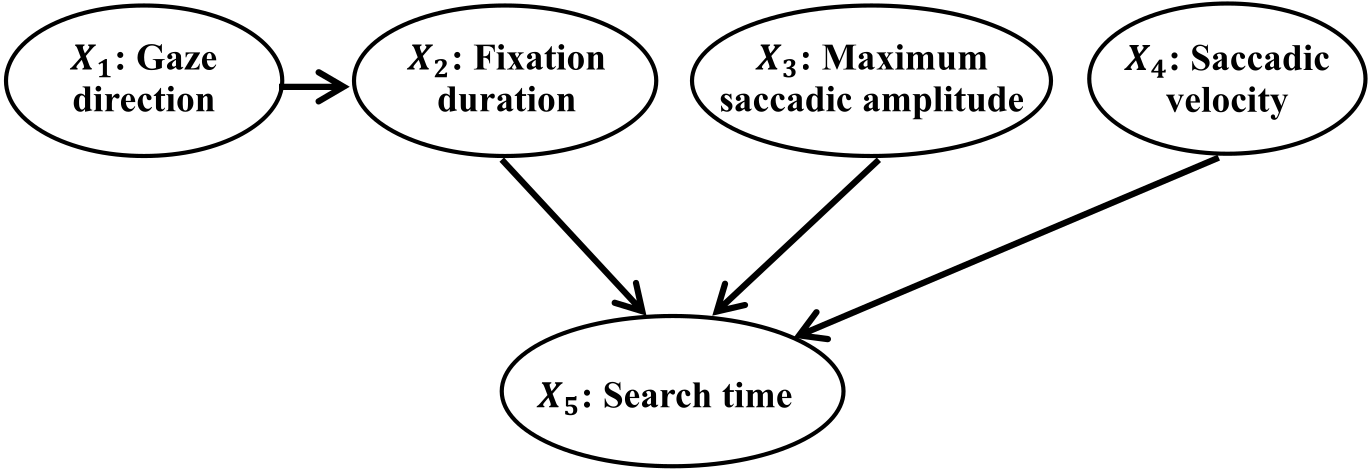

Graph structure of the Bayesian network

This article is published under a Creative Commons Attribution-NoDerivatives 4.0 International License.