Paper:

Effects of Shape Characteristics on Tactile Sensing Recognition and Brain Activation

Hidenori Sakaniwa*, Stephanie Sutoko**, Akiko Obata**, Hirokazu Atsumori**, Nobuhiro Fukuda***, Masashi Kiguchi**, and Akihiko Kandori**

*Center for Exploratory Research, Hitachi, Ltd.

1-280 Higashi-koigakubo, Kokubunji, Tokyo 185-8601, Japan

**Center for Exploratory Research, Hitachi, Ltd.

2520 Akanuma, Hatoyama-machi, Hiki-gun, Saitama 350-0395, Japan

***Center for Technology Innovation – Digital Technology, Hitachi, Ltd.

1-280 Higashi-koigakubo, Kokubunji, Tokyo 185-8601, Japan

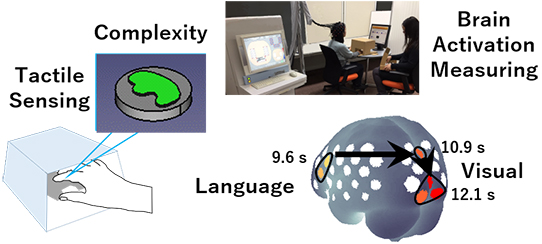

Training tactile sensing for shape recognition is considered an effective rehabilitation technique. Previous studies of tactile sensing have shown that recognition by touch tends to be ambiguous, which motivates tactile sensing rehabilitation. Eleven subjects explored objects hidden from view using their fingers and were asked to identify the shape of each object. The relationship between the degree of recognition and shape complexity was investigated. The results showed high self-confidence in recognizing high-complexity shapes. The recognition process was confirmed in a second experiment that measured brain activation using near-infrared spectroscopy. Measurements from eight subjects showed activation of verbal and visual processing regions, indicating that the act of handling the shape was translated into verbal expression and visual imagery. These results could help quantify tactile sensing and contribute to the realization of personalized rehabilitation.

Tactile sensing recognition and brain activation


This article is published under a Creative Commons Attribution-NoDerivatives 4.0 International License.