Paper:

Estimation of Branch Geometry and Hierarchy in Orchard Trees for Robotic Pruning

Mohammad Albaroudi*, Raji Alahmad*, Hussam Alraie**, Abdullah Alraee*, Shi Puwei*, Irmiya R. Inniyaka*, and Kazuo Ishii*

*Kyushu Institute of Technology

2-4 Hibikino, Wakamatsu-ku, Kitakyushu, Fukuoka 808-0196, Japan

**Middle East Technical University Northern Cyprus Campus

99750 Kalkanli, Guzelyurt, Mersin 10, Türkiye

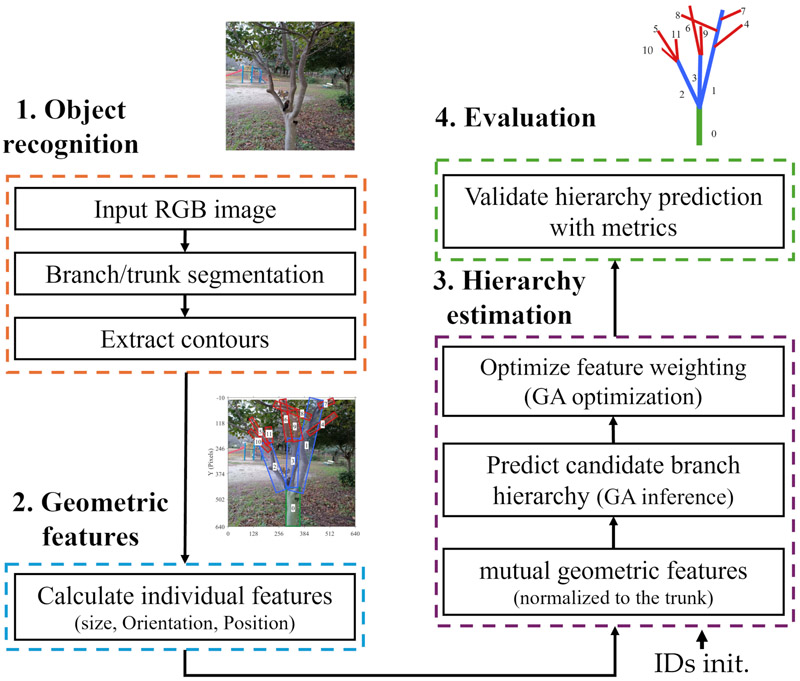

Orchard trees are cultivated primarily for food production, but they also provide environmental and aesthetic benefits. Proper maintenance, particularly pruning, is crucial; however, manual pruning is labor-intensive, time-consuming, and dependent on expertise, which limits its consistency at large scale. Automated pruning reduces labor demands and improves scalability. However, it faces additional challenges arising from the complex and inconsistent geometry of trees (branch size, position, and orientation) and their hierarchy (parent-child relationships), both of which play a vital role in pruning decisions. To address these challenges, a pipeline for estimating tree geometry and hierarchy was proposed. The branches and trunk were segmented from a single RGB image using a custom YOLOv8 model, and key geometric and distance features were extracted through principal component analysis (PCA), which captured over 99% of the geometric variation. A genetic algorithm then inferred the hierarchical relationships, assisting in the recognition of branch levels and supporting biologically informed pruning decisions. The experimental results revealed distinct features across the hierarchical levels, and hierarchical validation achieved an F1 score of approximately 80% and a Jaccard index exceeding 70%. These findings indicate that the proposed method can transform visual perception into geometric and hierarchical representations of tree structure, thereby providing essential structural information to support autonomous and biologically informed pruning decisions.
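The abstract reports hierarchical validation in terms of an F1 score and a Jaccard index but does not state here how they are computed. The sketch below shows one plausible way to score an inferred hierarchy, assuming (hypothetically) that each hierarchy is stored as a mapping from a branch ID to its parent ID and that both metrics are computed over the set of (child, parent) edges. The names `hierarchy_scores`, `to_edges`, and the branch IDs are illustrative only and are not taken from the paper.

```python
from typing import Dict, Set, Tuple

# Hypothetical representation (an assumption, not the paper's format):
# each branch ID maps to the ID of its parent (the trunk or another branch).
Hierarchy = Dict[str, str]


def to_edges(h: Hierarchy) -> Set[Tuple[str, str]]:
    """Convert a child -> parent mapping into a set of (child, parent) edges."""
    return set(h.items())


def hierarchy_scores(pred: Hierarchy, truth: Hierarchy) -> Tuple[float, float]:
    """Return (F1, Jaccard) between predicted and ground-truth edge sets.

    A predicted edge is a true positive only if the branch is assigned
    exactly the same parent as in the ground truth.
    """
    p, t = to_edges(pred), to_edges(truth)
    union = p | t
    if not union:
        # Two empty hierarchies are treated as a perfect match.
        return 1.0, 1.0
    tp = len(p & t)          # correctly recovered parent-child links
    fp = len(p - t)          # predicted links absent from the ground truth
    fn = len(t - p)          # ground-truth links that were missed
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    jaccard = tp / len(union)  # |P ∩ T| / |P ∪ T|
    return f1, jaccard


if __name__ == "__main__":
    truth = {"b1": "trunk", "b2": "trunk", "b3": "b1", "b4": "b1", "b5": "b2"}
    # One branch (b4) is attached to the wrong parent.
    pred = {"b1": "trunk", "b2": "trunk", "b3": "b1", "b4": "b2", "b5": "b2"}
    f1, jac = hierarchy_scores(pred, truth)
    print(f"F1={f1:.3f}, Jaccard={jac:.3f}")
```

In this toy example, one misassigned parent produces both a false positive and a false negative, giving F1 = 0.800 and Jaccard = 0.667. Scoring whole edges, rather than per-branch level labels, penalizes any wrong parent even when the branch level happens to be correct; the paper's actual scoring scheme may differ.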

Tree geometry and hierarchy estimation

This article is published under a Creative Commons Attribution-NoDerivatives 4.0 International License.