Development Report:

Robot Navigation in Forest Management

Abbe Mowshowitz*, Ayumu Tominaga**, and Eiji Hayashi**

*Department of Computer Science, The City College of New York (CUNY)

Convent Avenue at 138th Street, New York, NY 10031, USA

**Graduate School of Computer Science and Systems Engineering, Kyushu Institute of Technology

680-4 Kawazu, Iizuka, Fukuoka 820-8502, Japan

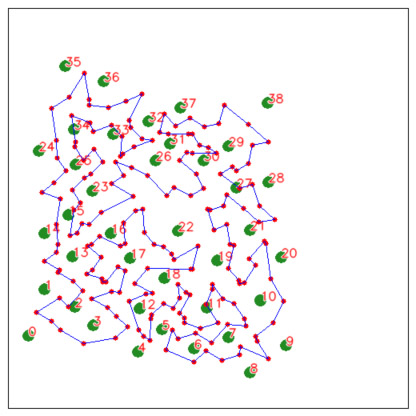

This paper addresses the problem of using a mobile, autonomous robot to manage a forest whose trees are destined for eventual harvesting. “Manage” in this context means periodic weeding between all the trees in the forest. We have constructed a robotic system that enables an autonomous robot to move between the trees without damaging them and to cut the weeds as it traverses the forest. This was accomplished by 1) computing a trajectory for the robot before it enters the forest, and 2) developing a program and equipping the robot with the instruments needed to follow the trajectory. Computation of a trajectory in a forest is facilitated by treating the trees as vertices in a graph. Current laser-based instruments make it possible to identify individual trees and compute the distances between them. With this information, a forest can be represented as a weighted graph. This graph can then be modified systematically in a way that allows for computing a Hamiltonian circuit that passes between each pair of trees. The resulting formulation is an instance of the well-known Travelling Salesman Problem. The theory was put into practice in an experimental forest located at the Kyushu Institute of Technology. Our robot “SOMA,” built on an ATV platform, was able to follow a part of the trajectory computed for this small forest, thus demonstrating the feasibility of forest maintenance by an autonomous, labor-saving robot.

Robot trajectory: Optimized Hamiltonian circuit derived from L(H*).
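The pipeline outlined in the abstract (trees as vertices, inter-tree distances as edge weights, a closed tour that passes between trees) can be illustrated with a minimal Python sketch. It is not the authors' implementation. Reading L(·) as the line-graph operator is an assumption, H* is not defined in this excerpt, and the tree coordinates, the `max_gap` threshold, and all function names are hypothetical. The sketch places one waypoint at the midpoint of each gap between neighbouring trees and orders the waypoints with a greedy nearest-neighbour heuristic, which yields a closed tour but not necessarily an optimal one. It also uses straight-line distances between waypoints and ignores line-graph adjacency and obstacles.

```python
import math
from itertools import combinations


def build_tree_graph(trees, max_gap):
    """Weighted graph: vertices are tree indices; an edge joins two trees
    whose distance is at most max_gap (hypothetical neighbourhood rule)."""
    edges = {}
    for i, j in combinations(range(len(trees)), 2):
        d = math.dist(trees[i], trees[j])
        if d <= max_gap:
            edges[(i, j)] = d
    return edges


def gap_waypoints(trees, edges):
    """One waypoint per edge, at the midpoint of the gap between two trees.
    Each waypoint plays the role of a line-graph vertex (assumption)."""
    return {
        (i, j): ((trees[i][0] + trees[j][0]) / 2,
                 (trees[i][1] + trees[j][1]) / 2)
        for (i, j) in edges
    }


def nearest_neighbour_circuit(points):
    """Greedy closed tour over the waypoints: a heuristic, not an exact
    Travelling Salesman solver."""
    keys = list(points)
    tour = [keys[0]]
    unvisited = set(keys[1:])
    while unvisited:
        last = points[tour[-1]]
        # Ties are broken by the waypoint key to keep the result deterministic.
        nxt = min(unvisited, key=lambda k: (math.dist(last, points[k]), k))
        tour.append(nxt)
        unvisited.remove(nxt)
    return tour + [tour[0]]  # return to the start to close the circuit


def tour_length(points, tour):
    """Total straight-line length of the tour."""
    return sum(math.dist(points[a], points[b]) for a, b in zip(tour, tour[1:]))


# Hypothetical 2 x 3 grid of trees, 2 m apart.
trees = [(0, 0), (2, 0), (4, 0), (0, 2), (2, 2), (4, 2)]
edges = build_tree_graph(trees, max_gap=2.0)
waypoints = gap_waypoints(trees, edges)
circuit = nearest_neighbour_circuit(waypoints)
print(circuit, round(tour_length(waypoints, circuit), 2))
```

Visiting every waypoint once means the robot passes through every gap between neighbouring trees once. An exact or better heuristic solver could replace `nearest_neighbour_circuit` without changing the rest of the sketch.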

This article is published under a Creative Commons Attribution-NoDerivatives 4.0 International License.
