Paper:

Verification of Model Accuracy and Photo Shooting Efficiency of Large-Scale SfM for Flight Path Calculation

Sho Yamauchi and Keiji Suzuki

Future University Hakodate

116-2 Kamedanakano-cho, Hakodate, Hokkaido 041-8655, Japan

In drone photography of vast natural terrain, the exact location and shape of a subject are difficult to know in advance. Such environments also impose many time and location constraints, so it is desirable to capture images quickly by automatic flight. The authors previously proposed a method that determines the safety of an automatic flight photography plan in advance so that photographs can be captured quickly. That method models the subject from a safe zone using multiple drones and determines a safe path beforehand. However, its efficiency needed further improvement when the subject extends over a large area. In this study, to improve the efficiency of the safe flight path determination method for large-scale subjects, we developed a method that builds a structure from motion (SfM) model for each candidate number of photographs and verifies the accuracy of each resulting model in advance. We then determined the appropriate number of shots from these results, reducing the time and battery lost during shooting, and verified how far the total flight time could be reduced for a flight path that photographs a large-scale subject in Esan Prefectural Natural Park. For Esan Prefectural Natural Park, the difference in shooting time was not a problem for small objects but was significant for large objects. These results demonstrate the effectiveness of determining and applying, in advance, the number of shots that provides appropriate accuracy.
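The selection step described in the abstract, choosing a number of shots that gives appropriate accuracy, can be illustrated with a minimal sketch. This is not the authors' implementation. The function names, the "smallest count within a tolerance" rule, the linear flight-time model, and all numeric values are assumptions introduced only for illustration.

```python
from typing import Mapping, Optional


def smallest_sufficient_shot_count(
    error_by_shots: Mapping[int, float], tolerance: float
) -> Optional[int]:
    """Return the fewest photographs whose SfM model error is within tolerance.

    `error_by_shots` maps a candidate number of photographs to the model
    error measured in advance for that count (hypothetical input format).
    Returns None when no candidate meets the tolerance.
    """
    acceptable = [n for n, err in error_by_shots.items() if err <= tolerance]
    return min(acceptable) if acceptable else None


def estimated_flight_time(
    shots: int, seconds_per_shot: float, transit_seconds: float
) -> float:
    """Assumed linear model: path transit time plus a fixed overhead per shot."""
    return transit_seconds + shots * seconds_per_shot


# Placeholder values for illustration only; these are NOT results from the paper.
example_errors = {20: 0.9, 40: 0.4, 80: 0.35, 160: 0.34}
chosen = smallest_sufficient_shot_count(example_errors, tolerance=0.5)
```

Under the assumed linear time model, the cost of using more shots than necessary grows with the number of shots. This is in line with the abstract's observation that the difference matters mainly for large subjects.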

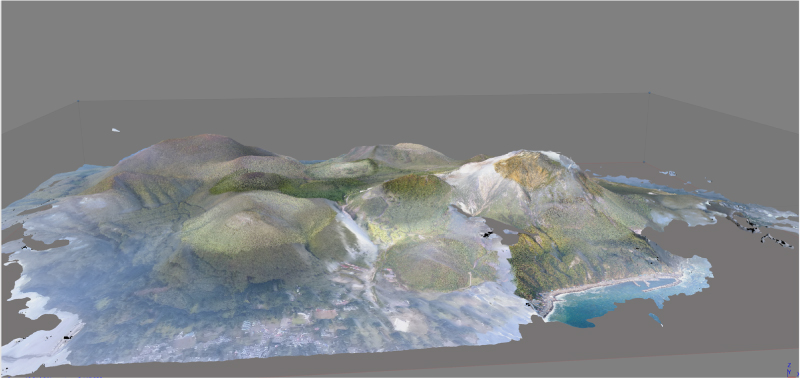

Model of Esan Prefectural Natural Park

This article is published under a Creative Commons Attribution-NoDerivatives 4.0 International License.