Paper:

Automatic Generation of Multidestination Routes for Autonomous Wheelchairs

Yusuke Mori and Katashi Nagao

Graduate School of Informatics, Nagoya University

Furo-cho, Chikusa-ku, Nagoya, Aichi 464-8603, Japan

To solve the problem of navigating autonomously to multiple destinations, one of the tasks in the Tsukuba Challenge 2019, this paper proposes a method for automatically generating an optimal travel route based on costs associated with routes. In the proposed method, route information is generated by playing back acquired driving data to perform self-localization, and the self-localization log is stored. In addition, a group of road surface images is acquired from the driving data. Route costs are computed in two ways: by texture analysis of the road surface images and by analysis of the self-localization log. The costs calculated by the two methods are combined and assigned to the route to produce cost-added route information. A route search over this cost-added route information then yields the minimum-cost multidestination route. We evaluated the proposed method by comparing it with a method that generates the route using only the distance cost. The results confirmed that the proposed method generates travel routes that account for safety while the autonomous wheelchair is being driven.
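The final step described above, searching cost-added route information for a minimum-cost multidestination route, can be illustrated with a minimal sketch. The abstract does not specify the cost-combination formula or the search algorithm, so everything here is an assumption made for illustration: the weighted combination in `combine_costs`, the use of Dijkstra's algorithm for pairwise costs, the exhaustive ordering of destinations, the open route that does not return to the start, and the toy graph with its node names and numbers.

```python
import heapq
from itertools import permutations


def combine_costs(distance, texture_cost, localization_cost,
                  w_texture=1.0, w_localization=1.0):
    # Hypothetical combination rule: scale the edge length by a weighted sum
    # of the two analysis-based costs. The paper's actual formula may differ.
    return distance * (1.0 + w_texture * texture_cost
                       + w_localization * localization_cost)


def build_graph(edges):
    # edges: (node_a, node_b, distance, texture_cost, localization_cost)
    graph = {}
    for a, b, dist, tex, loc in edges:
        cost = combine_costs(dist, tex, loc)
        graph.setdefault(a, {})[b] = cost
        graph.setdefault(b, {})[a] = cost  # assume routes are bidirectional
    return graph


def dijkstra(graph, source):
    # Standard Dijkstra: minimum combined cost from source to reachable nodes.
    best = {source: 0.0}
    queue = [(0.0, source)]
    while queue:
        cost, node = heapq.heappop(queue)
        if cost > best.get(node, float("inf")):
            continue  # stale queue entry
        for neighbor, edge_cost in graph.get(node, {}).items():
            new_cost = cost + edge_cost
            if new_cost < best.get(neighbor, float("inf")):
                best[neighbor] = new_cost
                heapq.heappush(queue, (new_cost, neighbor))
    return best


def best_multidestination_order(graph, start, destinations):
    # Try every visiting order; feasible only for a handful of destinations.
    points = [start] + list(destinations)
    pair_cost = {p: dijkstra(graph, p) for p in points}
    best_order, best_cost = None, float("inf")
    for order in permutations(destinations):
        stops = [start] + list(order)
        total = sum(pair_cost[a].get(b, float("inf"))
                    for a, b in zip(stops, stops[1:]))
        if total < best_cost:
            best_order, best_cost = list(order), total
    return best_order, best_cost


# Toy data (invented): the short A-B link has a rough surface and poor
# self-localization, so its combined cost is 5 * (1 + 2 + 1) = 20.
edges = [
    ("S", "A", 10.0, 0.0, 0.0),
    ("S", "B", 12.0, 0.0, 0.0),
    ("A", "B", 5.0, 2.0, 1.0),
    ("A", "C", 8.0, 0.0, 0.0),
    ("B", "C", 8.0, 0.0, 0.0),
]
graph = build_graph(edges)
order, cost = best_multidestination_order(graph, "S", ["A", "B"])
print(order, cost)
```

In this toy graph, the search reaches B from A through C (combined cost 16) rather than over the penalized direct link (combined cost 20), whereas a distance-only search would take the 5-unit direct link. This mirrors the comparison with the distance-only baseline described above, though the numbers are purely illustrative.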

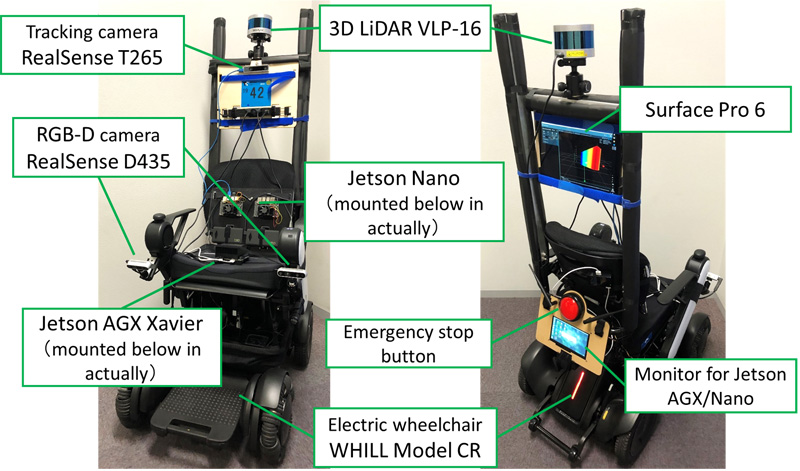

Autonomous wheelchair in this research

This article is published under a Creative Commons Attribution-NoDerivatives 4.0 International License.